Building an Evaluation Set for Egocentric Manipulation Foundation Models: A Technical Recipe from Open-Source Data

Written by

Aerin Kim

The evaluation bottleneck is the new data bottleneck. Here's how to construct a rigorous, reproducible eval suite for manipulation VLAs using only publicly available egocentric datasets.

Why This Post, Why Now

In May 2026, Genesis AI released GENE-26.5, positioning evaluation as one of five pillars of full-stack manipulation: "Evaluation must match the diversity, efficiency, reproducibility, and throughput required for foundation-model-scale iteration. You can only improve what you can measure." [¹]

They are not alone. Physical Intelligence's π0 [²], Google DeepMind's RT-2 [³], Figure's Helix [⁴], NVIDIA's GR00T N1 [⁵], and the Open X-Embodiment collaboration [⁶] have all hit the same wall: closed-loop evaluation on real robots does not scale. One robot, one operator, one trial — minutes per rollout, operator-days per checkpoint.

Simulation (e.g., Genesis World [⁷], NVIDIA Isaac Lab [⁸], ManiSkill3 [⁹]) is one answer. But it has a domain gap. The other answer — the one we argue is under-explored — is open-loop evaluation on held-out egocentric human data. No robot. No sim-to-real. No operator-days. Just well-curated human demonstrations, stratified along axes that predict downstream manipulation performance.

This post is a technical recipe for building that eval set from public data.

1. The Evaluation Problem, Formally

A manipulation foundation model $\pi_\theta(a_t \mid o_{\le t}, \ell)$ maps an observation history $o_{\le t}$ and a language instruction $\ell$ to a distribution over the action $a_t$. The question every VLA (Vision-Language-Action) team asks:

"Which checkpoint should I ship to the robot?"

Three evaluation regimes exist:

| Regime | Cost | Fidelity | Reproducibility |
|---|---|---|---|
| Real-robot closed-loop | ~$500/hr | Highest | Low |
| Simulation closed-loop | ~$1/hr | Medium (sim gap) | High |
| Human-data open-loop | ~$0.01/hr | Medium (no embodiment) | Highest |

Genesis AI reports that a single simulated evaluation datapoint costs 150 hours of robot execution, and that producing the same plot in the real world would require 2,700 human-robot hours [¹]. Open-loop eval on 1,000 held-out egocentric clips, by contrast, costs roughly 10 GPU-hours. The question isn't whether open-loop eval is cheap; it's whether it correlates with deployment performance.

It does, if the eval set is constructed correctly. That is the rest of this post.

2. The Five-Axis Framework (and Why It Maps Cleanly to Data)

Genesis AI proposes five axes for manipulation capability [¹]:

- Spatial Precision — positional accuracy of contacts

- Temporal Composition — timing and sequencing

- Contact Richness — number and diversity of simultaneous contacts

- Contact Coordination — synchronization across contacts

- Tool-Mediated Interaction — extending capability through intermediate objects

Manipulation difficulty isn't one-dimensional. "Pick up a block" and "pour coffee into a mug while holding the handle" are both pick-and-place in a trivial sense, but they stress completely different capabilities. To decide which checkpoint ships, we need to know *which axis* it improved on, not just whether the average success rate went up.

We decompose manipulation into these five axes. Each one has a data-side proxy that is computable from egocentric video using off-the-shelf models, so we can score any video clip (human or robot) without running a policy:

- Spatial Precision
  - Computable proxy: 6DoF object pose variance at contact, hand-object distance at grasp
  - Tooling: FoundationPose [¹⁰], HaMeR [¹¹]
  - Intuition: How tightly does the hand need to land? Slotting a USB cable tolerates ~1 mm of error; shoving a sock into a drawer tolerates ~5 cm. Low pose variance at the moment of contact means the task demanded precision. This is the axis that separates "grasping" from "insertion."

- Temporal Composition
  - Computable proxy: Subtask segmentation entropy, action boundary density
  - Tooling: LVU-style segmentation, VideoMAE v2 [¹²]
  - Intuition: How many distinct steps does the task chain together? Wiping a table is one motion repeated; making a sandwich is ten different motions in a specific order. High segmentation entropy means the policy has to *remember what it just did* and pick the right next thing, which is the regime where short-horizon policies quietly fail.

- Contact Richness
  - Computable proxy: Per-finger contact binary map cardinality
  - Tooling: ContactPose [¹³], 100DOH [¹⁴]
  - Intuition: How much of the hand is actually touching something? A pinch grasp lights up two fingertips; kneading dough lights up the whole palm and every finger. Richer contact means the policy can't get away with a two-finger gripper abstraction: it has to reason about a contact *surface*, not a contact *point*.

- Contact Coordination
  - Computable proxy: Bimanual mutual information, finger synergy entropy
  - Tooling: ARCTIC [¹⁵], H2O [¹⁶]
  - Intuition: Do the two hands (or the fingers) have to agree on a plan? Opening a jar is the canonical case: the left hand has to hold still *because* the right hand is twisting. High mutual information between the hands means one hand's trajectory is predictable from the other's, which is exactly where naive per-arm policies fall apart.

- Tool-Mediated Interaction
  - Computable proxy: Tool presence + tool-object contact chain depth
  - Tooling: EPIC-KITCHENS VISOR [¹⁷]
  - Intuition: Is the hand acting directly on the object, or through something else? Picking up a tomato is depth-1 (hand → tomato). Slicing a tomato with a knife is depth-2 (hand → knife → tomato). Stirring soup with a spoon held in tongs is depth-3. Each layer of indirection adds a rigid-body constraint the policy has to internalize, and most current VLAs degrade sharply past depth-2.
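To make the proxies concrete, here is a minimal Python sketch of four of them, computed from per-frame signals that the tools above could produce. Everything in it is an assumption for illustration: the input shapes, the function names, the histogram estimator for mutual information, and the reading of "segmentation entropy" as the Shannon entropy of sub-action labels. It is not the Genesis AI definition of any axis.

```python
import numpy as np


def contact_richness(contact_map: np.ndarray) -> float:
    """Mean number of fingers in contact per frame.

    contact_map: (T, 5) binary array, one column per finger, e.g. the
    thresholded output of a per-finger contact estimator (hypothetical format).
    """
    return float(contact_map.sum(axis=1).mean())


def bimanual_mutual_information(left: np.ndarray, right: np.ndarray, bins: int = 8) -> float:
    """Histogram estimate of I(L; R) in bits between two per-frame 1-D signals,
    e.g. left- and right-wrist speed. High values mean one hand is predictable
    from the other."""
    joint, _, _ = np.histogram2d(left, right, bins=bins)
    p_lr = joint / joint.sum()
    p_l = p_lr.sum(axis=1, keepdims=True)  # left-hand marginal, shape (bins, 1)
    p_r = p_lr.sum(axis=0, keepdims=True)  # right-hand marginal, shape (1, bins)
    nz = p_lr > 0
    return float((p_lr[nz] * np.log2(p_lr[nz] / (p_l @ p_r)[nz])).sum())


def segmentation_entropy(subaction_labels: list[str]) -> float:
    """Shannon entropy (bits) of the sub-action label distribution.
    One repeated motion gives 0; many distinct steps give a high value."""
    _, counts = np.unique(subaction_labels, return_counts=True)
    p = counts / counts.sum()
    return float(-(p * np.log2(p)).sum())


def tool_chain_depth(acts_on: dict[str, str], start: str = "hand") -> int:
    """Follow 'X acts on Y' links from the hand and count the hops.
    hand -> tomato is 1; hand -> knife -> tomato is 2."""
    depth, node, seen = 0, start, {start}
    while node in acts_on and acts_on[node] not in seen:
        node = acts_on[node]
        seen.add(node)
        depth += 1
    return depth
```

With these definitions, `tool_chain_depth({"hand": "tongs", "tongs": "spoon", "spoon": "soup"})` returns 3, matching the soup-and-tongs example above.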

The reason this framework "maps cleanly to data" is that every proxy above is extractable from a single RGB video: no robot, no teleop rig, no proprioception. That means we can score the 10,000+ hours of egocentric human data already sitting on the internet (Ego4D, EPIC-KITCHENS, HoloAssist) along the same axes we'd use to score a robot rollout. It is the same ruler for both sides of the human-to-robot gap.

This turns "manipulation capability" from a vibe into a set of measurable scalars you can stratify a dataset on.

3. Survey: What the Open-Source Corpus Actually Contains

Before we can build an eval set, we need to understand what the public egocentric corpus actually gives us, and more importantly, what it doesn't. Below is a scored walkthrough of the major datasets, rated on each of the five Genesis axes (0 = absent, 3 = strong coverage). Think of this as a shopping list: no single store carries everything you need, so we'll be composing across sources.

Ego4D [¹⁸] — 3,670 hours, egocentric. The giant of the space.

Scores: Spatial 1, Temporal 3, Contact Richness 2, Contact Coordination 2, Tool-Mediated 3.

Think of Ego4D as the "internet-scale" option — it gives you unmatched diversity of scenes (kitchens, workshops, gardens, labs) and long temporal context, but the 3D ground truth is thin. You'll see a person assembling a bike or kneading dough, but you won't get 6DoF object poses for free. Use Ego4D when you need coverage, not precision.

Ego-Exo4D [¹⁹] — 1,286 hours, egocentric + exocentric.

Scores: Spatial 2, Temporal 3, Contact Richness 2, Contact Coordination 2, Tool-Mediated 3.

The synchronized ego-exo views are gold for embodiment-transfer analysis: you can verify whether an egocentric policy's predicted hand pose is consistent with what a third-person observer would see. Domains skew toward skilled activities — cooking, bike repair, music, health — which makes it one of the better sources for tool-mediated clips.

EPIC-KITCHENS-100 [²⁰] — 100 hours, egocentric.

Scores: Spatial 1, Temporal 3, Contact Richness 2, Contact Coordination 2, Tool-Mediated 3.

The canonical kitchen-manipulation benchmark. Dense verb-noun annotations make it the go-to for temporal segmentation work, and the "unseen kitchens" split is a battle-tested distribution-shift protocol. If you need cutting, pouring, stirring, peeling at scale, start here.

EPIC-KITCHENS VISOR [¹⁷] — 36 hours, egocentric.

Scores: Spatial 2, Temporal 2, Contact Richness 2, Contact Coordination 2, Tool-Mediated 3.

A subset of EPIC with pixel-level hand and active-object segmentation. VISOR is where you go when you need contact masks — e.g., the exact pixels where a hand grips a knife handle versus brushes against it incidentally. Critical for contact-event F1 metrics.

HoloAssist [²¹] — 166 hours, egocentric.

Scores: Spatial 2, Temporal 3, Contact Richness 2, Contact Coordination 2, Tool-Mediated 3.

Captured on HoloLens during instructional interactions: one person performs a procedural task (assembling a printer, installing a GPU) while another guides them. Two things make this special — it captures mistakes and recoveries, which is rare in curated demo data, and the HoloLens provides reasonable 6DoF head pose. Use it for temporal composition and failure-mode coverage.

Assembly101 [²²] — 513 hours, egocentric + exocentric.

Scores: Spatial 2, Temporal 3, Contact Richness 3, Contact Coordination 3, Tool-Mediated 2.

People assembling and disassembling toy vehicles with 4 egocentric + 8 exocentric synchronized views. This is one of the few datasets where bimanual contact-coordination is genuinely dense — holding a chassis steady in one hand while snapping a wheel with the other is exactly the kind of skill that breaks most current VLAs.

ARCTIC [¹⁵] — 2.1M frames, exocentric.

Scores: Spatial 3, Temporal 2, Contact Richness 3, Contact Coordination 3, Tool-Mediated 1.

The gold standard for dexterous bimanual 3D ground truth. Full MANO hand meshes, articulated object poses, contact maps — all synchronized. The catch: it's captured in a studio with a fixed set of articulated objects (scissors, laptops, boxes), so diversity is limited. Use ARCTIC to calibrate your hand and contact estimators against ground truth, not as a diversity source.

HOT3D [²³] — 833 minutes, egocentric.

Scores: Spatial 3, Temporal 2, Contact Richness 2, Contact Coordination 3, Tool-Mediated 2.

Captured on Project Aria and Quest 3 glasses with motion-capture ground truth for hands and objects. Clips are short, but the 6DoF quality is the best you'll find in a truly egocentric dataset. This is where you verify whether your spatial-precision metric is measurable in realistic headset data, not just studio footage.

MECCANO [²⁴] — 20 hours, egocentric.

Scores: Spatial 1, Temporal 2, Contact Richness 2, Contact Coordination 2, Tool-Mediated 3.

Industrial-style assembly of a toy motorbike from a Meccano kit. Small, but dense in tool use: screwdrivers, wrenches, tiny screws. Useful specifically for the industrial tail of tool-mediated manipulation.

H2O [¹⁶] — 184k frames, egocentric.

Scores: Spatial 3, Temporal 1, Contact Richness 2, Contact Coordination 3, Tool-Mediated 1.

Two-handed manipulation with 3D hand pose and object 6DoF ground truth. Short clips and a limited object set, but the bimanual synchronization is clean and the data is fully egocentric, a rare combination.

DexYCB [²⁵] — 582k frames, third-person.

Scores: Spatial 3, Temporal 1, Contact Richness 2, Contact Coordination 1, Tool-Mediated 0.

Third-person and single-hand, so it's not ideal as an eval domain, but the calibration quality is excellent. Use it the way you'd use a ruler: to validate that your pose estimators are producing sensible numbers before you point them at Ego4D footage.

100DOH [¹⁴] — 131 days of video, mixed viewpoint.

Scores: Spatial 1, Temporal 1, Contact Richness 2, Contact Coordination 1, Tool-Mediated 2.

Massive-scale footage scraped from YouTube with hand-object contact state labels. Great for pretraining coverage of the "hands in contact" long tail, but too noisy for fine-grained evaluation: use it in the dedup index, not in the eval set itself.

Something-Something V2 [²⁶] — 220k clips, third-person.

Scores: Spatial 1, Temporal 2, Contact Richness 1, Contact Coordination 1, Tool-Mediated 2.

Classic action-recognition dataset with templated captions ("putting X into Y"). Useful for testing language-grounding on compositional instructions, less useful for fine motor evaluation.

RH20T [²⁷] — 110k episodes, egocentric + robot.

Scores: Spatial 2, Temporal 2, Contact Richness 2, Contact Coordination 2, Tool-Mediated 2.

Teleoperated robot data with paired human egocentric demonstrations. This is the bridge dataset — when you want to study correlation between human-data scores and robot-execution success, RH20T is one of the few places you can do it without collecting new data.

Key takeaways

Stepping back from the list, three patterns matter for eval design:

No single dataset scores 3 on all axes. Ego4D is broad but spatially coarse. ARCTIC has beautiful 3D bimanual labels but is staged and low-diversity. HOT3D has dense 6DoF but short durations. This is why any serious eval set must compose across datasets. Picking one source means you are implicitly only evaluating one axis well.

The long tail is missing. Tool-mediated, contact-coordinated, high-precision workflows — lab pipetting, wire harnessing, surgical suturing, electronics rework — are nearly absent from public data. This is exactly the tail Genesis AI highlights as commercially valuable [¹], and it is where your eval set will have the weakest coverage. Plan for it: budget a small, internally-collected supplement for the tail, and be explicit in your eval reports about which capability clusters are under-sampled.

Bimanual coverage is weak. Only ARCTIC [¹⁵], H2O [¹⁶], and portions of Assembly101 [²²] provide synchronized bimanual ground truth. If your policy targets dual-arm platforms (Aloha, the upcoming humanoids from Figure, 1X, Apptronik), your eval set will be bottlenecked by these three sources and you should weight them heavily in the stratified sample.

Design implication: a good egocentric eval set is a composition, not a selection. The recipe in Section 4 makes this composition systematic.
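One way to see why composition is unavoidable is to transcribe the survey scores and check coverage programmatically. The sketch below is a hypothetical illustration, not a prescribed selection procedure: it copies the 0 to 3 scores from this section and greedily adds datasets until every axis has at least one source scoring 3. Greedy set cover is a heuristic, so the mix it returns is not guaranteed to be the smallest possible one.

```python
AXES = ("spatial", "temporal", "contact_richness", "contact_coordination", "tool_mediated")

# Scores transcribed from the survey above, in AXES order (0 = absent, 3 = strong).
SURVEY_SCORES = {
    "Ego4D": (1, 3, 2, 2, 3),
    "Ego-Exo4D": (2, 3, 2, 2, 3),
    "EPIC-KITCHENS-100": (1, 3, 2, 2, 3),
    "EPIC-KITCHENS VISOR": (2, 2, 2, 2, 3),
    "HoloAssist": (2, 3, 2, 2, 3),
    "Assembly101": (2, 3, 3, 3, 2),
    "ARCTIC": (3, 2, 3, 3, 1),
    "HOT3D": (3, 2, 2, 3, 2),
    "MECCANO": (1, 2, 2, 2, 3),
    "H2O": (3, 1, 2, 3, 1),
    "DexYCB": (3, 1, 2, 1, 0),
    "100DOH": (1, 1, 2, 1, 2),
    "Something-Something V2": (1, 2, 1, 1, 2),
    "RH20T": (2, 2, 2, 2, 2),
}


def gain(best: tuple[int, ...], row: tuple[int, ...]) -> int:
    """How much adding a dataset raises the per-axis best coverage."""
    return sum(max(b, s) - b for b, s in zip(best, row))


def greedy_compose(scores: dict[str, tuple[int, ...]], target: int = 3):
    """Add the dataset with the largest coverage gain until every axis reaches target."""
    chosen: list[str] = []
    best = (0,) * len(AXES)
    while min(best) < target:
        remaining = [name for name in scores if name not in chosen]
        if not remaining:
            break
        name = max(remaining, key=lambda n: gain(best, scores[n]))
        if gain(best, scores[name]) == 0:
            break  # no remaining dataset improves any axis
        chosen.append(name)
        best = tuple(max(b, s) for b, s in zip(best, scores[name]))
    return chosen, dict(zip(AXES, best))
```

With these scores, the greedy pass picks Assembly101, then Ego4D, then ARCTIC. Note what the check cannot see: a 3 says a dataset covers an axis, not that it covers it at volume, which is why the bimanual sources still need heavy weighting in the stratified sample.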


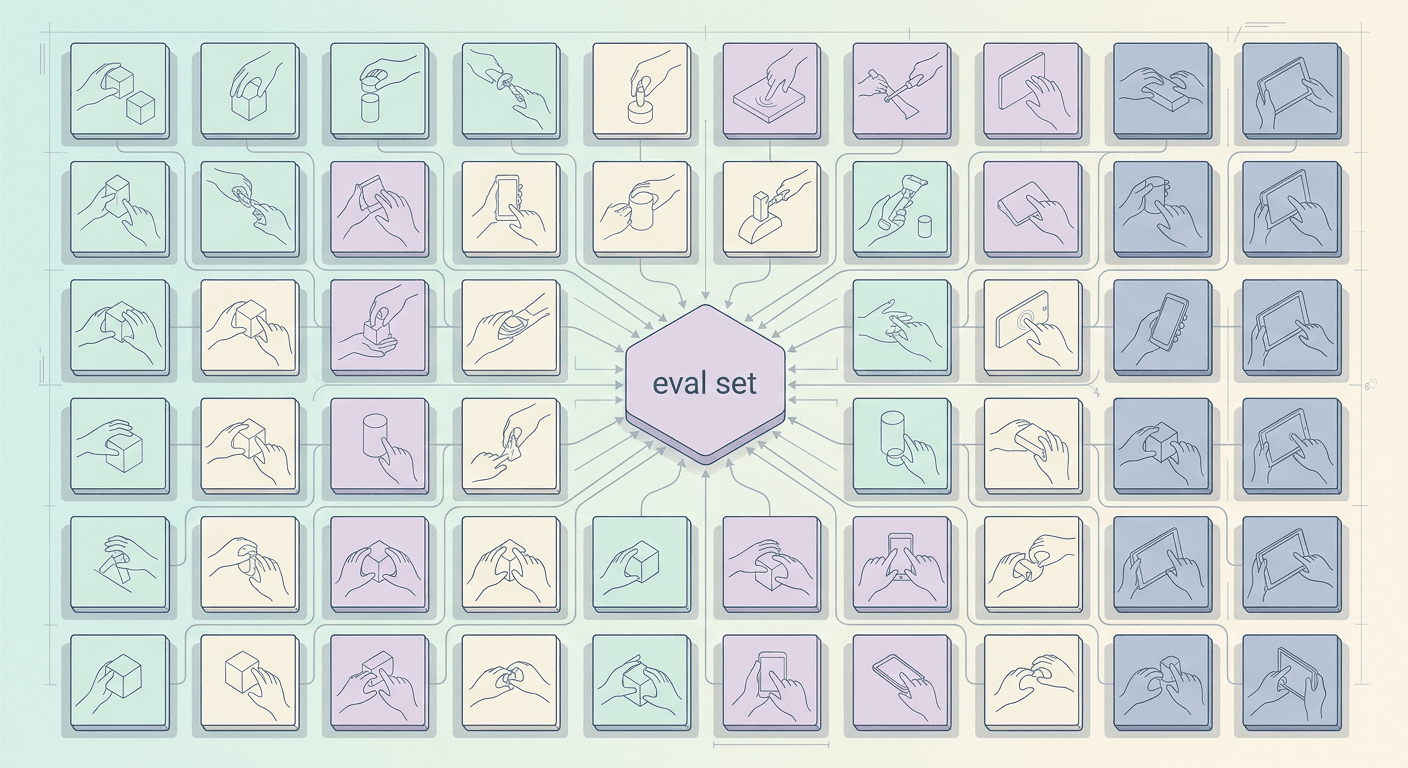

4. Eval Set Construction: The Recipe

If Section 3 was the shopping list, this is the kitchen. The goal is to end up with a few thousand carefully chosen video clips that, taken together, give you an honest score on each of the five capability axes, without accidentally testing the model on footage it already saw during training.

Step 1: Tag every candidate clip along the five axes

Start with the raw pool — say, a few hundred thousand clip candidates pulled from Ego4D, Ego-Exo4D, EPIC-KITCHENS, HoloAssist, Assembly101, ARCTIC, HOT3D, H2O, and MECCANO.

For each clip, you want a simple label on each axis — is it low, medium, or high on spatial precision? On bimanual coordination? On tool use? You don't need to do this by hand. A practical shortcut:

- Spatial precision — look at the smallest object the hand interacts with. A coin, a needle, a screw = high. A bowl or a mug = medium. A laptop lid = low. You can estimate this automatically from object bounding boxes where they exist, or from noun labels where they don't (e.g., EPIC's verb-noun annotations).

- Temporal length — simply clip duration and number of sub-actions. A 2-second "pick up cup" is low; a 90-second "replace printer cartridge" with 7 sub-steps is high.

- Contact richness — proxied by how often the hand-object contact state changes. Peeling a carrot has dozens of micro-contacts; carrying a box has one sustained grasp.

- Contact coordination — is only one hand active, or both? Are they doing the same thing (carrying a tray together) or different things (one stabilizes, one manipulates)? The latter is what scores high.

- Tool-mediated — does the action go through an intermediary object? Hands-on-food is low; hands-on-knife-on-food is high; hands-on-screwdriver-on-screw-in-hinge is highest.

At the end of this step, every clip carries five scores. Clip #48,221 might read "spatial=3, temporal=1, contact=3, coord=2, tool=3": fiddly precision work, short duration, one hand dominant with the other assisting, and tool use. That is a threading-a-needle-type clip.
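The shortcuts above can be turned into a small rule-based tagger. This is a sketch under loud assumptions: the `ClipMeta` fields, every cut point, and the mapping onto 1/2/3 are hypothetical choices for illustration, and in practice each field would come from existing annotations or off-the-shelf estimators.

```python
from dataclasses import dataclass


@dataclass
class ClipMeta:
    # All fields are hypothetical; populate them from annotations or estimators.
    smallest_object_cm: float  # characteristic size of the smallest object the hand touches
    duration_s: float
    n_subactions: int
    contact_changes: int       # number of hand-object contact state flips in the clip
    active_hands: int          # 1 or 2
    hands_same_action: bool    # True for symmetric work such as carrying a tray together
    tool_depth: int            # 1 = hand on object, 2 = hand -> tool -> object, 3 = deeper


def bucket(x: float, lo: float, hi: float) -> int:
    """1 (low) below lo, 2 (medium) below hi, otherwise 3 (high)."""
    return 1 if x < lo else (2 if x < hi else 3)


def tag_clip(c: ClipMeta) -> dict[str, int]:
    return {
        # Smaller objects demand more precision, so invert the size bucket.
        "spatial": 4 - bucket(c.smallest_object_cm, 3.0, 20.0),
        # Either a long clip or many sub-steps counts as temporally complex.
        "temporal": max(bucket(c.duration_s, 10.0, 60.0), bucket(c.n_subactions, 3, 6)),
        "contact": bucket(c.contact_changes, 5, 20),
        # One active hand -> 1; two hands doing the same thing -> 2; different roles -> 3.
        "coord": 1 if c.active_hands < 2 else (2 if c.hands_same_action else 3),
        "tool": min(max(c.tool_depth, 1), 3),
    }
```

For example, a 2-second one-handed "pick up cup" with a 10 cm mug tags as spatial 2, temporal 1, contact 1, coord 1, tool 1.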

Step 2: Stratify, don't average

The temptation is to sample uniformly from the pool and call it a day. We are not going to do that. A uniform sample of egocentric video is roughly 80% kitchen, 15% assembly, and 5% everything else, because that is what the source datasets contain. Our eval would then mostly measure "can the model cook," and a policy that is amazing at cooking but terrible at fine assembly would look great.

Instead, we define cells along the axes we care about and sample a fixed budget from each. For example, crossing spatial precision (low/med/high) with contact coordination (uni/bi) and tool use (yes/no) gives 3 × 2 × 2 = 12 cells, and we aim for ~50 clips per cell. Cells that are over-represented in the source data get downsampled; cells that are under-represented (bimanual high-precision tool use, the "surgical suturing" corner) get every clip you can find, plus a flag in your report that the cell is under-sampled.

The point: our final eval set is a balanced slice, even though the raw data isn't.
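A minimal sketch of the per-cell budget, assuming each clip already carries 1 to 3 tags like the ones from Step 1. The 12-cell definition follows the example above; the thresholds that collapse tags into cells and the fixed random seed are illustrative assumptions.

```python
import random
from collections import defaultdict
from itertools import product

SPATIAL = ("low", "med", "high")
COORD = ("uni", "bi")
TOOL = ("no", "yes")


def cell_of(tags: dict[str, int]) -> tuple[str, str, str]:
    """Collapse 1-3 axis tags into one of the 3 x 2 x 2 = 12 cells."""
    return (
        SPATIAL[tags["spatial"] - 1],
        "bi" if tags["coord"] >= 2 else "uni",
        "yes" if tags["tool"] >= 2 else "no",
    )


def stratified_sample(clips, per_cell: int = 50, seed: int = 0):
    """clips: iterable of (clip_id, tags). Returns {cell: [clip_id, ...]} and the
    list of cells that could not be filled to per_cell."""
    rng = random.Random(seed)
    pool = defaultdict(list)
    for clip_id, tags in clips:
        pool[cell_of(tags)].append(clip_id)
    sample, undersampled = {}, []
    for cell in product(SPATIAL, COORD, TOOL):
        ids = pool.get(cell, [])
        if len(ids) >= per_cell:
            sample[cell] = rng.sample(ids, per_cell)  # downsample over-represented cells
        else:
            sample[cell] = list(ids)                  # keep every clip we have...
            undersampled.append(cell)                 # ...and flag the cell for the report
    return sample, undersampled
```

The returned `undersampled` list is exactly what should appear next to the per-axis numbers in the final report.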

Step 3: Dedup against pretraining

This is the step most people skip and it's the one that silently inflates scores the most. If a model was pretrained on Ego4D, and your eval clips come from Ego4D, you are partially measuring memorization.

Two levels of dedup matter:

- Exact-clip dedup — obvious, but easy to forget when datasets get redistributed or re-clipped. Keep a hash of every video in your pretraining mix; refuse any eval clip whose source file hashes match.

- Near-duplicate dedup — the harder one. The same Ego4D scene re-cut into a 5-second window is still, effectively, training data. Embed every candidate eval clip and every pretraining clip using a generic video encoder (a frozen CLIP or VideoMAE is fine), and drop any eval candidate whose nearest pretraining neighbor is above a similarity threshold. A reasonable rule of thumb: if a human watching both clips would say "same kitchen, same person, same task," it's a near-duplicate.

Example of what this catches: two clips from the same Ego4D participant making two different sandwiches in the same kitchen on the same day. Different "clips" by Ego4D's index, but the model has essentially seen this environment. Drop one.
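Both levels of dedup can be sketched in a few lines. Assumptions, stated plainly: eval and pretraining clips have already been embedded with some frozen video encoder (that step is not shown), SHA-256 over the raw bytes stands in for whatever file hash your pipeline uses, and 0.85 is the cosine threshold from the worked example in Step 5, not a universal constant.

```python
import hashlib

import numpy as np


def sha256(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def l2_normalize(x: np.ndarray) -> np.ndarray:
    return x / np.linalg.norm(x, axis=1, keepdims=True)


def dedup(candidates, pretrain_hashes, cand_emb, pretrain_emb, threshold=0.85):
    """candidates: list of (clip_id, raw_bytes), aligned row-for-row with cand_emb (N, D).
    pretrain_hashes: set of hex digests; pretrain_emb: (M, D) embeddings."""
    # Cosine similarity of every candidate to every pretraining clip, keep the nearest.
    nearest = (l2_normalize(cand_emb) @ l2_normalize(pretrain_emb).T).max(axis=1)
    kept = []
    for (clip_id, data), sim in zip(candidates, nearest):
        if sha256(data) in pretrain_hashes:
            continue  # exact duplicate: byte-identical to a pretraining file
        if sim >= threshold:
            continue  # near duplicate: same scene, person, or task, re-cut or re-encoded
        kept.append(clip_id)
    return kept
```

A hash only catches byte-identical copies; re-encoding or re-clipping changes it, which is why the embedding check does most of the work. For a pretraining mix with millions of clips, the dense matrix product would typically be replaced by an approximate nearest-neighbor index.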

Step 4: Score with embodiment-agnostic metrics

Once we have a clean, stratified eval set, the model's job is to watch each clip and predict things like: where the hands are in 3D, which objects they contact, when contacts start and end, and what action is happening. We then compare predictions to ground truth.

The metrics should not assume any particular robot. Use:

- Hand trajectory error in meters (or in a normalized workspace unit) — not "gripper joint angles."

- Contact event F1 — did the model detect that a contact started within, say, 200ms of when it actually started? This is the temporal analog of precision/recall.

- Object 6DoF pose error where ground truth exists (from ARCTIC, HOT3D, H2O).

- Sub-action segmentation accuracy for the temporal axis — did the model correctly identify the 7 steps of "replace printer cartridge," and in the right order?

The reason for embodiment-agnosticism: a score that's tied to a specific gripper only predicts performance on that gripper. A score in meters and seconds predicts performance anywhere.

Step 5: Report per-axis, not just a headline number

The final eval report is a vector, not a scalar. A model might score spatial 0.71, temporal 0.58, contact 0.64, coordination 0.42, tool-use 0.55. That tells you something actionable ("this model is weakest on bimanual coordination") in a way that a single headline number, such as the 0.58 mean of those five scores, never can.

It also makes your eval honest. If the coordination cell was under-sampled because public data is thin there, you say so, next to the number. Readers can then decide how much to trust each axis independently.
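A sketch of such a report, using the example scores above. The per-axis clip counts and the 200-clip cutoff for the under-sampled flag are hypothetical.

```python
def axis_report(axis_scores: dict[str, float], clips_per_axis: dict[str, int], min_clips: int = 200) -> str:
    """One line per axis; flag axes backed by fewer than min_clips eval clips."""
    lines = []
    for axis, score in axis_scores.items():
        n = clips_per_axis[axis]
        flag = f"  [under-sampled: {n} clips]" if n < min_clips else ""
        lines.append(f"{axis:<13}{score:.2f}{flag}")
    mean = sum(axis_scores.values()) / len(axis_scores)
    lines.append(f"{'(mean)':<13}{mean:.2f}  <- what a single headline number would show")
    return "\n".join(lines)


print(axis_report(
    {"spatial": 0.71, "temporal": 0.58, "contact": 0.64, "coordination": 0.42, "tool-use": 0.55},
    {"spatial": 900, "temporal": 850, "contact": 700, "coordination": 120, "tool-use": 400},
))
```

The weakest axis and its thin sample size sit on the same line, so a reader cannot see one without the other.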

A concrete sample clip makes this tangible: a 47-second HoloAssist clip in which the wearer installs a GPU.

Tags: spatial=3 (aligning a PCIe slot), temporal=3 (several sub-steps: remove case panel, align card, seat card, secure screw, reconnect power), contact=3, coordination=3 (left hand stabilizes motherboard tray, right hand manipulates card and screwdriver), tool=3 (screwdriver).

Ground truth: HoloLens head pose + annotated sub-action boundaries.

Dedup check: nearest pretraining neighbor at cosine 0.61, below the 0.85 threshold — keep.

This single clip exercises all five axes simultaneously and lives in the "high-value corner" cell that's chronically under-sampled.

Multiply this kind of thinking across a few thousand clips, balanced across cells, and we have an eval set that actually tells us what our model can and can't do.

5. Metrics: What to Score Against

Since we're scoring models on a fixed dataset (open-loop) but what we actually care about is whether the robot succeeds when given control (closed-loop), our metrics need to be chosen so that a high score on the dataset reliably predicts success on the real robot. Below is a defensible menu of scores, each with provenance and an intuition for what the number actually captures.

Action MSE measures how far off, on average, the predicted action is from the human's action at every timestep. Think of it as: "if the robot copied the model's output blindly, how wrong would each command be?" It penalizes large deviations quadratically, so a single wild prediction dominates the score. Introduced for manipulation evaluation in RT-1 [³⁵], it is the cheapest baseline but also the most misleading — two trajectories can have low MSE and very different contact outcomes.

Action token accuracy treats the action space as a vocabulary (after discretization) and asks "did the model pick the same action-token the human picked, among its top-k guesses?" This is the metric RT-2 [³] reports, and it maps naturally onto autoregressive VLAs. It is more forgiving than MSE because it tolerates small numerical wiggles inside the same bin, but it is also coarser — it cannot tell a near-miss from a catastrophic miss.
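Both action-level metrics fit in a few lines. This sketch assumes continuous actions of shape `(T, D)`, uniform per-dimension binning between known bounds, and per-token logits from the model; the 256-bin default mirrors the discretization described for RT-2 but should be treated as an assumption for your own action space.

```python
import numpy as np


def action_mse(pred: np.ndarray, gt: np.ndarray) -> float:
    """Mean squared error over every timestep and action dimension."""
    return float(np.mean((np.asarray(pred, float) - np.asarray(gt, float)) ** 2))


def discretize(actions, low: float, high: float, n_bins: int = 256) -> np.ndarray:
    """Uniformly bin actions in [low, high] into integer tokens 0..n_bins-1."""
    a = np.clip((np.asarray(actions, float) - low) / (high - low), 0.0, 1.0)
    return np.minimum((a * n_bins).astype(int), n_bins - 1)


def topk_token_accuracy(logits: np.ndarray, gt_tokens: np.ndarray, k: int = 1) -> float:
    """logits: (N, n_bins) scores, one row per predicted action token; gt_tokens: (N,)."""
    topk = np.argsort(logits, axis=1)[:, -k:]  # indices of the k highest-scoring tokens
    return float(np.mean([g in row for g, row in zip(gt_tokens, topk)]))
```

The quadratic penalty is easy to see: a `(4, 2)` action sequence that is perfect except for a single 2.0 error scores an MSE of 0.5, the same as a sequence that is off by about 0.71 in every entry.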

Trajectory DTW (dynamic time warping) answers "did the model produce the right shape of motion, even if the timing is slightly off?" DTW stretches and compresses time to find the best alignment between predicted and ground-truth trajectories before measuring distance. This is essential because humans and models often execute the same motion at different tempos, and you do not want to punish a model for being 200 ms early. Widely used in the Diffusion Policy [³⁶] line of work.
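For reference, here is textbook dynamic time warping with a Euclidean local cost. It is an O(T1·T2) sketch written for clarity; production code would typically add a warping-window constraint or use an optimized library, and any normalization by path length is a further choice left out here.

```python
import numpy as np


def dtw_distance(pred, gt) -> float:
    """Minimum cumulative Euclidean cost over all monotone alignments of pred (T1, D) and gt (T2, D)."""
    pred = np.asarray(pred, float).reshape(len(pred), -1)
    gt = np.asarray(gt, float).reshape(len(gt), -1)
    n, m = len(pred), len(gt)
    acc = np.full((n + 1, m + 1), np.inf)
    acc[0, 0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            local = np.linalg.norm(pred[i - 1] - gt[j - 1])
            # Extend the cheapest of: repeat a gt frame, repeat a pred frame, or advance both.
            acc[i, j] = local + min(acc[i - 1, j], acc[i, j - 1], acc[i - 1, j - 1])
    return float(acc[n, m])
```

A prediction that runs one step early, `[0, 1, 2, 3, 3]` against ground truth `[0, 0, 1, 2, 3]`, has a DTW distance of 0 even though three of its five frames differ pointwise.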

Contact-event F1 asks "when the human's finger actually touched the object, did the model predict a touch at the same moment (within a small tolerance, typically ±3 frames)?" It is the precision-recall harmonic mean over contact onsets. This is the metric that most directly predicts whether a policy will grasp versus swipe past — and it is under-used in the field. The labeling convention comes from ContactPose [¹³].
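A minimal sketch, assuming contact onsets are given as frame indices and that each ground-truth onset can be matched by at most one prediction (greedy nearest match within the tolerance). At 30 fps, a ±3-frame tolerance is ±100 ms; the 200 ms window suggested in Section 4 would correspond to ±6 frames, so `tol` is best reported alongside the score.

```python
def contact_event_f1(pred_onsets: list[int], gt_onsets: list[int], tol: int = 3) -> float:
    """F1 over contact onsets; a prediction is a true positive if an unmatched
    ground-truth onset lies within +/- tol frames."""
    unmatched = sorted(gt_onsets)
    tp = 0
    for p in sorted(pred_onsets):
        in_window = [g for g in unmatched if abs(g - p) <= tol]
        if in_window:
            unmatched.remove(min(in_window, key=lambda g: abs(g - p)))  # nearest match
            tp += 1
    if tp == 0:
        return 0.0
    precision = tp / len(pred_onsets)
    recall = tp / len(gt_onsets)
    return 2 * precision * recall / (precision + recall)
```

For ground-truth onsets at frames 10, 50, and 90 and predictions at 12, 49, and 70, two predictions match, so precision, recall, and F1 are all 2/3.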

Hand MPJPE (mean per-joint position error) reports the average 3D distance, in millimeters, between each predicted hand joint and the ground-truth joint. Intuitively: "if you laid the predicted hand on top of the real hand, how far apart are the fingertips, knuckles, and wrist on average?" It is the standard yardstick from FreiHAND [³⁷] and is embodiment-agnostic because it lives in MANO space.
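MPJPE reduces to one line once predicted and ground-truth joints share a frame and units. The sketch below assumes joints in meters with shape `(..., J, 3)` (for example J = 21 hand keypoints). The optional root-relative variant, which removes global translation before scoring, is a common convention but a choice worth stating in the report. With J = 1 and the wrist as the only joint, the same function gives the hand-trajectory error from Step 4.

```python
from typing import Optional

import numpy as np


def mpjpe_mm(pred, gt, root: Optional[int] = None) -> float:
    """Mean per-joint position error in millimeters.

    pred, gt: (..., J, 3) joint positions in meters. If root is given, both
    skeletons are first translated so that joint `root` sits at the origin.
    """
    pred, gt = np.asarray(pred, float), np.asarray(gt, float)
    if root is not None:
        pred = pred - pred[..., root:root + 1, :]
        gt = gt - gt[..., root:root + 1, :]
    return float(np.linalg.norm(pred - gt, axis=-1).mean() * 1000.0)
```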

Object ADD-S (average distance, symmetric) measures how well the predicted 6DoF pose of an object matches the true pose, accounting for object symmetry (a symmetric cup rotated 180° is still correctly posed). The intuition: "if I rendered the object at the predicted pose and the true pose, how far apart are corresponding points on its surface, taking the best matching under symmetry?" Canonical reference: PoseCNN / YCB [³⁸].
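A brute-force sketch of ADD-S as defined for PoseCNN/YCB: transform the object's model points by the ground-truth and predicted poses, then average, over ground-truth-posed points, the distance to the closest predicted-posed point. It assumes rotations as 3×3 matrices and a modest number of model points (the pairwise distance matrix is N×N); large meshes would typically use a KD-tree instead.

```python
import numpy as np


def transform(points: np.ndarray, R: np.ndarray, t: np.ndarray) -> np.ndarray:
    """Apply rotation R (3x3) and translation t (3,) to points (N, 3)."""
    return points @ R.T + t


def add_s(model_points, R_pred, t_pred, R_gt, t_gt) -> float:
    """For each GT-posed model point, distance to the nearest predicted-posed point, averaged."""
    pts = np.asarray(model_points, float)
    p_gt = transform(pts, R_gt, t_gt)
    p_pred = transform(pts, R_pred, t_pred)
    dists = np.linalg.norm(p_gt[:, None, :] - p_pred[None, :, :], axis=-1)  # (N, N)
    return float(dists.min(axis=1).mean())
```

For a rotationally symmetric object, a prediction rotated by one symmetry step scores ADD-S = 0, whereas the non-symmetric ADD (distance between corresponding points) would penalize it.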

Grasp-intent top-1 is a classification metric: "given the scene just before contact, did the model predict the correct upcoming grasp type (pinch, power, tripod, lateral, etc.)?" It captures high-level manipulation semantics that MSE and DTW miss entirely. The taxonomy and labels come from GRAB [³⁹].

Subtask boundary IoU evaluates temporal segmentation: "did the model correctly identify when one sub-action ends and the next begins?" It measures the intersection-over-union between predicted and ground-truth temporal segments. High IoU means the model understands the structure of the task — that "pick up knife," "cut tomato," and "push slices aside" are distinct phases — which is exactly the temporal-composition axis from §2. Standard protocol from ActionFormer [⁴⁰].
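A simplified sketch: compute temporal IoU between (start, end) segments, then average, over ground-truth segments, the best IoU achieved by any predicted segment with the same label. This per-segment mean is an assumption chosen for readability; ActionFormer-style benchmarks instead report mean average precision at several tIoU thresholds.

```python
def segment_iou(a: tuple[float, float], b: tuple[float, float]) -> float:
    """Temporal IoU of two (start, end) intervals."""
    inter = max(0.0, min(a[1], b[1]) - max(a[0], b[0]))
    union = (a[1] - a[0]) + (b[1] - b[0]) - inter
    return inter / union if union > 0 else 0.0


def mean_best_iou(pred, gt) -> float:
    """pred, gt: lists of (label, start_s, end_s). A label the model never predicts scores 0."""
    if not gt:
        return 0.0
    best = [
        max((segment_iou((s, e), (ps, pe)) for pl, ps, pe in pred if pl == label), default=0.0)
        for label, s, e in gt
    ]
    return sum(best) / len(best)
```

In the knife-and-tomato example, predicting that the cut starts one second late (at 3 s instead of 2 s, with "pick up knife" stretched to match) drops the first two segments to 0.67 and 0.83 IoU, for a mean of about 0.83.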

The composite score we recommend is a weighted sum across these metrics, where the weights are not set by taste but calibrated by empirical correlation with closed-loop robot success on a small held-out calibration set (~100 real-robot trials). In other words: whichever open-loop metric most reliably predicts "the robot finished the task" gets the highest weight. This calibration-first philosophy is adapted from HELM [²⁹] for language models and from LIBERO's sim-real correlation study [⁴¹] for robotics.
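The calibration step can be sketched under explicit assumptions: every metric is oriented so that higher is better (negate MSE, DTW, MPJPE, and ADD-S first), each metric varies across checkpoints, you have per-checkpoint metric values plus matching closed-loop success rates from the calibration trials, and weights are taken proportional to each metric's positive Pearson correlation with success. That weighting rule is one simple choice, not the HELM or LIBERO procedure, and with only a handful of checkpoints the correlations will be noisy.

```python
import numpy as np


def calibrate_weights(metric_scores: dict[str, np.ndarray], robot_success: np.ndarray):
    """metric_scores: name -> (n_checkpoints,) open-loop scores, higher = better.
    robot_success: (n_checkpoints,) closed-loop success rates.
    Returns (weights, correlations); metrics that anti-correlate get weight 0."""
    corr = {name: float(np.corrcoef(vals, robot_success)[0, 1]) for name, vals in metric_scores.items()}
    positive = {name: max(c, 0.0) for name, c in corr.items()}
    total = sum(positive.values())
    if total == 0.0:
        raise ValueError("no metric correlates positively with robot success")
    return {name: c / total for name, c in positive.items()}, corr


def composite_score(metric_values: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted sum of one checkpoint's (higher-is-better) metric values."""
    return sum(w * metric_values[name] for name, w in weights.items())
```

Reporting the raw correlations next to the weights lets readers see when a metric was zeroed out because it moved opposite to robot success.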


6. Open Problems

The recipe in Sections 4 and 5 gets you a useful eval set today, but three big gaps remain. These are the places where the field as a whole still has work to do, and where a well-placed contribution could shift how everyone evaluates manipulation models.

1. We don't actually know how well open-loop scores predict real-robot success

Right now, if a model scores 0.72 on contact F1 and another scores 0.65, we assume the first one will do better when deployed on an actual robot. But nobody has published a careful study that proves it.

What's needed: take a fixed egocentric eval set, run 5 or more manipulation models through it, then also deploy those same models on a real robot across a matched set of tasks, and see whether the open-loop rankings match the closed-loop success rates. If they do, great, we know which metrics to trust. If they don't, we learn which metrics are misleading and by how much.

Until this study exists, any claim like "contact F1 matters more than trajectory error" is basically folklore — educated guessing dressed up in numbers. The simulation community has started doing this kind of calibration work with benchmarks like LIBERO [⁴¹] and SIMPLER [⁴³], which compare simulated performance to real-world performance. The egocentric version of that study simply hasn't been done yet, and it's the single highest-leverage thing someone could publish in this space.
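The core statistic for such a study could be as simple as a rank correlation between open-loop scores and closed-loop success across models. Below is a plain Kendall tau-a for illustration; `scipy.stats.kendalltau` provides a tie-corrected tau-b if SciPy is available. The five-model numbers are made up.

```python
from itertools import combinations


def kendall_tau_a(x: list[float], y: list[float]) -> float:
    """(concordant - discordant) / total pairs; tied pairs count as neither."""
    n = len(x)
    if n < 2:
        raise ValueError("need at least two models")
    concordant = discordant = 0
    for i, j in combinations(range(n), 2):
        s = (x[i] - x[j]) * (y[i] - y[j])
        if s > 0:
            concordant += 1
        elif s < 0:
            discordant += 1
    return (concordant - discordant) / (n * (n - 1) / 2)


# Hypothetical scores for five models: open-loop contact F1 vs. closed-loop success rate.
open_loop = [0.72, 0.65, 0.58, 0.61, 0.70]
closed_loop = [0.55, 0.40, 0.20, 0.35, 0.50]
print(kendall_tau_a(open_loop, closed_loop))  # 1.0: identical rankings
```

A tau near 1 would mean the open-loop metric ranks checkpoints the way the robot does; a tau near 0 would mean it is folklore.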

2. Contact labels exist, but not at the scale we need

Contact is the thing that matters most and is labeled the least. ContactPose [¹³] and GRAB [³⁹] give you beautifully dense contact annotations — essentially, heatmaps showing exactly which parts of the hand touched which parts of the object. But both datasets are small and captured in controlled settings. You can't train a general-purpose contact predictor on them and expect it to work on a kitchen video from Ego4D.

The open problem: can we automatically label contacts in egocentric video, at scale, with a useful estimate of how confident the label is? "The pinky touched the mug handle at frame 1,204, and I'm 85% sure" is far more useful than "contact: yes." Calibrated uncertainty lets downstream users filter to only the labels they trust, which is the difference between a noisy dataset and a usable one.

Getting this right would unlock the contact-richness axis for every dataset in Section 3 that currently scores a 2 because it lacks ground-truth contact labels.
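Whether a contact labeler's confidences are actually calibrated can be checked with expected calibration error (ECE): bin labels by stated confidence and compare each bin's average confidence with its accuracy against ground truth. ECE is a standard diagnostic chosen here for illustration; the equal-width binning and 10 bins are assumptions.

```python
import numpy as np


def expected_calibration_error(confidence, correct, n_bins: int = 10) -> float:
    """confidence: (N,) probability the labeler assigned to its own label.
    correct: (N,) 1 if that label matched ground truth, else 0."""
    confidence = np.asarray(confidence, float)
    correct = np.asarray(correct, float)
    edges = np.linspace(0.0, 1.0, n_bins + 1)
    bin_idx = np.digitize(confidence, edges[1:-1], right=True)  # values in 0 .. n_bins-1
    ece = 0.0
    for b in range(n_bins):
        in_bin = bin_idx == b
        if in_bin.any():
            # Weight each bin's |accuracy - confidence| gap by its share of samples.
            ece += in_bin.mean() * abs(correct[in_bin].mean() - confidence[in_bin].mean())
    return float(ece)
```

A labeler that says "85% sure" and is right 85% of the time scores an ECE near 0; one that says 85% but is right only half the time scores 0.35.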

3. Nobody has mapped out the space of indirect tool use

Direct tool use — hand holds screwdriver, screwdriver turns screw — is at least sometimes labeled. Indirect tool use is almost never labeled and barely even defined.

An example: you're dicing tomatoes, and after they're cut you use the flat of the knife blade to scoop them sideways into a pan. The knife is no longer cutting — it's acting as a spatula. This is the Genesis cooking demo moment [¹] that sets human-level dexterity apart from "can operate one tool for one purpose."

More examples of tool-chain depth:

- Using a pen cap to press a reset button (cap-as-stylus).

- Using a fork to stabilize a piece of meat while cutting (fork-as-clamp).

- Using the butt of a screwdriver to tap a stuck part loose (screwdriver-as-hammer).

- Using tweezers held in one hand to guide a wire held by tweezers in the other hand (tool-on-tool).

Each of these is a different depth of tool chain, and none of them have systematic labels in any public dataset. This is the long tail of real manipulation — the part where humans are effortlessly general and robots are hopeless — and it is almost entirely invisible to our current evaluation pipelines.

A useful first step would be a taxonomy: a small, principled set of categories for indirect tool use (tool-as-substitute, tool-on-tool, multi-purpose tool within one task, etc.), plus a few hundred hand-annotated clips per category. Even a modest labeled seed would let the rest of the field bootstrap toward the tail.

7. Conclusion

Robotics labs are about to release manipulation foundation models faster than anyone can realistically test them on physical robots: real-world testing is slow, expensive, and hard to scale. Simulation helps fill the gap, but it's not enough on its own.

That's where egocentric open-loop evaluation comes in. The idea is simple: instead of running a model on a real robot, you show it first-person human videos (the kind captured by head-mounted cameras) and check whether its predictions about hands, objects, and actions match what actually happened in the video. No robot required, no physics simulator needed — just video in, predictions out, scored against ground truth.

Done well, this approach has four ingredients:

- Rigorous: clear protocols, reproducible splits, honest reporting of what's measured and what isn't.

- Stratified by capability: separate scores for spatial precision, temporal reasoning, contact richness, bimanual coordination, and tool use — so a model that's great at cutting but terrible at threading a needle can't hide behind a single average.

- Deduped against pretraining: making sure the eval clips weren't already seen during pretraining; otherwise you're just measuring memorization.

- Embodiment-agnostic: metrics that don't assume a specific robot gripper or arm, so the same score means something whether you're targeting an ALOHA, a Franka, or a humanoid.
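Three of these ingredients are mechanical enough to sketch together:

- a hash-based split, so that clip membership is reproducible;
- a cosine-similarity filter that drops eval clips too close to any pretraining clip;
- per-capability reporting instead of a single average.

This is a minimal sketch under stated assumptions. Clip embeddings are assumed to be precomputed by some video encoder (VideoMAE V2 would be one option). The function names, the `salt` version tag, and the 0.95 similarity threshold are hypothetical and would need tuning against real near-duplicates.

```python
import hashlib
import math
from collections import defaultdict
from statistics import mean
from typing import Dict, Iterable, List, Sequence, Tuple


def assign_split(clip_id: str, eval_fraction: float = 0.1, salt: str = "eval-v1") -> str:
    """Deterministic split: the same clip_id always lands in the same split.

    Hashing instead of shuffling keeps membership stable across machines and
    dataset re-orderings. `salt` is a hypothetical version tag.
    """
    digest = hashlib.sha256(f"{salt}:{clip_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 10_000  # bucket in 0..9999
    return "eval" if bucket < eval_fraction * 10_000 else "train"


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def dedup_against_pretraining(
    eval_embs: Dict[str, Sequence[float]],
    pretrain_embs: Iterable[Sequence[float]],
    threshold: float = 0.95,
) -> List[str]:
    """Keep eval clips whose similarity to every pretraining clip is below threshold.

    Brute force O(N * M); at real scale, swap in an approximate nearest-neighbor index.
    """
    pretrain = list(pretrain_embs)
    return [
        clip_id for clip_id, emb in eval_embs.items()
        if all(cosine(emb, p) < threshold for p in pretrain)
    ]


def stratified_report(results: Iterable[Tuple[str, str, float]]) -> Dict[str, float]:
    """Mean score per capability axis from (clip_id, capability, score) triples."""
    by_axis: Dict[str, List[float]] = defaultdict(list)
    for _clip_id, axis, score in results:
        by_axis[axis].append(score)
    return {axis: mean(scores) for axis, scores in sorted(by_axis.items())}
```

The choice of hashing over shuffling matters most here. When new source videos are added later, no existing clip moves between splits. Only bumping `salt` deliberately reshuffles everything.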

Put together, this is the cheapest and most scalable way to rank manipulation models before they ever touch a real robot.

References

- Genesis AI Team. GENE-26.5: Advancing Robotic Manipulation to Human Level. Genesis AI Blog, May 2026. https://genesis.ai/blog/gene-26-5-advancing-robotic-manipulation-to-human-level

- Physical Intelligence. π0: A Vision-Language-Action Flow Model for General Robot Control. 2024. https://www.physicalintelligence.company/blog/pi0

- Brohan et al. RT-2: Vision-Language-Action Models Transfer Web Knowledge to Robotic Control. Google DeepMind, 2023. https://robotics-transformer2.github.io/

- Figure AI. Helix: A Generalist Vision-Language-Action Model for Humanoid Control. 2025. https://www.figure.ai/news/helix

- NVIDIA. GR00T N1: An Open Foundation Model for Generalist Humanoid Robots. 2025. https://developer.nvidia.com/isaac/gr00t

- Open X-Embodiment Collaboration. Open X-Embodiment: Robotic Learning Datasets and RT-X Models. ICRA 2024. https://robotics-transformer-x.github.io/

- Genesis Embodied AI. Genesis: A Generative and Universal Physics Engine. 2024. https://genesis-embodied-ai.github.io/

- NVIDIA. Isaac Lab. https://isaac-sim.github.io/IsaacLab/

- Tao et al. ManiSkill3: GPU-Parallelized Robotic Simulation and Rendering. 2024. https://www.maniskill.ai/

- Wen et al. FoundationPose: Unified 6D Pose Estimation for Novel Objects. CVPR 2024. https://nvlabs.github.io/FoundationPose/

- Pavlakos et al. Reconstructing Hands in 3D with Transformers (HaMeR). CVPR 2024. https://geopavlakos.github.io/hamer/

- Wang et al. VideoMAE V2: Scaling Video Masked Autoencoders. CVPR 2023. https://github.com/OpenGVLab/VideoMAEv2

- Brahmbhatt et al. ContactPose: A Dataset of Grasps with Object Contact and Hand Pose. ECCV 2020. https://contactpose.cc.gatech.edu/

- Shan et al. Understanding Human Hands in Contact at Internet Scale (100DOH). CVPR 2020. https://fouheylab.eecs.umich.edu/~dandans/projects/100DOH/

- Fan et al. ARCTIC: A Dataset for Dexterous Bimanual Hand-Object Manipulation. CVPR 2023. https://arctic.is.tue.mpg.de/

- Kwon et al. H2O: Two Hands Manipulating Objects for First-Person Interaction Recognition. ICCV 2021. https://taeinkwon.com/projects/h2o/

- Darkhalil et al. EPIC-KITCHENS VISOR Benchmark. NeurIPS 2022. https://epic-kitchens.github.io/VISOR/

- Grauman et al. Ego4D: Around the World in 3,000 Hours of Egocentric Video. CVPR 2022. https://ego4d-data.org/

- Grauman et al. Ego-Exo4D: Understanding Skilled Human Activity from First- and Third-Person Perspectives. CVPR 2024. https://ego-exo4d-data.org/

- Damen et al. Rescaling Egocentric Vision: EPIC-KITCHENS-100. IJCV 2022. https://epic-kitchens.github.io/

- Wang et al. HoloAssist: An Egocentric Human Interaction Dataset for Interactive AI Assistants. ICCV 2023. https://holoassist.github.io/

- Sener et al. Assembly101: A Large-Scale Multi-View Video Dataset for Understanding Procedural Activities. CVPR 2022. https://assembly-101.github.io/

- Banerjee et al. HOT3D: An Egocentric Dataset for 3D Hand and Object Tracking. Meta Reality Labs, 2024. https://facebookresearch.github.io/hot3d/

- Ragusa et al. MECCANO: A Multimodal Egocentric Dataset for Humans Behaviour in the Industrial-like Domain. WACV 2021. https://iplab.dmi.unict.it/MECCANO/

- Chao et al. DexYCB: A Benchmark for Capturing Hand Grasping of Objects. CVPR 2021. https://dex-ycb.github.io/

- Goyal et al. The "Something Something" Video Database for Learning and Evaluating Visual Common Sense. ICCV 2017. https://developer.qualcomm.com/software/ai-datasets/something-something

- Fang et al. RH20T: A Comprehensive Robotic Dataset for Learning Diverse Skills in One-Shot. ICRA 2024. https://rh20t.github.io/

- Hoffmann et al. Training Compute-Optimal Large Language Models (Chinchilla). DeepMind, 2022. https://arxiv.org/abs/2203.15556

- Liang et al. Holistic Evaluation of Language Models (HELM). Stanford CRFM, 2022. https://crfm.stanford.edu/helm/

- Miech et al. HowTo100M: Learning a Text-Video Embedding by Watching Hundred Million Narrated Clips. ICCV 2019. https://www.di.ens.fr/willow/research/howto100m/

- Lozhkov et al. The Stack v2. BigCode Project, 2024. https://www.bigcode-project.org/docs/about/the-stack/

- Lozhkov et al. StarCoder 2 and The Stack v2: The Next Generation. BigCode, 2024. https://arxiv.org/abs/2402.19173

- Koh et al. WILDS: A Benchmark of in-the-Wild Distribution Shifts. ICML 2021. https://wilds.stanford.edu/

- Kim et al. OpenVLA: An Open-Source Vision-Language-Action Model. 2024. https://openvla.github.io/

- Brohan et al. RT-1: Robotics Transformer for Real-World Control at Scale. Google, 2022. https://robotics-transformer1.github.io/

- Chi et al. Diffusion Policy: Visuomotor Policy Learning via Action Diffusion. RSS 2023. https://diffusion-policy.cs.columbia.edu/

- Zimmermann et al. FreiHAND: A Dataset for Markerless Capture of Hand Pose. ICCV 2019. https://lmb.informatik.uni-freiburg.de/projects/freihand/

- Xiang et al. PoseCNN: A Convolutional Neural Network for 6D Object Pose Estimation in Cluttered Scenes. RSS 2018. https://rse-lab.cs.washington.edu/projects/posecnn/

- Taheri et al. GRAB: A Dataset of Whole-Body Human Grasping of Objects. ECCV 2020. https://grab.is.tue.mpg.de/

- Zhang et al. ActionFormer: Localizing Moments of Actions with Transformers. ECCV 2022. https://github.com/happyharrycn/actionformer_release

- Liu et al. LIBERO: Benchmarking Knowledge Transfer for Lifelong Robot Learning. NeurIPS 2023. https://libero-project.github.io/

- Romero et al. Embodied Hands: Modeling and Capturing Hands and Bodies Together (MANO). SIGGRAPH Asia 2017. https://mano.is.tue.mpg.de/

- Li et al. SIMPLER: Evaluating Real-World Robot Manipulation Policies in Simulation. CoRL 2024. https://simpler-env.github.io/