Finetuning LLMs with Direct Preference Optimization (DPO): A Simpler Alternative to RLHF

Written by

Jay Kim

RLHF made ChatGPT possible. DPO makes the same alignment possible without the reward model, without the PPO loop, and without the engineering nightmare. This guide covers the theory, the math, the code, and the hard-won practical lessons for training preference-aligned language models.

Introduction

The story of modern language model alignment has two chapters. The first chapter is Reinforcement Learning from Human Feedback (RLHF), the technique that transformed GPT-3 from an impressive autocompleter into ChatGPT, a system that could follow instructions, refuse harmful requests, and produce outputs humans actually preferred. The second chapter is the realization that most of the complexity in RLHF was unnecessary.

RLHF works, but it works at enormous cost. The standard pipeline requires training a separate reward model on human preference data, then using that reward model to provide a training signal for the language model through Proximal Policy Optimization (PPO), a reinforcement learning algorithm that is notoriously unstable, memory-hungry, and sensitive to hyperparameters. You end up running four models simultaneously during training: the policy model being trained, a reference copy of the policy, the reward model, and a value function estimator. The infrastructure requirements are staggering. The debugging experience is miserable. And the number of hyperparameters that need careful tuning is enough to make any practitioner reconsider their career choices.

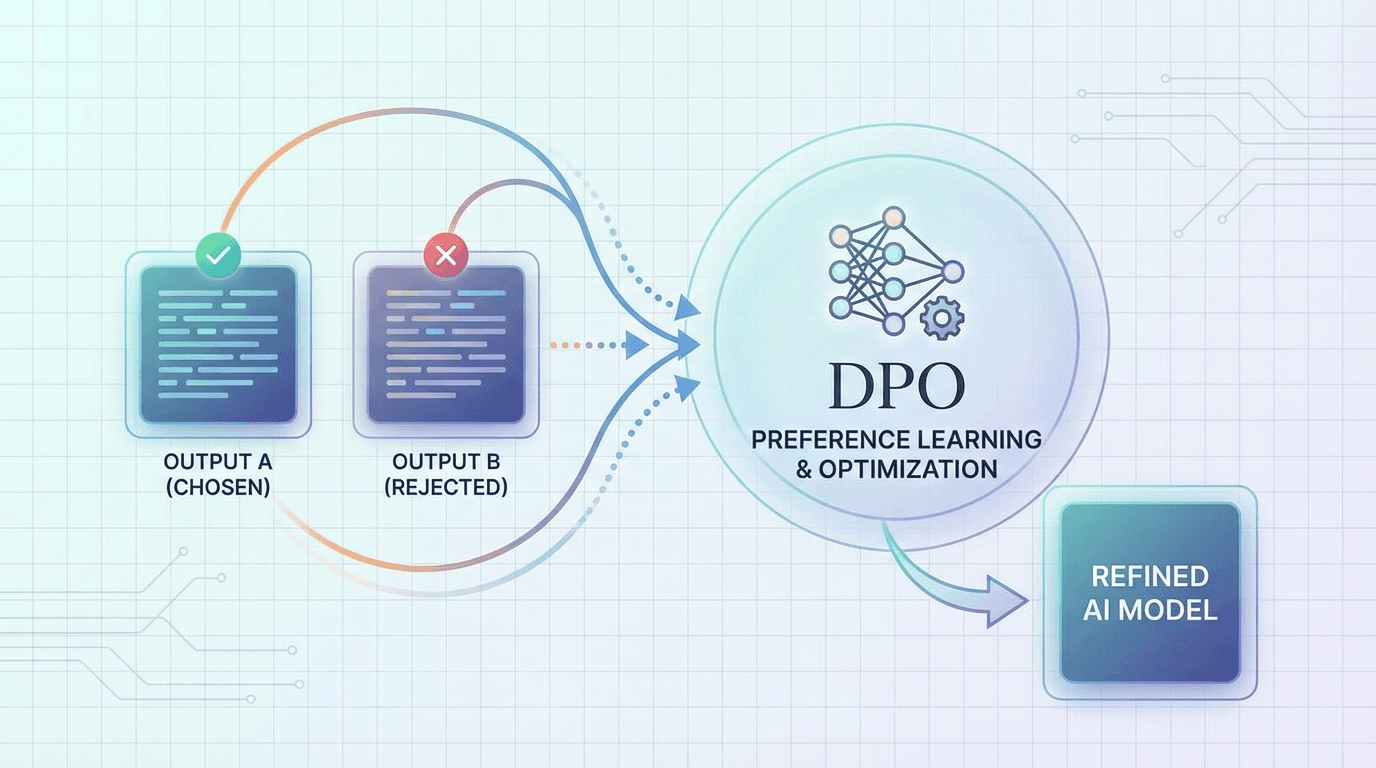

Direct Preference Optimization (DPO), introduced by Rafailov et al. in May 2023, eliminates most of this complexity. The core insight is elegant: you don't need to explicitly train a reward model and then optimize a policy against it. Instead, you can derive a closed-form mapping between the optimal policy and the reward function, and rearrange the objective so that the language model is trained directly on preference data using a simple classification-style loss. No reward model. No PPO. No value function. No reinforcement learning at all.

The result is a training procedure that looks and feels like supervised fine-tuning. You have pairs of outputs where one is preferred over the other. You compute a loss that encourages the model to assign higher probability to the preferred output and lower probability to the rejected output, relative to a frozen reference model. You backpropagate and update. That's it.

This article is a practical guide to DPO. We'll start with enough theory to understand why DPO works and where it might fail. Then we'll build up a complete training pipeline with real code, covering data preparation, training, evaluation, and the many practical decisions that determine whether your DPO run succeeds or wastes a week of GPU time.

The RLHF Pipeline: What DPO Replaces

To understand DPO, you first need to understand the system it simplifies. The standard RLHF pipeline has three stages, and each one introduces its own complexity.

Stage 1: Supervised Fine-Tuning (SFT)

You start with a pretrained language model and fine-tune it on high-quality demonstration data. This teaches the model the format and style of desired outputs. If you're building a chatbot, your SFT data consists of (instruction, response) pairs written or curated by humans. The SFT stage uses standard cross-entropy loss and is relatively straightforward. Both RLHF and DPO share this stage.

Stage 2: Reward Model Training

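You collect preference data: for a given prompt, you generate two (or more) responses, and a human annotator indicates which response is better. You then train a reward model, typically initialized from the SFT model, to predict a scalar reward for any (prompt, response) pair. The reward model is trained using the Bradley-Terry preference model, which assumes that the probability of one response being preferred over another is a sigmoid function of the difference in their rewards. Writing $y_w$ for the preferred response, $y_l$ for the rejected one, and $\sigma$ for the logistic sigmoid, the reward model is fit by maximizing the log of this probability over the preference dataset:

$$P(y_w \succ y_l \mid x) = \sigma\big(r(x, y_w) - r(x, y_l)\big)$$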
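A minimal PyTorch sketch of that training objective: the loss is the negative log-likelihood of the observed preferences, -log σ(r_chosen - r_rejected), averaged over the batch. The reward tensors below are made-up stand-ins for the scalar outputs of a hypothetical reward model; this illustrates the Bradley-Terry objective, not any particular library's implementation.

```python
import torch
import torch.nn.functional as F


def bradley_terry_loss(rewards_chosen: torch.Tensor, rewards_rejected: torch.Tensor) -> torch.Tensor:
    """Negative log-likelihood of the observed preferences under Bradley-Terry.

    P(chosen > rejected) = sigmoid(r_chosen - r_rejected), so the per-pair loss is
    -log sigmoid(r_chosen - r_rejected); average it over the batch.
    """
    return -F.logsigmoid(rewards_chosen - rewards_rejected).mean()


# Hypothetical scalar rewards for three preference pairs:
# correctly ordered, tied, and wrongly ordered.
r_chosen = torch.tensor([1.2, 0.3, -0.5])
r_rejected = torch.tensor([0.2, 0.3, 0.5])

loss = bradley_terry_loss(r_chosen, r_rejected)
p_prefer = torch.sigmoid(r_chosen - r_rejected)  # model's P(chosen preferred)
print(f"loss={loss.item():.4f}", p_prefer)
```

The tied pair contributes ln 2, and the wrongly ordered pair dominates the loss, which is what pushes the reward model toward ranking chosen responses higher.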

The reward model training itself is stable and well-understood. The problem is that you now have a separate model to maintain, and any errors in the reward model get amplified during the RL stage.

Stage 3: RL Optimization (PPO)

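This is where the pain lives. You use the reward model to provide a training signal, and you optimize the language model $\pi_\theta$ using PPO with a KL-divergence penalty that keeps the policy from drifting too far from the SFT model, which serves as the reference policy $\pi_{\mathrm{ref}}$:

$$\max_{\pi_\theta} \; \mathbb{E}_{x \sim \mathcal{D},\; y \sim \pi_\theta(\cdot \mid x)}\big[r(x, y)\big] \;-\; \beta\, \mathbb{D}_{\mathrm{KL}}\big[\pi_\theta(y \mid x) \,\|\, \pi_{\mathrm{ref}}(y \mid x)\big]$$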

The KL penalty is crucial. Without it, the model quickly learns to exploit the reward model, generating outputs that score high on the reward function but are degenerate or nonsensical, a phenomenon known as reward hacking. The hyperparameter β controls the strength of this constraint.
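In practice the penalty is often folded into the reward that PPO sees. A rough sketch, assuming per-token log-probabilities for a sampled response are already available from the policy and the frozen reference: the summed log-ratio over response tokens is a single-sample estimate of the KL term, and β times it is subtracted from the reward model's score. Real PPO implementations typically distribute this penalty per token; collapsing it to one number per sequence is a simplification for illustration.

```python
import torch


def kl_penalized_reward(
    reward: torch.Tensor,         # [batch] scalar scores from the reward model
    policy_logps: torch.Tensor,   # [batch, seq] per-token log-probs under the policy
    ref_logps: torch.Tensor,      # [batch, seq] per-token log-probs under the reference
    response_mask: torch.Tensor,  # [batch, seq] 1.0 for response tokens, 0.0 elsewhere
    beta: float = 0.1,
) -> torch.Tensor:
    """Sequence-level reward r(x, y) - beta * (single-sample KL estimate)."""
    # Sum of log pi(y_t) - log pi_ref(y_t) over response tokens only.
    kl_estimate = ((policy_logps - ref_logps) * response_mask).sum(dim=-1)
    return reward - beta * kl_estimate


reward = torch.tensor([2.0, 2.0])
policy_logps = torch.tensor([[-1.0, -1.0, -1.0], [-0.2, -0.2, -0.2]])
ref_logps = torch.tensor([[-1.0, -1.0, -1.0], [-1.2, -1.2, -1.2]])
mask = torch.tensor([[0.0, 1.0, 1.0], [0.0, 1.0, 1.0]])  # first token is the prompt

shaped = kl_penalized_reward(reward, policy_logps, ref_logps, mask, beta=0.1)
print(shaped)
```

Both responses get the same reward-model score, but the second has drifted from the reference (log-ratio of 1 nat per response token), so its shaped reward drops from 2.0 to about 1.8.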

PPO requires generating responses from the current policy at each training step (which means running inference during training), estimating advantages using a value function (which requires a separate value head or model), and managing multiple gradient updates per batch with careful clipping. The memory footprint is roughly 4x that of standard fine-tuning, and the training dynamics are fragile. Small changes to the learning rate, batch size, KL coefficient, or clipping parameters can cause training to diverge.

The DPO Insight: Skipping the Middle Step

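The key mathematical insight behind DPO is that the optimization problem in Stage 3 has a closed-form solution. Given a reward function $r(x, y)$ and a reference policy $\pi_{\mathrm{ref}}$, the optimal policy is:

$$\pi^*(y \mid x) = \frac{1}{Z(x)}\, \pi_{\mathrm{ref}}(y \mid x) \exp\!\left(\frac{1}{\beta}\, r(x, y)\right)$$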

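where $Z(x) = \sum_y \pi_{\mathrm{ref}}(y \mid x) \exp\!\big(r(x, y)/\beta\big)$ is a normalizing constant (the partition function). This is a well-known result from the KL-constrained optimization literature. What Rafailov et al. noticed is that you can take the logarithm of both sides and rearrange this expression to solve for the reward:

$$r(x, y) = \beta \log \frac{\pi^*(y \mid x)}{\pi_{\mathrm{ref}}(y \mid x)} + \beta \log Z(x)$$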

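Now substitute this expression for the reward back into the Bradley-Terry preference model. The partition function $Z(x)$ cancels out (it depends only on the prompt, not the response), and for a preferred response $y_w$ and a rejected response $y_l$ you get:

$$P(y_w \succ y_l \mid x) = \sigma\left(\beta \log \frac{\pi^*(y_w \mid x)}{\pi_{\mathrm{ref}}(y_w \mid x)} - \beta \log \frac{\pi^*(y_l \mid x)}{\pi_{\mathrm{ref}}(y_l \mid x)}\right)$$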
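The cancellation can be checked numerically on a toy problem in which the "response space" is just four discrete responses. The reference distribution and rewards below are arbitrary numbers chosen for illustration. The sketch builds π* from the closed form, confirms that β log(π*/π_ref) recovers the reward up to the constant β log Z(x), and shows that pairwise differences, the only quantity Bradley-Terry uses, match the true reward differences exactly.

```python
import numpy as np

beta = 0.1
pi_ref = np.array([0.4, 0.3, 0.2, 0.1])   # reference policy over 4 toy responses
reward = np.array([0.5, 1.0, -0.2, 0.3])  # arbitrary rewards r(x, y)

# Closed-form optimum of the KL-constrained objective:
# pi*(y|x) = pi_ref(y|x) * exp(r(x, y) / beta) / Z(x)
unnormalized = pi_ref * np.exp(reward / beta)
Z = unnormalized.sum()
pi_star = unnormalized / Z

# Rearranged: beta * log(pi*/pi_ref) = r(x, y) - beta * log Z(x)
implied = beta * np.log(pi_star / pi_ref)
assert np.allclose(implied, reward - beta * np.log(Z))

# In a pairwise difference the beta * log Z(x) term cancels.
i, j = 1, 0
print(implied[i] - implied[j], reward[i] - reward[j])
```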

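This gives us the DPO loss function directly: replace the unknown optimal policy $\pi^*$ with the trainable policy $\pi_\theta$, and minimize the negative log-likelihood of the observed preferences over the dataset $\mathcal{D}$ of (prompt, chosen, rejected) triplets $(x, y_w, y_l)$:

$$\mathcal{L}_{\mathrm{DPO}}(\pi_\theta; \pi_{\mathrm{ref}}) = -\mathbb{E}_{(x, y_w, y_l) \sim \mathcal{D}}\left[\log \sigma\left(\beta \log \frac{\pi_\theta(y_w \mid x)}{\pi_{\mathrm{ref}}(y_w \mid x)} - \beta \log \frac{\pi_\theta(y_l \mid x)}{\pi_{\mathrm{ref}}(y_l \mid x)}\right)\right]$$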

The beauty of this result is that the loss depends only on the log-probabilities of the policy model and the reference model, both of which you already have. There is no reward model. There is no RL. You just need to:

- For each training example, compute the log-probability of the preferred response under both $\pi_\theta$ and $\pi_{\mathrm{ref}}$

- Do the same for the rejected response

- Compute the difference of the two log-ratios (chosen minus rejected) and scale it by β

- Apply a sigmoid and take the negative log-likelihood

This is a binary classification loss. The reference model acts as an anchor, preventing the policy from changing too drastically (serving the same role as the KL penalty in RLHF).

Intuition: What DPO Actually Does to the Model

The gradient of the DPO loss has an intuitive interpretation. For each preference pair, the gradient:

- Increases the probability of tokens in the preferred response

- Decreases the probability of tokens in the rejected response

- Weights these updates by how "wrong" the model currently is: pairs where the model already strongly prefers the correct response contribute less gradient, while pairs where the model disagrees with human preference contribute more
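The weighting in the last bullet falls directly out of the sigmoid. Writing the per-pair loss as -log σ(m), where m is the implicit reward margin (β times the difference of log-ratios), its derivative with respect to m is -σ(-m): close to -1 for a pair the model currently ranks the wrong way (m much less than 0), and close to 0 for a pair it already ranks correctly. A quick autograd check with made-up margins:

```python
import torch
import torch.nn.functional as F

# Implicit reward margins for three pairs: wrongly ranked, undecided, correctly ranked.
margin = torch.tensor([-3.0, 0.0, 3.0], requires_grad=True)
loss = -F.logsigmoid(margin).sum()
loss.backward()

# d/dm [-log sigmoid(m)] = -sigmoid(-m)
print(margin.grad)  # tensor([-0.9526, -0.5000, -0.0474])
assert torch.allclose(margin.grad, -torch.sigmoid(-margin.detach()))
```

The gradient with respect to each response's log-probability is this weight multiplied by +β (chosen) or -β (rejected), which is why confidently correct pairs barely move the model.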

This is essentially a contrastive learning objective applied at the sequence level. The model learns to distinguish between preferred and rejected completions, with the reference model providing a baseline that prevents the model from overfitting to superficial features of the preference data.

Preparing Preference Data

The quality of your preference data is the single biggest determinant of DPO success. The math is elegant, but it can only work with what you feed it.

Data Format

DPO requires triplets of (prompt, chosen_response, rejected_response). Each triplet represents a single preference judgment: for this prompt, a human (or AI judge) preferred the chosen response over the rejected response.

```python
"""Verify imports for the DPO hands-on."""
import importlib
import importlib.util

pkgs = [
    "torch", "numpy", "json", "dataclasses", "typing", "matplotlib",
    "sklearn", "transformers", "datasets", "evaluate", "trl", "peft",
]
missing = [p for p in pkgs if importlib.util.find_spec(p) is None]
if missing:
    raise ImportError(
        f"Missing: {missing}. pip install torch transformers datasets evaluate trl peft scikit-learn matplotlib"
    )

import torch
import trl
import transformers

print(f"torch {torch.__version__}")
print(f"transformers {transformers.__version__}")
print(f"trl {trl.__version__}")
print("Setup OK.")
```

Sources of Preference Data

There are several ways to obtain preference data, each with different cost-quality tradeoffs.

Human annotation is the gold standard but the most expensive. You generate two or more responses per prompt (typically by sampling from the SFT model at different temperatures), then have human annotators pick the better one. Costs range from $0.50 to $5.00 per comparison depending on task complexity. For production alignment work, you typically need 10,000 to 100,000 preference pairs, though smaller datasets (1,000-5,000) can work for narrow tasks.

AI feedback (RLAIF) uses a stronger model (like GPT-4 or Claude) as a judge instead of human annotators. This is dramatically cheaper and faster, and recent research suggests that AI judges agree with human preferences at rates comparable to inter-annotator agreement among humans. The main risk is that the judge model's biases get baked into your trained model.

Existing datasets provide a quick starting point. Popular open-source preference datasets include Anthropic's HH-RLHF, OpenAssistant's OASST, Stanford's SHP (Stanford Human Preferences), Intel's Orca DPO pairs, and UltraFeedback. These are valuable for experimentation but may not align with your specific use case.
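Some of these datasets need light preprocessing before they fit the triplet format. HH-RLHF, for example, stores `chosen` and `rejected` as complete dialogue transcripts (alternating `\n\nHuman:` and `\n\nAssistant:` turns) with no separate prompt field. A minimal sketch, assuming that transcript layout, treats the shared history up to the final `\n\nAssistant:` marker as the prompt; check the markers against the dataset version you actually download. The function name is illustrative, not part of any library.

```python
ASSISTANT_TAG = "\n\nAssistant:"


def split_transcript_pair(chosen: str, rejected: str) -> dict:
    """Turn two full transcripts sharing a dialogue history into a DPO triplet.

    Assumes both transcripts end with a final Assistant turn and are identical
    up to and including that turn's ASSISTANT_TAG marker.
    """
    idx = chosen.rfind(ASSISTANT_TAG)
    if idx == -1:
        raise ValueError("no Assistant turn found in chosen transcript")
    cut = idx + len(ASSISTANT_TAG)
    prompt = chosen[:cut]
    if not rejected.startswith(prompt):
        raise ValueError("chosen and rejected do not share the same dialogue history")
    return {"prompt": prompt, "chosen": chosen[cut:], "rejected": rejected[cut:]}


example = split_transcript_pair(
    "\n\nHuman: What is 2+2?\n\nAssistant: 4.",
    "\n\nHuman: What is 2+2?\n\nAssistant: Probably 5.",
)
print(repr(example["prompt"]), repr(example["chosen"]), repr(example["rejected"]))
```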

Synthetic preference generation creates both the responses and the preferences programmatically. You prompt a strong model to generate both a good and a bad response to each prompt, or you generate responses with different system prompts (one encouraging helpfulness, another encouraging vagueness) and treat the helpful response as chosen.

````python
"""Preference data format (dataclass + in-memory examples)."""
import json
from dataclasses import dataclass
from typing import Optional


@dataclass
class PreferencePair:
    prompt: str
    chosen: str
    rejected: str
    metadata: Optional[dict] = None


preference_data = [
    PreferencePair(
        prompt="Explain quantum entanglement to a 10-year-old.",
        chosen=(
            "Imagine you have two magic coins. You put one in your pocket "
            "and mail the other to your friend across the world. When you "
            "flip your coin and it lands on heads, your friend's coin will "
            "always land on tails, instantly, no matter how far apart they "
            "are. Nobody knows why this happens, but scientists have proven "
            "it's real! That's kind of what quantum entanglement is like."
        ),
        rejected=(
            "Quantum entanglement is a phenomenon in quantum mechanics where "
            "two particles become correlated such that the quantum state of "
            "one particle cannot be described independently of the other, "
            "even when separated by large distances. This is described by "
            "the EPR paradox and Bell's theorem."
        ),
        metadata={"reason": "chosen is age-appropriate, rejected is too technical"},
    ),
    PreferencePair(
        prompt="Write a Python function that checks if a number is prime.",
        chosen=(
            "```python\n"
            "def is_prime(n):\n"
            '    """Check if n is a prime number."""\n'
            "    if n < 2:\n"
            "        return False\n"
            "    if n < 4:\n"
            "        return True\n"
            "    if n % 2 == 0 or n % 3 == 0:\n"
            "        return False\n"
            "    i = 5\n"
            "    while i * i <= n:\n"
            "        if n % i == 0 or n % (i + 2) == 0:\n"
            "            return False\n"
            "        i += 6\n"
            "    return True\n"
            "```\n\n"
            "This function handles edge cases (numbers less than 2), checks "
            "divisibility by 2 and 3 first, then only checks potential factors "
            "of the form 6k +/- 1, which makes it efficient up to very large numbers."
        ),
        rejected=(
            "```python\n"
            "def is_prime(n):\n"
            "    for i in range(2, n):\n"
            "        if n % i == 0:\n"
            "            return False\n"
            "    return True\n"
            "```"
        ),
        metadata={
            "reason": "chosen is correct, efficient, and well-explained; "
            "rejected has bugs (returns True for 0 and 1) and is O(n)"
        },
    ),
]

print(f"Loaded {len(preference_data)} preference pairs.")
print(json.dumps(preference_data[0].metadata, indent=2))


def convert_to_dpo_format(pairs, chat_template: str = "chatml"):
    """Build dict rows with 'prompt', 'chosen', 'rejected' for TRL / trainers."""
    formatted = []
    for pair in pairs:
        if chat_template == "chatml":
            prompt_formatted = (
                f"<|im_start|>user\n{pair.prompt}<|im_end|>\n"
                f"<|im_start|>assistant\n"
            )
            chosen_formatted = f"{pair.chosen}<|im_end|>"
            rejected_formatted = f"{pair.rejected}<|im_end|>"
        elif chat_template == "llama":
            prompt_formatted = f"[INST] {pair.prompt} [/INST] "
            chosen_formatted = pair.chosen
            rejected_formatted = pair.rejected
        elif chat_template == "zephyr":
            prompt_formatted = f"<|user|>\n{pair.prompt}</s>\n<|assistant|>\n"
            chosen_formatted = f"{pair.chosen}</s>"
            rejected_formatted = f"{pair.rejected}</s>"
        else:
            prompt_formatted = pair.prompt
            chosen_formatted = pair.chosen
            rejected_formatted = pair.rejected
        formatted.append({
            "prompt": prompt_formatted,
            "chosen": chosen_formatted,
            "rejected": rejected_formatted,
        })
    return formatted


dpo_rows = convert_to_dpo_format(preference_data, chat_template="chatml")
print(f"Converted to {len(dpo_rows)} DPO rows (chatml).")
````

Data Quality Checks

Before training, validate your preference data. Bad data is the most common cause of DPO failure, and it manifests in subtle ways: the model becomes evasive, repetitive, or develops weird stylistic tics.

```python
"""PreferenceDataValidator on `dpo_rows` (built in the previous block)."""
import numpy as np
from collections import Counter
from difflib import SequenceMatcher


class PreferenceDataValidator:
    """Validate preference data quality before DPO training."""

    def __init__(self, pairs: list[dict]):
        self.pairs = pairs

    def check_length_statistics(self):
        chosen_lens = [len(p["chosen"].split()) for p in self.pairs]
        rejected_lens = [len(p["rejected"].split()) for p in self.pairs]
        chosen_mean = float(np.mean(chosen_lens))
        rejected_mean = float(np.mean(rejected_lens))
        length_ratio = chosen_mean / max(rejected_mean, 1)
        chosen_longer = sum(c > r for c, r in zip(chosen_lens, rejected_lens))
        chosen_longer_frac = chosen_longer / len(self.pairs)
        print("Length Analysis:")
        print(f" Chosen mean length: {chosen_mean:.1f} words")
        print(f" Rejected mean length: {rejected_mean:.1f} words")
        print(f" Length ratio: {length_ratio:.2f}x")
        print(f" Chosen is longer: {chosen_longer_frac:.1%} of pairs")
        if length_ratio > 1.5 or length_ratio < 0.67:
            print(" WARNING: Possible length bias.")
        return {
            "chosen_mean_length": chosen_mean,
            "rejected_mean_length": rejected_mean,
            "length_ratio": length_ratio,
            "chosen_longer_fraction": chosen_longer_frac,
        }

    def check_duplicates(self):
        prompts = [p["prompt"] for p in self.pairs]
        prompt_counts = Counter(prompts)
        duplicates = {k: v for k, v in prompt_counts.items() if v > 1}
        identical_pairs = sum(
            1 for p in self.pairs if p["chosen"].strip() == p["rejected"].strip()
        )
        print("\nDuplicate Analysis:")
        print(f" Unique prompts: {len(prompt_counts)} / {len(self.pairs)} total")
        print(f" Duplicate prompts: {len(duplicates)}")
        print(f" Identical chosen/rejected: {identical_pairs}")
        return {
            "unique_prompts": len(prompt_counts),
            "duplicate_prompts": len(duplicates),
            "identical_pairs": identical_pairs,
        }

    def check_response_overlap(self):
        similarities = []
        for p in self.pairs:
            ratio = SequenceMatcher(
                None, p["chosen"].lower(), p["rejected"].lower()
            ).ratio()
            similarities.append(ratio)
        similarities = np.array(similarities)
        print("\nResponse Similarity Analysis:")
        print(f" Mean similarity: {similarities.mean():.3f}")
        print(f" Median similarity: {float(np.median(similarities)):.3f}")
        print(f" Very similar (>0.9): {(similarities > 0.9).mean():.1%}")
        print(f" Very different (<0.1): {(similarities < 0.1).mean():.1%}")
        return {
            "mean_similarity": float(similarities.mean()),
            "very_similar_fraction": float((similarities > 0.9).mean()),
            "very_different_fraction": float((similarities < 0.1).mean()),
        }

    def check_empty_responses(self):
        empty_chosen = sum(1 for p in self.pairs if len(p["chosen"].strip()) < 10)
        empty_rejected = sum(1 for p in self.pairs if len(p["rejected"].strip()) < 10)
        print("\nEmpty/Short Response Analysis:")
        print(f" Short chosen (<10 chars): {empty_chosen}")
        print(f" Short rejected (<10 chars): {empty_rejected}")
        return {"empty_chosen": empty_chosen, "empty_rejected": empty_rejected}

    def full_report(self):
        print("=" * 60)
        print("PREFERENCE DATA VALIDATION REPORT")
        print(f"Total pairs: {len(self.pairs)}")
        print("=" * 60)
        results = {}
        results["length"] = self.check_length_statistics()
        results["duplicates"] = self.check_duplicates()
        results["overlap"] = self.check_response_overlap()
        results["empty"] = self.check_empty_responses()

        issues = []
        # Flag length bias in either direction, matching check_length_statistics
        ratio = results["length"]["length_ratio"]
        if ratio > 1.5 or ratio < 0.67:
            issues.append("length bias")
        if results["duplicates"]["identical_pairs"] > 0:
            issues.append("identical pairs")
        if results["overlap"]["very_similar_fraction"] > 0.2:
            issues.append("weak preference signal")

        print("\n" + "=" * 60)
        if issues:
            print(f"ISSUES FOUND: {', '.join(issues)}")
        else:
            print("NO MAJOR ISSUES FOUND. Data appears suitable for DPO.")
        print("=" * 60)
        return results


validator = PreferenceDataValidator(dpo_rows)
validator.full_report()
```

Implementing DPO from Scratch

Let's implement the DPO loss function from scratch before using the high-level libraries. Understanding the internals helps enormously when debugging training runs.

```python
"""
From-scratch DPO (two parts in one block):
  A) `compute_log_probs` + `dpo_loss` + tiny GPT-2 forward + scalar sanity check
  B) `TinyDPOTrainer`: one optimizer step (policy vs frozen copy of GPT-2)
Part B reuses the same `compute_log_probs` / `dpo_loss` as Part A (no duplicate math).
"""
import copy

import torch
import torch.nn.functional as F
from transformers import GPT2LMHeadModel, GPT2TokenizerFast


def compute_log_probs(model, input_ids, attention_mask, labels):
    """Sum of log p(token) over positions whose label is not -100 (the response)."""
    outputs = model(input_ids=input_ids, attention_mask=attention_mask)
    logits = outputs.logits
    # Token t is predicted from positions < t, so shift logits and labels by one
    shift_logits = logits[:, :-1, :].contiguous()
    shift_labels = labels[:, 1:].contiguous()
    shift_mask = shift_labels != -100
    log_probs = F.log_softmax(shift_logits, dim=-1)
    # -100 is not a valid vocab index: clamp it, then zero those positions via the mask
    gather_labels = shift_labels.clamp(min=0)
    per_token = log_probs.gather(dim=-1, index=gather_labels.unsqueeze(-1)).squeeze(-1)
    per_token = per_token * shift_mask.float()
    return per_token.sum(dim=-1)


def dpo_loss(
    policy_chosen_logps,
    policy_rejected_logps,
    reference_chosen_logps,
    reference_rejected_logps,
    beta: float = 0.1,
    label_smoothing: float = 0.0,
    loss_type: str = "sigmoid",
):
    # Log-ratios log(pi/pi_ref) for each response, and their unscaled difference
    chosen_logratios = policy_chosen_logps - reference_chosen_logps
    rejected_logratios = policy_rejected_logps - reference_rejected_logps
    logits = chosen_logratios - rejected_logratios

    # Implicit rewards are the beta-scaled log-ratios
    chosen_rewards = beta * chosen_logratios
    rejected_rewards = beta * rejected_logratios
    reward_margin = chosen_rewards - rejected_rewards  # equals beta * logits

    if loss_type == "sigmoid":
        if label_smoothing > 0:
            losses = (
                -F.logsigmoid(reward_margin) * (1 - label_smoothing)
                - F.logsigmoid(-reward_margin) * label_smoothing
            )
        else:
            losses = -F.logsigmoid(reward_margin)
    elif loss_type == "hinge":
        losses = F.relu(1 - reward_margin)
    elif loss_type == "ipo":
        # IPO regresses the *unscaled* log-ratio difference onto 1/(2*beta)
        losses = (logits - 1 / (2 * beta)) ** 2
    else:
        raise ValueError(f"unknown loss_type: {loss_type}")

    loss = losses.mean()
    with torch.no_grad():
        accuracy = (reward_margin > 0).float().mean()
    metrics = {
        "loss": float(loss.detach()),
        "accuracy": float(accuracy),
        "reward_margin": float(reward_margin.mean()),
    }
    return loss, metrics


# ----- Part A: log-probs on a real LM + closed-form DPO sanity check -----
tok = GPT2TokenizerFast.from_pretrained("gpt2")
tok.pad_token = tok.eos_token
policy = GPT2LMHeadModel.from_pretrained("gpt2")
policy.train()

prompt = "The answer is"
chosen = " four."
rejected = " five."
for text in [prompt + chosen, prompt + rejected]:
    enc = tok(text, return_tensors="pt", truncation=True, max_length=32)
    ids = enc["input_ids"]
    plen = len(tok(prompt, add_special_tokens=False)["input_ids"])
    labels = ids.clone()
    labels[:, :plen] = -100  # mask the prompt; score only the response
    logp = compute_log_probs(policy, ids, enc["attention_mask"], labels)
    print(text, "-> logp", float(logp))

pc = torch.tensor([-2.0], requires_grad=True)
pr = torch.tensor([-2.5], requires_grad=True)
rc = torch.tensor([-2.1])
rr = torch.tensor([-2.4])
loss_a, m_a = dpo_loss(pc, pr, rc, rr, beta=0.5)
loss_a.backward()
print("DPO loss (scalar test)", m_a)
assert m_a["accuracy"] == 1.0
print("Part A OK.")

# ----- Part B: one manual DPO train step -----
reference = copy.deepcopy(policy)
reference.eval()
for p in reference.parameters():
    p.requires_grad = False


class TinyDPOTrainer:
    def __init__(self, model, ref_model, tokenizer, beta=0.1, learning_rate=3e-5,
                 max_length=64, max_prompt_len=48):
        self.model = model
        self.ref_model = ref_model
        self.tokenizer = tokenizer
        self.beta = beta
        self.max_length = max_length
        self.max_prompt_len = max_prompt_len
        self.optimizer = torch.optim.AdamW(
            self.model.parameters(), lr=learning_rate, weight_decay=0.01
        )

    def tokenize_pair(self, prompt, chosen, rejected):
        p_ids = self.tokenizer(prompt, add_special_tokens=False, truncation=True,
                               max_length=self.max_prompt_len)["input_ids"]
        budget = self.max_length - len(p_ids)  # room left for the response
        c_ids = self.tokenizer(chosen, add_special_tokens=False, truncation=True,
                               max_length=budget)["input_ids"]
        r_ids = self.tokenizer(rejected, add_special_tokens=False, truncation=True,
                               max_length=budget)["input_ids"]

        def pack(prompt_ids, resp_ids):
            # prompt + response, right-padded; labels mask prompt and padding with -100
            seq = (prompt_ids + resp_ids)[: self.max_length]
            attn = [1] * len(seq)
            lab = seq.copy()
            lab[: len(prompt_ids)] = [-100] * len(prompt_ids)
            pad = self.max_length - len(seq)
            seq = seq + [self.tokenizer.pad_token_id] * pad
            attn = attn + [0] * pad
            lab = lab + [-100] * pad
            return (
                torch.tensor([seq], dtype=torch.long),
                torch.tensor([attn], dtype=torch.long),
                torch.tensor([lab], dtype=torch.long),
            )

        c_in, c_m, c_l = pack(p_ids, c_ids)
        r_in, r_m, r_l = pack(p_ids, r_ids)
        return {
            "chosen_input_ids": c_in,
            "chosen_attention_mask": c_m,
            "chosen_labels": c_l,
            "rejected_input_ids": r_in,
            "rejected_attention_mask": r_m,
            "rejected_labels": r_l,
        }

    def train_step(self, batch):
        self.model.train()
        dev = next(self.model.parameters()).device
        b = {k: v.to(dev) for k, v in batch.items()}
        pol_c = compute_log_probs(self.model, b["chosen_input_ids"],
                                  b["chosen_attention_mask"], b["chosen_labels"])
        pol_r = compute_log_probs(self.model, b["rejected_input_ids"],
                                  b["rejected_attention_mask"], b["rejected_labels"])
        with torch.no_grad():  # the reference is frozen: no autograd graph needed
            ref_c = compute_log_probs(self.ref_model, b["chosen_input_ids"],
                                      b["chosen_attention_mask"], b["chosen_labels"])
            ref_r = compute_log_probs(self.ref_model, b["rejected_input_ids"],
                                      b["rejected_attention_mask"], b["rejected_labels"])
        loss_b, metrics = dpo_loss(pol_c, pol_r, ref_c, ref_r, beta=self.beta)
        self.optimizer.zero_grad()
        loss_b.backward()
        torch.nn.utils.clip_grad_norm_(self.model.parameters(), 1.0)
        self.optimizer.step()
        return metrics


trainer = TinyDPOTrainer(policy, reference, tok, beta=0.2, learning_rate=5e-5)
batch = trainer.tokenize_pair(
    "Question: 2+2? Answer:",
    " The answer is 4.",
    " The answer is 99.",
)
metrics_b = trainer.train_step(batch)
print("One-step DPO metrics:", metrics_b)
assert "accuracy" in metrics_b
print("Part B OK: scratch DPO block complete.")
```

Training DPO with TRL (The Production Approach)

For production training, use Hugging Face's TRL (Transformer Reinforcement Learning) library. It handles tokenization, batching, distributed training, logging, and dozens of edge cases that the minimal implementation above doesn't cover.

```python
"""
Production-style DPO with TRL on a tiny model (CPU/MPS friendly).

The original notebook uses Mistral-7B + 4-bit + flash_attention_2 + wandb, which
requires a 24GB+ GPU. This block exercises the same TRL API on `distilgpt2` with LoRA.
TRL 1.2: use `processing_class=tokenizer` and `DPOConfig(max_length=...)` (no max_prompt_length).
"""
import torch
from datasets import Dataset
from peft import LoraConfig
from transformers import AutoModelForCausalLM, AutoTokenizer
from trl import DPOConfig, DPOTrainer

MODEL_ID = "distilgpt2"

rows = [
    {"prompt": "What is 2+2?", "chosen": "The answer is 4.", "rejected": "The answer is 5."},
    {"prompt": "Capital of France?", "chosen": "Paris.", "rejected": "London."},
    {"prompt": "Say hi.", "chosen": "Hello!", "rejected": "Bye."},
    {"prompt": "Color of sky?", "chosen": "Blue on a clear day.", "rejected": "Green."},
]
train_ds = Dataset.from_list(rows)

tok = AutoTokenizer.from_pretrained(MODEL_ID)
tok.pad_token = tok.eos_token
model = AutoModelForCausalLM.from_pretrained(MODEL_ID)

lora = LoraConfig(
    r=4,
    lora_alpha=8,
    lora_dropout=0.05,
    bias="none",
    task_type="CAUSAL_LM",
    target_modules=["c_attn", "c_proj"],
)

args = DPOConfig(
    output_dir="./dpo_trl_tiny_out",
    max_length=256,
    per_device_train_batch_size=1,
    max_steps=5,
    logging_steps=1,
    report_to="none",
    bf16=torch.cuda.is_available() and torch.cuda.is_bf16_supported(),
    fp16=torch.cuda.is_available() and not torch.cuda.is_bf16_supported(),
    beta=0.1,
    loss_type="sigmoid",
    dataloader_num_workers=0,
    learning_rate=5e-5,
)

trainer = DPOTrainer(
    model=model,
    ref_model=None,  # with LoRA, the adapter-free base model serves as the reference
    args=args,
    train_dataset=train_ds,
    processing_class=tok,
    peft_config=lora,
)
trainer.train()

last = trainer.state.log_history[-1]
print("TRL DPO finished. Last log entry keys:", list(last.keys())[:12])
print("train_loss:", last.get("train_loss", last))
assert trainer.state.global_step >= 5
print("TRL tiny DPO OK.")
```

Understanding TRL's DPO Implementation Details

Several aspects of TRL's DPOTrainer deserve attention because they affect training behavior in non-obvious ways.

When you pass a PEFT model (LoRA) and set ref_model=None, TRL uses the frozen base model (without LoRA adapters) as the reference. This is memory-efficient because you don't need a separate copy of the full model. The implicit assumption is that the LoRA update starts at zero: LoRA's B matrix is initialized to zeros by default, so the low-rank product BA contributes nothing at the start of training, and the policy and reference produce identical outputs. This is the correct behavior.
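Why policy and reference coincide at step 0 can be seen with a hand-written LoRA layer. This is a simplified stand-in written for illustration, not PEFT's actual implementation: the layer adds (α/r)·B·A·x to a frozen base projection, and with B initialized to zeros that term vanishes whatever A is. Turning the adapter off, which is essentially what using the adapter-free base model as the reference amounts to, gives the same output until B receives its first gradient update.

```python
import torch
import torch.nn as nn


class LoRALinear(nn.Module):
    """Frozen linear layer plus a low-rank update (alpha / r) * B @ A (illustrative)."""

    def __init__(self, in_features: int, out_features: int, r: int = 4, alpha: int = 8):
        super().__init__()
        self.base = nn.Linear(in_features, out_features)
        self.base.requires_grad_(False)                            # base weights frozen
        self.lora_A = nn.Parameter(torch.randn(r, in_features) * 0.01)
        self.lora_B = nn.Parameter(torch.zeros(out_features, r))   # zero init
        self.scaling = alpha / r
        self.adapter_enabled = True

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        out = self.base(x)
        if self.adapter_enabled:
            out = out + self.scaling * (x @ self.lora_A.T @ self.lora_B.T)
        return out


torch.manual_seed(0)
layer = LoRALinear(16, 8)
x = torch.randn(2, 16)

policy_out = layer(x)
layer.adapter_enabled = False   # "reference" = base model without the adapter
reference_out = layer(x)
layer.adapter_enabled = True

print(torch.allclose(policy_out, reference_out))  # True while lora_B is all zeros
```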

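If you're not using PEFT and want to fine-tune the full model, you need a separate, frozen reference model (ref_model). This means holding a second full copy of the model weights in memory. The reference needs no gradients or optimizer state, so it costs much less than the policy being trained, but it comes on top of the already large footprint of full fine-tuning. For 7B models, this typically requires at least 2x 80GB GPUs or heavy use of offloading.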
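A back-of-the-envelope estimate shows where a figure like that comes from. Assuming bf16 weights and gradients with standard mixed-precision AdamW (an fp32 master copy of the weights plus two fp32 moment buffers), the trainable policy costs about 16 bytes per parameter, while a frozen bf16 reference costs 2. Activations, temporary buffers, and framework overhead are ignored, so real usage is higher; the byte counts are assumptions, and setups such as ZeRO sharding or 8-bit optimizers change them.

```python
def full_dpo_memory_gb(n_params: float) -> dict:
    """Rough memory (GB) for full-parameter DPO with a separate reference model.

    Assumed bytes per parameter (mixed-precision AdamW):
      policy:    2 (bf16 weights) + 2 (bf16 grads) + 4 (fp32 master) + 8 (two fp32 Adam moments)
      reference: 2 (bf16 weights only; no grads or optimizer state)
    Activations and overhead are NOT included.
    """
    gb = 1e9
    policy = n_params * (2 + 2 + 4 + 8) / gb
    reference = n_params * 2 / gb
    return {"policy": policy, "reference": reference, "total": policy + reference}


print(full_dpo_memory_gb(7e9))
# {'policy': 112.0, 'reference': 14.0, 'total': 126.0}
```

Under these assumptions a 7B run exceeds a single 80GB GPU before any activations are counted, while the reference itself adds only about 14GB.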

TRL handles the chat template formatting automatically if your dataset uses the conversation format (list of message dicts). Make sure your tokenizer's chat template matches the format your SFT model was trained with. Mismatched templates are a common source of poor results because the model ends up seeing token patterns it wasn't trained on during SFT.
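For reference, a conversational preference example looks roughly like the following: `prompt`, `chosen`, and `rejected` are each a list of `{"role", "content"}` message dicts, which the tokenizer's chat template renders into text. The exact schema TRL accepts depends on its version, so treat this as a sketch and check the dataset-format section of the TRL documentation. `check_conversational_pair` is a hypothetical helper, not part of TRL, and its rule that the prompt ends on a user turn is an assumption suited to single-turn chat data.

```python
conversational_example = {
    "prompt": [{"role": "user", "content": "What color is the sky?"}],
    "chosen": [{"role": "assistant", "content": "It is blue on a clear day."}],
    "rejected": [{"role": "assistant", "content": "Green."}],
}


def check_conversational_pair(example: dict) -> None:
    """Hypothetical helper: basic structural checks on one conversational example."""
    for key in ("prompt", "chosen", "rejected"):
        messages = example.get(key)
        if not isinstance(messages, list) or not messages:
            raise ValueError(f"{key!r} must be a non-empty list of messages")
        for message in messages:
            if set(message) != {"role", "content"}:
                raise ValueError(f"unexpected keys in {key!r}: {sorted(message)}")
    # Assumed conventions: the prompt ends on a user turn; completions are assistant turns.
    if example["prompt"][-1]["role"] != "user":
        raise ValueError("prompt should end with a user turn")
    for key in ("chosen", "rejected"):
        if any(m["role"] != "assistant" for m in example[key]):
            raise ValueError(f"{key!r} should contain only assistant turns")


check_conversational_pair(conversational_example)
print("conversational example OK")
```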

Monitoring DPO Training: What to Watch

DPO training metrics are different from standard fine-tuning metrics, and knowing what to look for can save you from wasting days on bad runs.

```python
"""Plot + diagnose DPO-style metrics from a synthetic log history."""
from pathlib import Path

import numpy as np
import matplotlib

matplotlib.use("Agg")
import matplotlib.pyplot as plt


def plot_dpo_training_metrics(log_history: list[dict], save_path: str = "output/dpo_metrics.png"):
    losses = [e["loss"] for e in log_history if "loss" in e]
    steps = list(range(len(losses)))
    reward_margins = [e.get("rewards/margins") for e in log_history if "rewards/margins" in e]
    chosen_r = [e.get("rewards/chosen") for e in log_history if "rewards/chosen" in e]
    rejected_r = [e.get("rewards/rejected") for e in log_history if "rewards/rejected" in e]
    acc = [e.get("rewards/accuracies") for e in log_history if "rewards/accuracies" in e]

    fig, axes = plt.subplots(2, 2, figsize=(12, 9))
    axes[0, 0].plot(steps, losses, color="blue")
    axes[0, 0].set_title("DPO Loss")
    axes[0, 0].axhline(y=np.log(2), color="red", linestyle="--", label="ln(2) random-ish")
    axes[0, 0].legend()
    if reward_margins:
        axes[0, 1].plot(reward_margins, color="green")
        axes[0, 1].set_title("Reward margin")
        axes[0, 1].axhline(0, color="red", linestyle="--")
    if chosen_r and rejected_r:
        m = min(len(chosen_r), len(rejected_r))
        axes[1, 0].plot(chosen_r[:m], label="chosen")
        axes[1, 0].plot(rejected_r[:m], label="rejected")
        axes[1, 0].set_title("Implicit rewards")
        axes[1, 0].legend()
    if acc:
        axes[1, 1].plot(acc, color="purple")
        axes[1, 1].set_title("Reward accuracy")
        axes[1, 1].set_ylim(0, 1)
        axes[1, 1].axhline(0.5, color="red", linestyle="--")
    plt.tight_layout()
    Path(save_path).parent.mkdir(parents=True, exist_ok=True)
    plt.savefig(save_path, dpi=120, bbox_inches="tight")
    plt.close(fig)
    print("Saved", save_path)


def diagnose_training_issues(log_history: list[dict]):
    losses = [e["loss"] for e in log_history if "loss" in e]
    if not losses:
        print("No losses")
        return
    first = float(np.mean(losses[: max(1, len(losses) // 4)]))
    last = float(np.mean(losses[-max(1, len(losses) // 4):]))
    print(f"Loss {first:.4f} -> {last:.4f} (delta {last - first:+.4f})")
    print("Initial loss ~ln(2):", float(np.log(2)))


# Synthetic log similar to TRL keys (floats, not strings)
synth = []
for t in range(20):
    synth.append({
        "loss": float(0.7 - 0.01 * t + 0.005 * np.sin(t)),
        "rewards/margins": float(-0.05 + 0.02 * t),
        "rewards/chosen": float(-0.1 + 0.015 * t),
        "rewards/rejected": float(-0.2 + 0.005 * t),
        "rewards/accuracies": float(0.52 + 0.02 * t),
    })

plot_dpo_training_metrics(synth)
diagnose_training_issues(synth)
print("Monitoring cell OK.")
```

Key Metrics Explained

Loss should start near ln(2) ≈ 0.693. This is the loss you get when the policy is identical to the reference (the log-ratio is zero for both chosen and rejected, so the sigmoid is 0.5, and -log(0.5) = ln(2)). If your initial loss is significantly different, something is wrong with your reference model setup.
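The arithmetic is easy to confirm: with identical policy and reference log-probabilities, both log-ratios are zero, the margin is zero, and -log σ(0) = ln 2 for every pair, whatever the value of β. The log-probabilities below are arbitrary.

```python
import math

import torch
import torch.nn.functional as F

beta = 0.1
# Policy == reference, so every log-ratio is exactly zero.
policy_chosen = ref_chosen = torch.tensor([-12.3, -40.1])
policy_rejected = ref_rejected = torch.tensor([-15.0, -38.7])

margin = beta * ((policy_chosen - ref_chosen) - (policy_rejected - ref_rejected))
loss = -F.logsigmoid(margin).mean()
print(loss.item(), math.log(2))  # both approximately 0.6931
```

In practice, small deviations from ln 2 on the very first step can come from dropout being active in the policy but not in the reference.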

Reward accuracy measures the fraction of preference pairs where the model assigns a higher implicit reward to the chosen response. It should increase during training, typically reaching 0.65-0.85 for well-behaved runs. Accuracy above 0.95 often indicates overfitting. Accuracy below 0.55 after significant training indicates the model isn't learning.

Reward margin (chosen reward minus rejected reward) should increase over training. Watch both components individually: healthy training shows the chosen reward increasing while the rejected reward stays flat or decreases. If both rewards drift in the same direction, the model is changing its overall behavior rather than learning to discriminate.

Chosen and rejected rewards diverging rapidly early in training is a warning sign. The model may be memorizing the training set rather than learning generalizable preferences. This manifests as high training accuracy but poor eval accuracy and poor generation quality.
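These rules of thumb can be turned into an automated check over a trainer's log history. A minimal sketch, assuming TRL-style keys (`rewards/accuracies`, `rewards/chosen`, `rewards/rejected`); `eval_rewards/accuracies` is an assumed name for the eval-side accuracy and may differ in your setup. The 0.95 and 0.55 thresholds come from the text above and are heuristics, and the 0.15 train/eval gap is an arbitrary illustrative choice.

```python
import numpy as np


def flag_dpo_warnings(log_history: list[dict], window: int = 5) -> list[str]:
    """Heuristic checks on DPO metrics; thresholds are rules of thumb, not limits."""
    def series(key):
        return [e[key] for e in log_history if key in e]

    warnings = []
    acc = series("rewards/accuracies")
    if acc:
        recent = float(np.mean(acc[-window:]))
        if recent > 0.95:
            warnings.append(f"train accuracy {recent:.2f} > 0.95: possible overfitting")
        elif len(acc) >= 2 * window and recent < 0.55:
            warnings.append(f"train accuracy {recent:.2f} < 0.55: model may not be learning")

    chosen, rejected = series("rewards/chosen"), series("rewards/rejected")
    if len(chosen) >= 2 and len(rejected) >= 2:
        d_chosen, d_rejected = chosen[-1] - chosen[0], rejected[-1] - rejected[0]
        if d_chosen < 0 and d_rejected < 0:
            warnings.append("chosen and rejected rewards both falling")
        elif d_chosen > 0 and d_rejected > 0:
            warnings.append("chosen and rejected rewards both rising")

    eval_acc = series("eval_rewards/accuracies")  # assumed key name
    if acc and eval_acc and acc[-1] - eval_acc[-1] > 0.15:
        warnings.append("large train/eval accuracy gap: possible memorization")
    return warnings


history = [
    {"rewards/accuracies": 0.5 + 0.05 * t, "rewards/chosen": -0.1 * t, "rewards/rejected": -0.3 * t}
    for t in range(10)
]
print(flag_dpo_warnings(history))
```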

The Beta Parameter: The Most Important Hyperparameter

The β parameter in DPO controls how much the policy is allowed to deviate from the reference model. It is the single most impactful hyperparameter you will tune, and getting it wrong is the most common cause of DPO failure.

High beta (0.3-0.5+) means the KL constraint is tight. The model is penalized heavily for deviating from the reference, so it makes small, conservative updates. Training is stable but the model may not learn strong preferences. Use high beta when your preference data is noisy, when you want to preserve the reference model's behavior closely, or when you're fine-tuning a model that's already quite good.

Low beta (0.01-0.05) means the KL constraint is loose. The model is free to deviate significantly from the reference, learning strong preferences but risking instability. Low beta can cause the model to overfit to surface features (like response length) or to degenerate (producing repetitive or incoherent text). Use low beta only when your preference data is very high quality and you want aggressive behavior change.

Medium beta (0.1-0.2) is the typical starting point for most applications.

```python
from trl import DPOConfig


def beta_sweep_analysis(
    model_name,
    dataset,
    betas=(0.01, 0.05, 0.1, 0.2, 0.5),
    eval_prompts=None,
):
    """
    Run DPO training at multiple beta values to find the optimal setting.
    This is the single most important hyperparameter search you'll do.
    """
    results = {}
    for beta in betas:
        print(f"\n{'=' * 50}")
        print(f"Training with beta = {beta}")
        print(f"{'=' * 50}")

        # In practice, you'd run full training for each beta.
        # Here we sketch the structure.
        config = DPOConfig(
            output_dir=f"./dpo_beta_{beta}",
            beta=beta,
            num_train_epochs=1,
            per_device_train_batch_size=4,
            gradient_accumulation_steps=4,
            learning_rate=5e-7,
            # ... other args
        )
        # trainer = DPOTrainer(model=..., args=config, ...)
        # trainer.train()

        # Evaluate: compute both automatic metrics and generation quality
        # (evaluate_model is a placeholder for your own evaluation routine)
        # eval_results = evaluate_model(trainer.model, eval_prompts)
        # results[beta] = eval_results

    # Plot results
    # The optimal beta is typically the one that:
    # 1. Has reasonable eval loss (not too low / not too high)
    # 2. Produces the best generation quality (judged by humans or AI)
    # 3. Maintains coherent, non-repetitive outputs
    return results
```
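One way to see why the β ranges above behave so differently: the loss on a pair depends only on β·d, where d is the unscaled log-ratio difference (how much more the policy has upweighted the chosen response than the rejected one, relative to the reference). To drive a pair's loss down to a fixed level, the required d therefore scales as 1/β, so a small β lets, and pushes, the policy move much further from the reference. A small sketch inverting the sigmoid; the 0.1 target loss is an arbitrary illustrative value.

```python
import math


def logratio_gap_for_loss(target_loss: float, beta: float) -> float:
    """Log-ratio difference d such that -log(sigmoid(beta * d)) == target_loss."""
    p = math.exp(-target_loss)             # required value of sigmoid(beta * d)
    return math.log(p / (1 - p)) / beta    # invert the sigmoid, then undo the beta scaling


for beta in (0.01, 0.05, 0.1, 0.2, 0.5):
    print(f"beta={beta:<5} d needed for loss 0.1: {logratio_gap_for_loss(0.1, beta):7.1f} nats")
```

Since d is summed over response tokens, a run at β = 0.01 has to shift sequence log-probabilities by hundreds of nats to reach the same loss that β = 0.5 reaches with a few nats, which is one way to read the instability warning for low β.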

DPO Variants: Beyond Vanilla DPO

The original DPO paper spawned a family of related algorithms, each addressing a specific limitation. Here are the ones that matter in practice.

IPO (Identity Preference Optimization)

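IPO (Azar et al., 2023) replaces the logistic loss with a squared loss. The motivation is that DPO's logistic loss implicitly assumes the Bradley-Terry preference model, which may not hold for real human preferences. When preferences are (near-)deterministic, the logistic loss keeps pushing the reward margin toward infinity, and the KL regularization effectively stops working. IPO instead regresses the margin toward a finite target. It makes weaker assumptions and is more robust to overfitting and noisy labels.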

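```python
def ipo_loss(chosen_rewards, rejected_rewards, beta=0.1):
    """
    IPO loss: (reward_margin - 1/(2*beta))^2

    chosen_rewards / rejected_rewards are the *unscaled* log-ratios
    log pi(y|x) - log pi_ref(y|x) (tensors, one entry per pair).
    Do not pre-multiply them by beta; beta enters only through the target.
    The target margin is 1/(2*beta), not infinity. This prevents the model
    from becoming overconfident.
    """
    margin = chosen_rewards - rejected_rewards
    target = 1.0 / (2.0 * beta)
    return ((margin - target) ** 2).mean()
```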

IPO tends to produce more conservative models. It's a good choice when you suspect your preference data contains significant noise or when the annotators themselves had low agreement.
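To see why the finite target matters, the sketch below evaluates both losses for one pair as a function of the unscaled log-ratio margin h. It uses beta = 0.1, so IPO's target is 1/(2 * 0.1) = 5, and the scalar helper names are hypothetical. The DPO loss keeps decreasing as h grows, so it always rewards a larger margin. The IPO loss is zero at h = 5 and penalizes overshooting the target as much as undershooting it.

```python
import math


def dpo_loss_scalar(h: float, beta: float) -> float:
    # DPO: -log sigmoid(beta * h), written as log(1 + exp(-beta * h))
    return math.log1p(math.exp(-beta * h))


def ipo_loss_scalar(h: float, beta: float) -> float:
    # IPO: squared distance from the finite target margin 1 / (2 * beta)
    return (h - 1.0 / (2.0 * beta)) ** 2


beta = 0.1
margins = [0.0, 5.0, 10.0, 20.0, 40.0]
for h in margins:
    print(f"h={h:5.1f}   DPO={dpo_loss_scalar(h, beta):.4f}   IPO={ipo_loss_scalar(h, beta):8.2f}")
```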

KTO (Kahneman-Tversky Optimization)

KTO (Ethayarajh et al., 2024) is notable because it doesn't require paired preference data at all. Instead of needing (prompt, chosen, rejected) triplets, KTO works with unpaired data: individual (prompt, response) examples labeled as either "good" or "bad." This dramatically simplifies data collection because annotators don't need to compare two responses; they just evaluate one.

```python
import torch


def kto_loss(
    policy_logps,     # log probs under policy
    reference_logps,  # log probs under reference
    is_desirable,     # bool tensor: True for good responses, False for bad
    beta=0.1,
    desirable_weight=1.0,
    undesirable_weight=1.0,
):
    """
    KTO loss function.
    For desirable (good) outputs: loss = 1 - sigmoid(beta * (logr - KL_ref))
    For undesirable (bad) outputs: loss = 1 - sigmoid(beta * (KL_ref - logr))
    where logr = log(policy/reference) and KL_ref is the estimated KL divergence.
    """
    log_ratio = policy_logps - reference_logps

    # Estimate the KL divergence from the reference using the batch mean.
    # This is simplified; the KTO paper estimates it from mismatched
    # prompt/response pairs. Detached so no gradient flows through it,
    # and clamped at 0 because a KL divergence cannot be negative.
    kl_estimate = log_ratio.mean().detach().clamp(min=0)

    desirable_mask = is_desirable.float()
    undesirable_mask = (~is_desirable).float()

    # Desirable loss: want high log-ratio
    desirable_loss = (
        1 - torch.sigmoid(beta * (log_ratio - kl_estimate))
    ) * desirable_mask * desirable_weight

    # Undesirable loss: want low log-ratio
    undesirable_loss = (
        1 - torch.sigmoid(beta * (kl_estimate - log_ratio))
    ) * undesirable_mask * undesirable_weight

    # Average over all examples in the batch
    loss = (desirable_loss.sum() + undesirable_loss.sum()) / (
        desirable_mask.sum() + undesirable_mask.sum()
    )
    return loss
```

KTO is particularly useful when you have asymmetric data: for example, lots of "bad" examples from a weak model but only a few "good" examples from human writers. It also maps more naturally to the thumbs-up/thumbs-down feedback that you might collect from users in production.
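When the two classes are imbalanced, the `desirable_weight` and `undesirable_weight` arguments of `kto_loss` above are how you compensate. The KTO paper suggests choosing them so that (desirable_weight × n_desirable) / (undesirable_weight × n_undesirable) lies roughly between 1 and 4/3. Below is a minimal helper with a hypothetical name. It holds `undesirable_weight` at 1.0 and solves for `desirable_weight`.

```python
def kto_class_weights(n_desirable: int, n_undesirable: int, target_ratio: float = 1.0):
    """Return (desirable_weight, undesirable_weight) such that
    desirable_weight * n_desirable / (undesirable_weight * n_undesirable) == target_ratio.
    """
    if not 1.0 <= target_ratio <= 4.0 / 3.0:
        raise ValueError("target_ratio outside the [1, 4/3] range suggested for KTO")
    if n_desirable <= 0 or n_undesirable <= 0:
        raise ValueError("need at least one desirable and one undesirable example")
    undesirable_weight = 1.0
    # Upweight whichever side is rarer by scaling the desirable weight
    desirable_weight = target_ratio * n_undesirable / n_desirable
    return desirable_weight, undesirable_weight
```

For example, 1,000 good and 9,000 bad examples at a target ratio of 1 give `desirable_weight = 9.0`.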

ORPO (Odds Ratio Preference Optimization)

ORPO (Hong et al., 2024) eliminates the need for a reference model entirely. It combines the SFT objective with a preference objective into a single training stage, using the odds ratio of chosen versus rejected responses as the contrastive signal.

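```python
import torch
import torch.nn.functional as F


def orpo_loss(
    policy_chosen_logps,    # mean per-token log-prob of chosen response (< 0)
    policy_rejected_logps,  # mean per-token log-prob of rejected response (< 0)
    chosen_nll_loss,        # standard cross-entropy on chosen response
    lambda_weight=0.1,
):
    """
    ORPO loss = SFT loss + lambda * odds ratio loss
    No reference model needed! The SFT component anchors the model, and
    the odds ratio component teaches preferences.
    odds(y|x) = P(y|x) / (1 - P(y|x)), where P(y|x) is the length-normalized
    (per-token average) likelihood. This is not the same as the plain
    probability ratio P(y_w|x) / P(y_l|x).
    """
    # log odds(y) = log P - log(1 - P) = logp - log1p(-exp(logp))
    # log odds ratio = log odds(chosen) - log odds(rejected)
    log_odds = (policy_chosen_logps - policy_rejected_logps) - (
        torch.log1p(-torch.exp(policy_chosen_logps))
        - torch.log1p(-torch.exp(policy_rejected_logps))
    )

    # Odds ratio loss: -log sigmoid(log odds ratio)
    or_loss = -F.logsigmoid(log_odds).mean()

    # Combined loss
    total_loss = chosen_nll_loss + lambda_weight * or_loss
    return total_loss
```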

ORPO is appealing for smaller teams because it skips the SFT stage entirely (the SFT objective is built into the ORPO loss) and doesn't require storing a reference model. The downside is less control: you can't separately tune SFT and alignment behavior.

SimPO (Simple Preference Optimization)

SimPO (Meng et al., 2024) modifies DPO in two ways. First, it uses the average per-token log-probability of a response as the implicit reward, rather than the total log-ratio against a reference model, so no reference model is needed. Second, it adds a target reward margin gamma to the loss. The length normalization addresses DPO's known bias toward longer sequences.

```python
import torch.nn.functional as F


def simpo_loss(
    policy_chosen_logps,    # summed log-prob of chosen response
    policy_rejected_logps,  # summed log-prob of rejected response
    chosen_length,          # number of tokens in chosen response
    rejected_length,        # number of tokens in rejected response
    beta=2.0,
    gamma=0.5,  # target reward margin
):
    """
    SimPO: length-normalized implicit reward without a reference model.
    reward(y) = (beta/|y|) * sum_t log p(y_t | y_<t, x)
    loss = -log sigmoid(reward(y_w) - reward(y_l) - gamma)
    """
    # Length-normalized (per-token average) log-probs
    chosen_avg = policy_chosen_logps / chosen_length
    rejected_avg = policy_rejected_logps / rejected_length

    # Loss with target margin gamma
    margin = chosen_avg - rejected_avg
    loss = -F.logsigmoid(beta * margin - gamma).mean()
    return loss
```

Evaluation: How to Know if DPO Worked

Training loss going down doesn't mean DPO worked. The model might be overfitting to surface patterns. Proper evaluation requires multiple complementary approaches.
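One cheap complement to generation-based evaluation is to track DPO's implicit reward on a held-out set of preference pairs: r(x, y) = beta * (log pi(y|x) - log pi_ref(y|x)). The sketch below assumes you have already computed summed per-sequence log-probabilities under the policy and the reference. The function name and input layout are illustrative. Reward accuracy tells you how often the policy ranks the held-out chosen response above the rejected one. Watch the mean chosen reward separately: a negative value means the policy is lowering the likelihood of preferred responses too, just less than it lowers rejected ones.

```python
def implicit_reward_stats(policy_chosen, policy_rejected, ref_chosen, ref_rejected, beta=0.1):
    """Held-out DPO diagnostics from summed per-sequence log-probs (one float per pair).

    Implicit reward: r(x, y) = beta * (log pi(y|x) - log pi_ref(y|x)).
    """
    chosen_r = [beta * (p - r) for p, r in zip(policy_chosen, ref_chosen)]
    rejected_r = [beta * (p - r) for p, r in zip(policy_rejected, ref_rejected)]
    margins = [c - r for c, r in zip(chosen_r, rejected_r)]
    n = len(margins)
    return {
        # Fraction of pairs where the policy ranks the chosen response higher
        "reward_accuracy": sum(m > 0 for m in margins) / n,
        "mean_margin": sum(margins) / n,
        # Negative: chosen responses are less likely under the policy than the reference
        "mean_chosen_reward": sum(chosen_r) / n,
        "mean_rejected_reward": sum(rejected_r) / n,
    }
```

Held-out reward accuracy that stays near 0.5 while the training loss falls is consistent with the surface-pattern overfitting described above.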

Automatic Evaluation with Language Model Judges

```python
"""LLM-judge prompt helper + DPOEvaluator, exercised with a mock judge."""
import json

import torch
from transformers import GPT2LMHeadModel, GPT2TokenizerFast


def create_judge_prompt(prompt, response_a, response_b, criteria=None):
    if criteria is None:
        criteria = [
            "Helpfulness: Does the response address the user's question?",
            "Accuracy: Is the information correct?",
            "Clarity: Is the response easy to understand?",
        ]
    criteria_str = "\n".join(f"- {c}" for c in criteria)
    return f"""You are an impartial judge evaluating two AI responses.

USER PROMPT:
{prompt}

RESPONSE A:
{response_a}

RESPONSE B:
{response_b}

EVALUATION CRITERIA:
{criteria_str}

Output JSON with keys: reasoning, verdict ("A"/"B"/"tie"), confidence.
"""


class DPOEvaluator:
    def __init__(self, dpo_model, sft_model, tokenizer, judge_fn=None):
        self.dpo_model = dpo_model
        self.sft_model = sft_model
        self.tokenizer = tokenizer
        self.judge_fn = judge_fn

    def generate_response(self, model, prompt, max_new_tokens=40, temperature=0.9):
        inputs = self.tokenizer(prompt, return_tensors="pt").to(model.device)
        with torch.no_grad():
            out = model.generate(
                **inputs,
                max_new_tokens=max_new_tokens,
                temperature=temperature,
                do_sample=True,
                top_p=0.9,
                pad_token_id=self.tokenizer.pad_token_id,
            )
        # Decode only the newly generated tokens, not the prompt
        return self.tokenizer.decode(
            out[0][inputs["input_ids"].shape[1]:], skip_special_tokens=True
        )

    def pairwise_evaluation(self, eval_prompts, num_samples=4):
        results = {"dpo_wins": 0, "sft_wins": 0, "ties": 0, "details": []}
        for prompt in eval_prompts[:num_samples]:
            dpo_response = self.generate_response(self.dpo_model, prompt)
            sft_response = self.generate_response(self.sft_model, prompt)

            # Judge twice with the order swapped to cancel out position bias
            judgments = []
            for swap in (False, True):
                a, b = (sft_response, dpo_response) if swap else (dpo_response, sft_response)
                judge_prompt = create_judge_prompt(prompt, a, b)
                if self.judge_fn:
                    raw = self.judge_fn(judge_prompt)
                    try:
                        judgment = json.loads(raw)
                    except json.JSONDecodeError:
                        judgment = {"verdict": "tie", "reasoning": "parse error"}
                else:
                    judgment = {"verdict": "tie", "reasoning": "no judge"}
                v = judgment.get("verdict", "tie")
                mapping = {"A": "sft", "B": "dpo"} if swap else {"A": "dpo", "B": "sft"}
                judgments.append(mapping.get(v, "tie"))

            # Count a win only if both orderings agree; otherwise call it a tie
            final = judgments[0] if judgments[0] == judgments[1] else "tie"
            key = {"dpo": "dpo_wins", "sft": "sft_wins"}.get(final, "ties")
            results[key] += 1
            results["details"].append({"prompt": prompt[:40], "verdict": final})

        print("Pairwise (mock judge):", {k: v for k, v in results.items() if k != "details"})
        return results


def mock_judge(prompt):
    # Deterministic: prefer whichever response is longer (demo only)
    if "RESPONSE A:" in prompt and "RESPONSE B:" in prompt:
        a = prompt.split("RESPONSE A:")[1].split("RESPONSE B:")[0]
        b = prompt.split("RESPONSE B:")[1].split("EVALUATION")[0]
        verdict = "A" if len(a) >= len(b) else "B"
        return json.dumps({"verdict": verdict, "reasoning": "length proxy", "confidence": "low"})
    return json.dumps({"verdict": "tie", "reasoning": "fallback", "confidence": "low"})


tok = GPT2TokenizerFast.from_pretrained("gpt2")
tok.pad_token = tok.eos_token
m1 = GPT2LMHeadModel.from_pretrained("gpt2")  # stand-in for the DPO model
m2 = GPT2LMHeadModel.from_pretrained("gpt2")  # stand-in for the SFT model
evalr = DPOEvaluator(m1, m2, tok, judge_fn=mock_judge)
evalr.pairwise_evaluation(["What is Python?", "Say one fact about the moon."])
print("Judge / evaluator OK.")
```

The Alignment Tax

DPO training (like any alignment method) can degrade the model's raw capabilities. This is known as the "alignment tax." The model may become more helpful and harmless but slightly worse at math, coding, or factual recall. Always compare pre- and post-DPO benchmark scores to quantify this tax.

Common patterns: TruthfulQA scores typically improve after alignment (the model becomes less likely to produce confident-sounding falsehoods). MMLU and coding benchmarks may show slight regression (1-3%). If you see larger drops (>5%), your training may be too aggressive. Try increasing beta, reducing the learning rate, or training for fewer steps.
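Below is a minimal way to make the pre/post comparison routine. It assumes you already have benchmark scores on a 0-100 scale from your evaluation harness, and the function name is hypothetical. The default threshold reads ">5%" as 5 absolute percentage points, which is an assumption.

```python
def alignment_tax_report(before: dict, after: dict, max_drop: float = 5.0):
    """Compare benchmark scores (0-100 scale) before and after DPO.

    Returns ({benchmark: after - before}, [benchmarks that dropped by more than
    max_drop points]). Benchmarks missing from either dict are skipped.
    """
    deltas = {name: after[name] - before[name] for name in before if name in after}
    flagged = sorted(name for name, d in deltas.items() if d < -max_drop)
    for name, d in sorted(deltas.items()):
        status = "REGRESSION" if name in flagged else "ok"
        print(f"{name:<12} {before[name]:6.1f} -> {after[name]:6.1f}  ({d:+.1f})  {status}")
    return deltas, flagged
```

If anything is flagged, apply the adjustments above: raise beta, lower the learning rate, or train for fewer steps.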

Practical Recipes

Recipe 1: Aligning a Small Model with AI Feedback

This is the most common practical scenario: you have a 7B model (Mistral, Llama, etc.) that has been instruction-tuned (SFT), and you want to improve its response quality using DPO with AI-generated preferences.

```python
"""
Recipe 1: runnable mini pipeline.
Treat `distilgpt2` as the SFT model -> generate two candidates per prompt ->
heuristic AI judge -> similarity filter -> short TRL DPO run.
Uses CPU/MPS-friendly sizes (no API keys required). Swap `distilgpt2` for your
real SFT checkpoint and plug in a real judge (OpenAI / Claude) in `judge_pair`.
"""
import json
import random
from difflib import SequenceMatcher

import torch
from datasets import Dataset
from peft import LoraConfig
from transformers import AutoModelForCausalLM, AutoTokenizer
from trl import DPOConfig, DPOTrainer

MODEL_ID = "distilgpt2"
MAX_NEW = 48


def score_response(text: str) -> float:
    """Cheap stand-in for an LLM judge: prefer substantive, non-refusal answers."""
    t = text.lower().strip()
    penalty = 3.0 * t.count("cannot") + 2.0 * t.count("sorry") + 2.0 * t.count("as an ai")
    return float(len(t.split())) - penalty


def judge_pair(a: str, b: str) -> tuple[str, str, str]:
    """Return (chosen, rejected, verdict)."""
    sa, sb = score_response(a), score_response(b)
    if sa >= sb:
        # Ties are kept (verdict "tie") so the tiny demo dataset stays non-empty.
        # With a real judge, drop them: they carry no preference signal.
        return a, b, "A" if sa > sb else "tie"
    return b, a, "B"


def generate_one(model, tokenizer, prompt: str, temperature: float, gen_seed: int) -> str:
    torch.manual_seed(gen_seed)  # reproducible sampling for each candidate
    inputs = tokenizer(prompt, return_tensors="pt").to(model.device)
    with torch.no_grad():
        out = model.generate(
            **inputs,
            max_new_tokens=MAX_NEW,
            temperature=temperature,
            do_sample=True,
            top_p=0.95,
            pad_token_id=tokenizer.pad_token_id,
        )
    # Decode only the newly generated tokens, not the prompt
    return tokenizer.decode(out[0][inputs["input_ids"].shape[1]:], skip_special_tokens=True).strip()


def build_preference_dataset(model, tokenizer, prompts: list[str], sim_threshold: float = 0.88):
    rows = []
    for i, prompt in enumerate(prompts):
        # Two candidates per prompt, sampled at different temperatures for diversity
        a = generate_one(model, tokenizer, prompt, temperature=0.75, gen_seed=1000 + i * 2)
        b = generate_one(model, tokenizer, prompt, temperature=1.0, gen_seed=1000 + i * 2 + 1)
        sim = SequenceMatcher(None, a.lower(), b.lower()).ratio()
        if sim >= sim_threshold:
            print(f"  [skip] prompt {i}: responses too similar (sim={sim:.2f})")
            continue
        chosen, rejected, verdict = judge_pair(a, b)
        rows.append({
            "prompt": prompt,
            "chosen": chosen,
            "rejected": rejected,
            "metadata": json.dumps({"sim": sim, "judge_verdict": verdict}),
        })
    return rows


PROMPTS = [
    "Explain what a neural network is in two sentences.",
    "Give one reason exercise can help mood.",
    "What is the capital of Italy?",
    "Write a one-line Python comment about lists.",
    "Why do we shuffle training data?",
]

print("Recipe 1: load SFT stand-in (%s)…" % MODEL_ID)
tok = AutoTokenizer.from_pretrained(MODEL_ID)
tok.pad_token = tok.eos_token
sft_model = AutoModelForCausalLM.from_pretrained(MODEL_ID)
sft_model.eval()

print("Generating + judging + filtering…")
random.seed(42)
pairs = build_preference_dataset(sft_model, tok, PROMPTS, sim_threshold=0.88)
print(f"  Kept {len(pairs)} preference pairs (from {len(PROMPTS)} prompts).")
assert len(pairs) >= 2, "Need at least 2 pairs: lower sim_threshold or add prompts."

# DPOTrainer only needs prompt/chosen/rejected; drop the bookkeeping column
train_ds = Dataset.from_list([{k: v for k, v in p.items() if k != "metadata"} for p in pairs])
print("Sample row:", train_ds[0])

lora = LoraConfig(
    r=8,
    lora_alpha=16,
    lora_dropout=0.05,
    bias="none",
    task_type="CAUSAL_LM",
    target_modules=["c_attn", "c_proj"],  # GPT-2's fused QKV and output projections
)
args = DPOConfig(
    output_dir="./dpo_recipe1_out",
    max_length=256,
    per_device_train_batch_size=1,
    max_steps=6,
    logging_steps=2,
    report_to="none",
    bf16=torch.cuda.is_available() and torch.cuda.is_bf16_supported(),
    fp16=False,
    beta=0.1,
    loss_type="sigmoid",
    dataloader_num_workers=0,
    learning_rate=5e-5,
)
# With ref_model=None and a peft_config, TRL uses the base model with the
# adapters disabled as the reference, so no second model copy is loaded.
trainer = DPOTrainer(
    model=sft_model,
    ref_model=None,
    args=args,
    train_dataset=train_ds,
    processing_class=tok,
    peft_config=lora,
)
trainer.train()
print("Recipe 1 DPO done. global_step=", trainer.state.global_step)

trainer.save_model("./dpo_recipe1_out/final_adapter")
tok.save_pretrained("./dpo_recipe1_out/final_adapter")
print("Saved adapter to ./dpo_recipe1_out/final_adapter")
print("Recipe 1 OK.")
```

Recipe 2: Iterative DPO (Self-Play)

One powerful technique is to run DPO iteratively: generate new preference data using the latest model, then train DPO again. This is sometimes called online DPO or iterative DPO, and it tends to outperform single-round training because the preference data becomes more relevant to the model's current behavior.

```python
"""
Recipe 2: runnable iterative DPO (self-play style) on `distilgpt2`.
Each iteration:
  1) Generate fresh (chosen, rejected) pairs from the *current* policy (with LoRA).
  2) Heuristic judge + similarity filter (same helpers as Recipe 1).
  3) Train a few DPO steps in place on the new batch (TRL keeps the same PeftModel).
After the first TRL pass the model is already a PeftModel, so later DPOTrainer
calls use peft_config=None to avoid wrapping it twice. Because ref_model=None,
the reference is the base model with adapters disabled, i.e. the original
checkpoint rather than the previous iteration's policy.
"""
import random
from difflib import SequenceMatcher

import torch
from datasets import Dataset
from peft import LoraConfig
from transformers import AutoModelForCausalLM, AutoTokenizer
from trl import DPOConfig, DPOTrainer

MODEL_ID = "distilgpt2"
MAX_NEW = 40
NUM_ITERS = 2
STEPS_PER_ITER = 3


def score_response(text: str) -> float:
    t = text.lower().strip()
    penalty = 3.0 * t.count("cannot") + 2.0 * t.count("sorry") + 2.0 * t.count("as an ai")
    return float(len(t.split())) - penalty


def judge_pair(a: str, b: str) -> tuple[str, str]:
    """Return (chosen, rejected); ties go to `a`."""
    if score_response(a) >= score_response(b):
        return a, b
    return b, a


def generate_one(model, tokenizer, prompt: str, temperature: float, gen_seed: int) -> str:
    torch.manual_seed(gen_seed)  # reproducible sampling for each candidate
    inputs = tokenizer(prompt, return_tensors="pt").to(model.device)
    with torch.no_grad():
        out = model.generate(
            **inputs,
            max_new_tokens=MAX_NEW,
            temperature=temperature,
            do_sample=True,
            top_p=0.95,
            pad_token_id=tokenizer.pad_token_id,
        )
    return tokenizer.decode(out[0][inputs["input_ids"].shape[1]:], skip_special_tokens=True).strip()


def collect_pairs(model, tokenizer, prompts: list[str], sim_threshold: float = 0.88):
    rows = []
    for i, prompt in enumerate(prompts):
        a = generate_one(model, tokenizer, prompt, 0.75, 5000 + i * 3)
        b = generate_one(model, tokenizer, prompt, 1.0, 5000 + i * 3 + 1)
        if SequenceMatcher(None, a.lower(), b.lower()).ratio() >= sim_threshold:
            continue  # near-duplicates carry little preference signal
        c, r = judge_pair(a, b)
        rows.append({"prompt": prompt, "chosen": c, "rejected": r})
    return rows


PROMPTS = [
    "Name one benefit of reading fiction.",
    "What does CPU stand for?",
    "One tip for better sleep.",
    "Explain overfitting in one sentence.",
]

print("Recipe 2: iterative DPO on", MODEL_ID)
random.seed(0)
tok = AutoTokenizer.from_pretrained(MODEL_ID)
tok.pad_token = tok.eos_token
policy = AutoModelForCausalLM.from_pretrained(MODEL_ID)
lora = LoraConfig(
    r=8,
    lora_alpha=16,
    lora_dropout=0.05,
    bias="none",
    task_type="CAUSAL_LM",
    target_modules=["c_attn", "c_proj"],
)

for it in range(NUM_ITERS):
    print(f"\n{'=' * 60}\nITERATION {it + 1}/{NUM_ITERS}\n{'=' * 60}")
    policy.eval()  # disable dropout while sampling
    pairs = collect_pairs(policy, tok, PROMPTS, sim_threshold=0.88)
    print(f"  Collected {len(pairs)} pairs this round.")
    if len(pairs) < 2:
        raise RuntimeError("Too few pairs after filter: relax threshold or add prompts.")
    ds = Dataset.from_list(pairs)
    args = DPOConfig(
        output_dir=f"./dpo_recipe2_iter_{it}",
        max_length=256,
        per_device_train_batch_size=1,
        max_steps=STEPS_PER_ITER,
        logging_steps=1,
        report_to="none",
        bf16=torch.cuda.is_available() and torch.cuda.is_bf16_supported(),
        fp16=False,
        beta=0.1 + 0.05 * it,           # tighten the KL constraint each round
        loss_type="sigmoid",
        dataloader_num_workers=0,
        learning_rate=5e-5 / (it + 1),  # and shrink the step size
    )
    trainer = DPOTrainer(
        model=policy,
        ref_model=None,
        args=args,
        train_dataset=ds,
        processing_class=tok,
        peft_config=lora if it == 0 else None,  # wrap with LoRA only on the first pass
    )
    trainer.train()
    policy = trainer.model  # the PeftModel carries into the next round
    trainer.save_model(f"./dpo_recipe2_iter_{it}/checkpoint")
    tok.save_pretrained(f"./dpo_recipe2_iter_{it}/checkpoint")
    print(f"  Saved checkpoint under ./dpo_recipe2_iter_{it}/checkpoint")

print("\nRecipe 2 OK: policy updated across iterations in-process.")
```

Common Failure Modes and Fixes

Having trained dozens of DPO models and helped others debug their runs, I can report that the same handful of failure modes appear over and over. Here they are, with concrete fixes.

The model becomes overly verbose. This is the most common complaint. It happens when your preference data has a length bias (chosen responses are systematically longer than rejected ones). Human annotators and AI judges both tend to prefer longer, more detailed responses, and the model picks up on this. Fix: filter your dataset to remove the length correlation, add examples where the shorter response is chosen, or switch to SimPO, which explicitly normalizes by length.
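Before training, it is worth measuring the length correlation directly. The sketch below assumes pairs in the same prompt/chosen/rejected dict format used in Recipe 1 above. It uses whitespace word counts as a cheap proxy for token length. It also implements one simple version of the "filter" fix: downsampling pairs where the chosen response is longer until they are no more common than pairs where it is shorter. All function names are hypothetical.

```python
import random


def _len_diff(pair, tokenize=str.split):
    # Positive when the chosen response is longer than the rejected one
    return len(tokenize(pair["chosen"])) - len(tokenize(pair["rejected"]))


def length_bias_report(pairs, tokenize=str.split):
    """How often is the chosen response longer (or shorter) than the rejected one?"""
    diffs = [_len_diff(p, tokenize) for p in pairs]
    n = len(diffs)
    return {
        "frac_chosen_longer": sum(d > 0 for d in diffs) / n,
        "frac_chosen_shorter": sum(d < 0 for d in diffs) / n,
        "mean_len_diff": sum(diffs) / n,
    }


def rebalance_by_length(pairs, seed=0, tokenize=str.split):
    """Downsample 'chosen is longer' pairs so they are no more common than
    'chosen is shorter' pairs; shorter and equal-length pairs are all kept."""
    rng = random.Random(seed)
    longer = [p for p in pairs if _len_diff(p, tokenize) > 0]
    rest = [p for p in pairs if _len_diff(p, tokenize) <= 0]
    n_shorter = sum(1 for p in rest if _len_diff(p, tokenize) < 0)
    return rest + rng.sample(longer, min(len(longer), n_shorter))
```

Downsampling is the bluntest option and throws data away. If it removes too much, collecting pairs where the shorter response wins is the alternative.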

The model becomes evasive or sycophantic. The model starts every response with "That's a great question!" or hedges excessively with "It's important to note that..." This happens when your preference data rewards politeness and caution over substance. Fix: include preference pairs that specifically penalize sycophancy, use more diverse evaluation criteria in your judge prompt, or add a small portion of "honesty"-focused preference data.

Training loss drops but generation quality doesn't improve. The model is overfitting to surface features of the preference data rather than learning meaningful quality distinctions. Fix: reduce training epochs (often 0.5-1 epoch is better than 2-3), increase beta, use a larger and more diverse preference dataset, or add label smoothing (0.1 works well).
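Label smoothing here means the conservative-DPO variant of the sigmoid loss. Each preference label is assumed to be flipped with probability epsilon, so the loss mixes the usual term with a term for the reversed preference. TRL's `DPOConfig` exposes this as `label_smoothing`; the standalone function below is a sketch of the same formula, and its name is hypothetical. With epsilon > 0 the loss has a finite minimum at beta * h = log((1 - epsilon) / epsilon). Like IPO, it therefore stops rewarding ever-larger margins.

```python
import torch
import torch.nn.functional as F


def dpo_loss_label_smoothing(
    policy_chosen_logps, policy_rejected_logps,
    ref_chosen_logps, ref_rejected_logps,
    beta=0.1, label_smoothing=0.1,
):
    """Sigmoid DPO loss with label smoothing (conservative DPO).

    label_smoothing = assumed probability that a preference label is flipped.
    All *_logps are summed per-sequence log-probs (one entry per pair).
    """
    # h = policy log-ratio margin minus reference log-ratio margin
    logits = (policy_chosen_logps - policy_rejected_logps) - (ref_chosen_logps - ref_rejected_logps)
    losses = (
        -F.logsigmoid(beta * logits) * (1 - label_smoothing)  # label as given
        - F.logsigmoid(-beta * logits) * label_smoothing       # label flipped
    )
    return losses.mean()
```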

The model forgets capabilities it had after SFT. This is catastrophic forgetting of pre-existing abilities. Fix: use LoRA (which inherently limits the magnitude of changes), increase beta, reduce the learning rate, or mix a small fraction of SFT data into the DPO training batch (some implementations expose this as an "SFT loss coefficient").
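One way to implement an SFT loss coefficient is to add a length-averaged negative log-likelihood term on the chosen responses to the DPO loss. The code below is a sketch under that assumption, and the function and argument names are hypothetical. Mixing separate SFT examples into the batch works the same way, with their NLL in place of the chosen-response term.

```python
import torch
import torch.nn.functional as F


def dpo_plus_sft_loss(
    policy_chosen_logps, policy_rejected_logps,
    ref_chosen_logps, ref_rejected_logps,
    chosen_token_counts, beta=0.1, sft_coef=0.2,
):
    """DPO loss plus sft_coef * per-token NLL of the chosen responses.

    *_logps are summed per-sequence log-probs; chosen_token_counts holds the
    number of response tokens in each chosen sequence.
    """
    logits = (policy_chosen_logps - ref_chosen_logps) - (policy_rejected_logps - ref_rejected_logps)
    dpo = -F.logsigmoid(beta * logits).mean()
    # Per-token negative log-likelihood of the chosen response (the SFT objective)
    nll = (-policy_chosen_logps / chosen_token_counts).mean()
    return dpo + sft_coef * nll
```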

The model develops repetitive or degenerate outputs. This is a sign of too-aggressive training: low beta, high learning rate, or too many epochs. The policy has drifted too far from the reference. Fix: increase beta, decrease learning rate, train for fewer steps.

Train and eval metrics diverge early. This is classic overfitting. Your preference dataset may be too small or too homogeneous. Fix: get more diverse data, use regularization (higher beta, LoRA dropout, weight decay), or try IPO, which has built-in regularization through its squared loss.

```python
# Quick diagnostic: generate responses at each checkpoint
# and check for common pathologies
import torch


def check_for_pathologies(model, tokenizer, test_prompts, max_new_tokens=1024):
    """
    Generate responses and check for common DPO failure modes.
    max_new_tokens must leave room for the >400-word verbosity check to fire;
    400 English words can exceed 512 tokens.
    """
    pathology_checks = {
        "verbose": 0,      # Excessively long responses
        "sycophantic": 0,  # Starts with flattery
        "evasive": 0,      # Refuses to give a direct answer
        "repetitive": 0,   # Repeats phrases or sentences
        "degenerate": 0,   # Incoherent or garbled output
    }
    sycophancy_markers = [
        "great question", "excellent question", "wonderful question",
        "that's a really", "i appreciate", "what a thoughtful",
    ]
    evasion_markers = [
        "it's important to note", "it depends on", "there are many perspectives",
        "i cannot", "i'm not able to", "it's worth noting that",
    ]

    responses = []
    for prompt in test_prompts:
        inputs = tokenizer(prompt, return_tensors="pt").to(model.device)
        with torch.no_grad():
            output = model.generate(
                **inputs,
                max_new_tokens=max_new_tokens,
                temperature=0.7,
                do_sample=True,
                pad_token_id=tokenizer.pad_token_id,
            )
        response = tokenizer.decode(output[0][inputs["input_ids"].shape[1]:], skip_special_tokens=True)
        responses.append(response)
        words = response.split()
        word_count = len(words)
        response_lower = response.lower().strip()

        # Verbose: more than 400 words
        if word_count > 400:
            pathology_checks["verbose"] += 1
        # Sycophancy: response opens with flattery
        if any(response_lower.startswith(m) for m in sycophancy_markers):
            pathology_checks["sycophantic"] += 1
        # Evasion: two or more hedging/refusal phrases
        if sum(m in response_lower for m in evasion_markers) >= 2:
            pathology_checks["evasive"] += 1
        # Repetition: fewer than 70% of non-empty sentences are unique
        sentences = [s.strip().lower() for s in response.split(".") if s.strip()]
        if sentences and len(set(sentences)) < len(sentences) * 0.7:
            pathology_checks["repetitive"] += 1
        # Degenerate: almost empty, or very low vocabulary diversity
        if word_count < 5 or len(set(words)) < word_count * 0.3:
            pathology_checks["degenerate"] += 1

    total = len(test_prompts)
    print("Pathology Check Results:")
    print(f"  Verbose (>400 words): {pathology_checks['verbose']}/{total}")
    print(f"  Sycophantic:          {pathology_checks['sycophantic']}/{total}")
    print(f"  Evasive:              {pathology_checks['evasive']}/{total}")
    print(f"  Repetitive:           {pathology_checks['repetitive']}/{total}")
    print(f"  Degenerate:           {pathology_checks['degenerate']}/{total}")
    return pathology_checks, responses
```

DPO vs. RLHF: When to Use Which

Despite DPO's advantages, RLHF is not obsolete. The two methods have different strengths, and the right choice depends on your situation.

Choose DPO when you want simplicity and fast iteration. DPO is dramatically easier to implement, debug, and tune. If you're a small team, working with a 7B-13B model, and have a reasonable preference dataset (5K-50K pairs), DPO is almost certainly the right choice. The engineering overhead of RLHF is difficult to justify unless you have a dedicated infrastructure team.

Choose RLHF when you need online learning. RLHF with PPO generates new responses during training and evaluates them with the reward model, which means the training signal is always on-policy. DPO trains on a fixed dataset of preference pairs, which makes it an offline method. For frontier model alignment where the preference distribution shifts as the model improves, RLHF's online nature is a genuine advantage. This is why several leading labs still use RLHF (or hybrid approaches) for their largest models.

Choose RLHF when you have an excellent reward model and want to optimize it aggressively. A well-trained reward model can provide dense signal for any possible output, while DPO's signal is limited to the specific pairs in the training set. If your reward model generalizes well, PPO can find better policies by exploring the output space more broadly.

Consider hybrid approaches for the best of both worlds. Several recent papers propose "online DPO" where you generate new preference pairs using the current model during training, essentially getting the online learning benefit of RLHF without the PPO machinery. The RLHF and DPO distinction is becoming less binary as the field matures.

The practical reality, as of early 2025, is that most open-source models and most production alignment work outside of frontier labs use DPO or one of its variants. The simplicity advantage is overwhelming for teams that don't have the engineering resources to run PPO reliably at scale.

Scaling DPO: Memory Optimization and Distributed Training

Training DPO on large models requires careful memory management. Here's a practical guide to fitting DPO training into your hardware.

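A useful first step is a back-of-the-envelope estimate of weight and optimizer memory. The sketch below uses common rules of thumb rather than measurements. Full fine-tuning with mixed-precision AdamW costs about 16 bytes per parameter: bf16 weights and gradients, fp32 master weights, and two fp32 Adam moments. A frozen bf16 reference copy adds 2 bytes per parameter. With LoRA and `ref_model=None`, as in Recipe 1, the frozen base doubles as the reference, so only one copy is stored. 4-bit quantization (QLoRA) shrinks that copy to roughly half a byte per parameter. The `keep_reference=False` case models precomputing reference log-probs once before training (TRL's `DPOConfig` has a `precompute_ref_log_probs` option for this). The estimate ignores activations and adapter weights, and the function name is hypothetical.

```python
def dpo_memory_gb(n_params_billion: float, mode: str = "full", keep_reference: bool = True) -> float:
    """Rough GPU memory (decimal GB) for weights + optimizer state, ignoring activations."""
    per_param_bytes = {
        "full": 2 + 2 + 4 + 4 + 4,  # bf16 weights, bf16 grads, fp32 master, Adam m, Adam v
        "lora": 2.0,                # frozen bf16 base, also used as the reference
        "qlora": 0.5,               # frozen 4-bit base, also used as the reference
    }
    if mode not in per_param_bytes:
        raise ValueError(f"mode must be one of {sorted(per_param_bytes)}")
    bytes_pp = per_param_bytes[mode]
    if mode == "full" and keep_reference:
        bytes_pp += 2  # separate frozen bf16 reference model
    # (n * 1e9 params) * bytes / (1e9 bytes per GB)
    return n_params_billion * bytes_pp
```

For a 7B model this gives roughly 126 GB for full fine-tuning, 14 GB for bf16 LoRA, and 3.5 GB for QLoRA, all before activations. That gap is what makes LoRA/QLoRA attractive on a single GPU.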

Multi-GPU DPO with DeepSpeed

For models that don't fit on a single GPU, use DeepSpeed ZeRO:

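```python
import json

# DeepSpeed ZeRO Stage 3 config for DPO training, written to deepspeed_config.json.
# "auto" values are filled in from TrainingArguments/DPOConfig by the Hugging Face
# integration, which avoids mismatch errors between the two configs.
deepspeed_config = {
    "bf16": {
        "enabled": "auto",
    },
    "zero_optimization": {
        "stage": 3,
        "offload_optimizer": {
            "device": "cpu",  # Offload optimizer states to CPU to save GPU memory
            "pin_memory": True,
        },
        "offload_param": {
            "device": "none",  # Keep params on GPU for speed
        },
        "overlap_comm": True,
        "contiguous_gradients": True,
        "sub_group_size": 1e9,
        "reduce_bucket_size": "auto",
        "stage3_prefetch_bucket_size": "auto",
        "stage3_param_persistence_threshold": "auto",
        "stage3_max_live_parameters": 1e9,
        "stage3_max_reuse_distance": 1e9,
        # Gather the sharded weights into one 16-bit state dict when saving
        "stage3_gather_16bit_weights_on_model_save": True,
    },
    # Set gradient_accumulation_steps (e.g. 8) and max_grad_norm in DPOConfig;
    # "auto" copies them here.
    "gradient_accumulation_steps": "auto",
    "gradient_clipping": "auto",
    "train_batch_size": "auto",
    "train_micro_batch_size_per_gpu": "auto",
    "wall_clock_breakdown": False,
}

with open("deepspeed_config.json", "w") as f:
    json.dump(deepspeed_config, f, indent=2)

# Launch command. train_dpo.py and its --model_name/--dataset/--beta/--learning_rate
# flags are placeholders for your own training script.
# deepspeed --num_gpus 4 train_dpo.py \
#     --deepspeed deepspeed_config.json \
#     --model_name mistralai/Mistral-7B-Instruct-v0.2 \
#     --dataset ultrafeedback \
#     --beta 0.1 \
#     --learning_rate 5e-7
```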

Conclusion

DPO represents a genuine simplification of the alignment pipeline. It takes the core idea behind RLHF (learn from human preferences) and strips away the infrastructure complexity (reward models, PPO, value functions) by exploiting a mathematical equivalence between reward-based and policy-based formulations of the preference learning problem.

The practical implications are significant. A single researcher with a consumer GPU can now align a 7B model using QLoRA and DPO in a few hours. Two years ago, this required a team of engineers managing a multi-model PPO training loop across a cluster of A100s. The democratization of alignment is real, and DPO is a major reason why.

But DPO is not magic. It is only as good as the preference data you feed it. It can teach the model to be verbose, sycophantic, or evasive if the data rewards those behaviors. It can overfit if trained too aggressively. It can degrade general capabilities if the KL constraint is too loose. The math is elegant; the engineering still requires care.

The most important takeaways from this guide are: start with clean, validated preference data and spend more time on data quality than on hyperparameter tuning. Use beta=0.1 as a starting point and adjust based on whether the model changes too much or too little. Train for one epoch, not three. Evaluate with both automatic metrics and manual inspection. Check for capability regression on standard benchmarks. And when something goes wrong, look at the data first, the metrics second, and the hyperparameters third.

References

- Rafailov, R., Sharma, A., Mitchell, E., Ermon, S., Manning, C. D., & Finn, C. (2023). Direct Preference Optimization: Your Language Model is Secretly a Reward Model. NeurIPS 2023. arXiv:2305.18290

- Azar, M. G., Rowland, M., Piot, B., Guo, D., Calandriello, D., Valko, M., & Munos, R. (2023). A General Theoretical Paradigm to Understand Learning from Human Feedback. arXiv:2310.12036

- Ethayarajh, K., Xu, W., Muennighoff, N., Jurafsky, D., & Kiela, D. (2024). KTO: Model Alignment as Prospect Theoretic Optimization. arXiv:2402.01306

- Hong, J., Lee, N., & Thorne, J. (2024). ORPO: Monolithic Preference Optimization without Reference Model. arXiv:2403.07691

- Meng, Y., Xia, M., & Chen, D. (2024). SimPO: Simple Preference Optimization with a Reference-Free Reward. arXiv:2405.14734

- Ouyang, L., Wu, J., Jiang, X., et al. (2022). Training Language Models to Follow Instructions with Human Feedback. NeurIPS 2022. arXiv:2203.02155

- Schulman, J., Wolski, F., Dhariwal, P., Radford, A., & Klimov, O. (2017). Proximal Policy Optimization Algorithms. arXiv:1707.06347

- Tunstall, L., Beeching, E., Lambert, N., et al. (2023). Zephyr: Direct Distillation of LM Alignment. arXiv:2310.16944

- Ivison, H., Wang, Y., Pyatkin, V., et al. (2023). Camels in a Changing Climate: Enhancing LM Adaptation with Tulu 2. arXiv:2311.10702