OpenAI's "Duct Tape" Model Explained: GPT Image 2 Is Already Here and It's Terrifying

Written by

Jay Kim

OpenAI's leaked "Duct Tape" model appeared on LM Arena under three codenames — and it's already in ChatGPT. GPT Image 2 beats Nano Banana Pro in photorealism, text rendering, and world knowledge. Here's everything we know.

On April 4, 2026, three anonymous image models quietly appeared on LM Arena, the platform where users compare AI models in blind tests. They had names that sounded like they belonged in a hardware store, not on the bleeding edge of AI research. Within hours, the AI community had figured out what they were. Within days, the internet lost its mind.

An unreleased OpenAI image generation model briefly appeared on LM Arena under three different codenames before being taken down within hours.[5] The model, internally referred to as GPT-Image-2, was live under aliases including maskingtape-alpha, gaffertape-alpha, and packingtape-alpha.[5]

The AI community has since dubbed this family of models "Duct Tape" — a collective nickname for the adhesive-tape-themed codenames that now represents the most significant leap in AI image generation anyone has seen in 2026.

The consensus across every thread: this is a meaningful step above anything currently available publicly.[9]

This is the complete breakdown of what GPT Image 2 is, why the leak has the entire AI image industry scrambling, how to tell if you've already been served the new model without knowing it, and what it means for creators, designers, and developers who depend on AI-generated visuals.

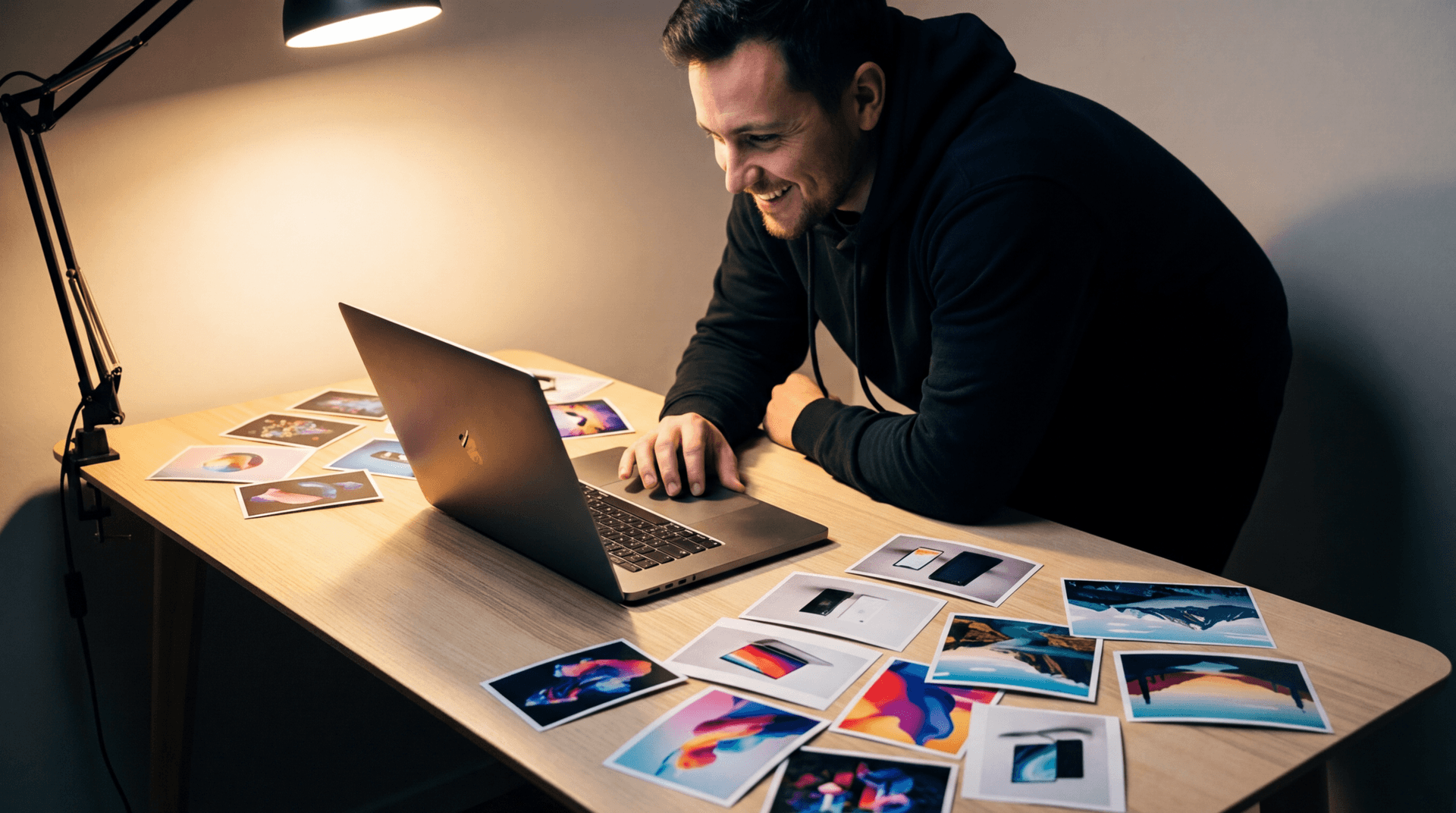

Before I Explain How, Look at What It Can Do: 5 Use Cases

I ran five real-world use cases through GPT Image 2 — each designed to break a different aspect of traditional image generation.

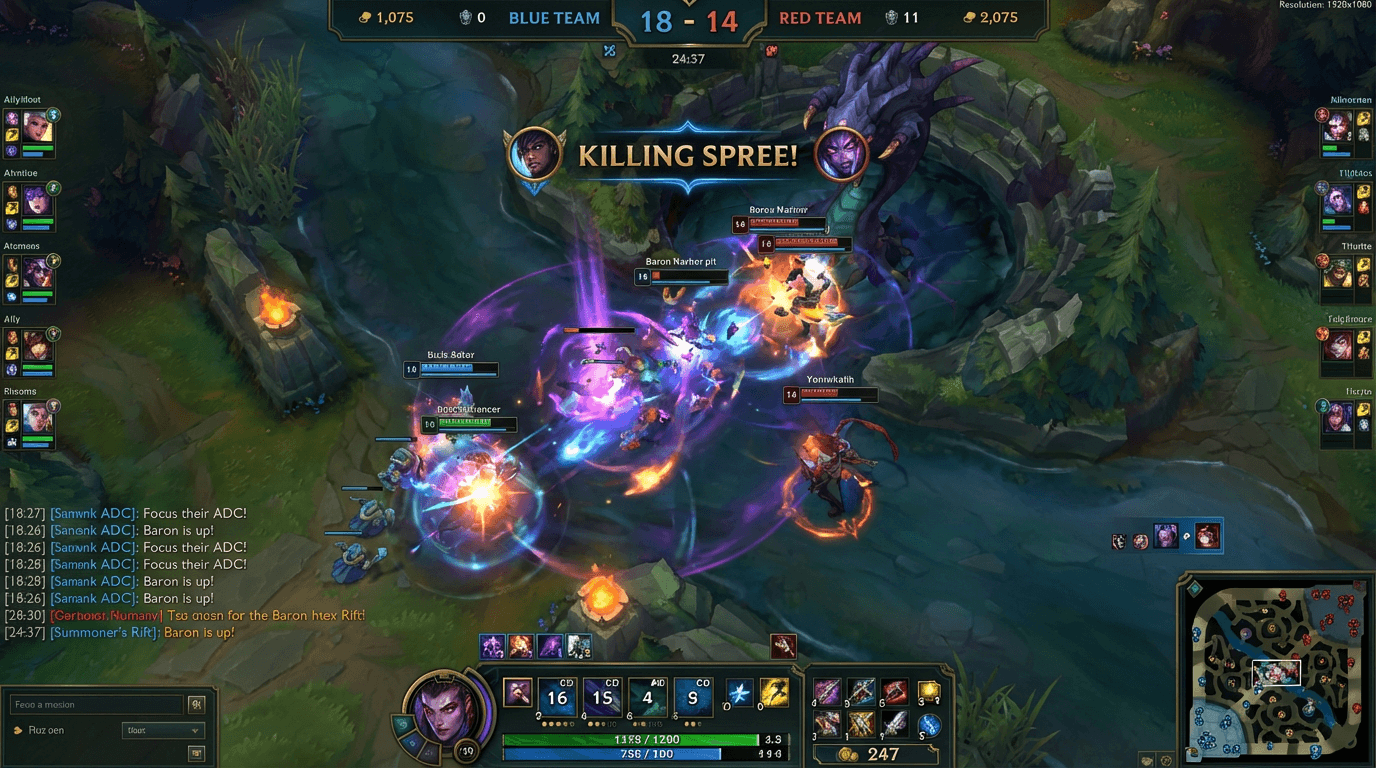

[League of Legends screenshot]

First, I asked it to generate a full League of Legends ranked match screenshot, complete with HUD, minimap, kill score, cooldown timers, and chat box. Every single text element came back legible, every icon sat in the right place — a pixel-perfect recreation of a UI that would have been unreadable gibberish six months ago.

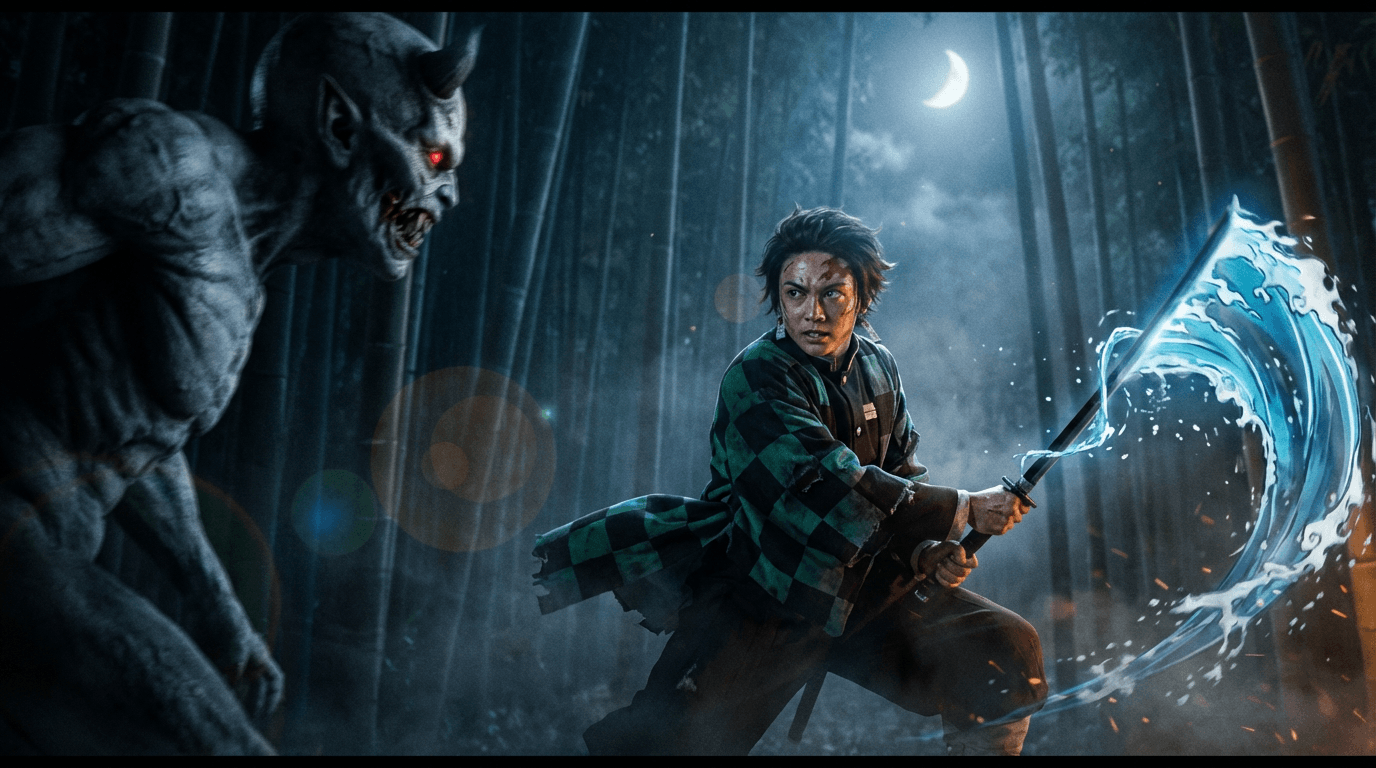

[Demon Slayer live-action]

Next, I pushed it into cinematic territory: a live-action reimagining of Demon Slayer, with real skin textures, real fabric wrinkles, and Tanjiro's Water Breathing technique rendered as a photorealistic VFX effect swirling around the blade. The result looks like a leaked still from a Hollywood adaptation, not an AI output.

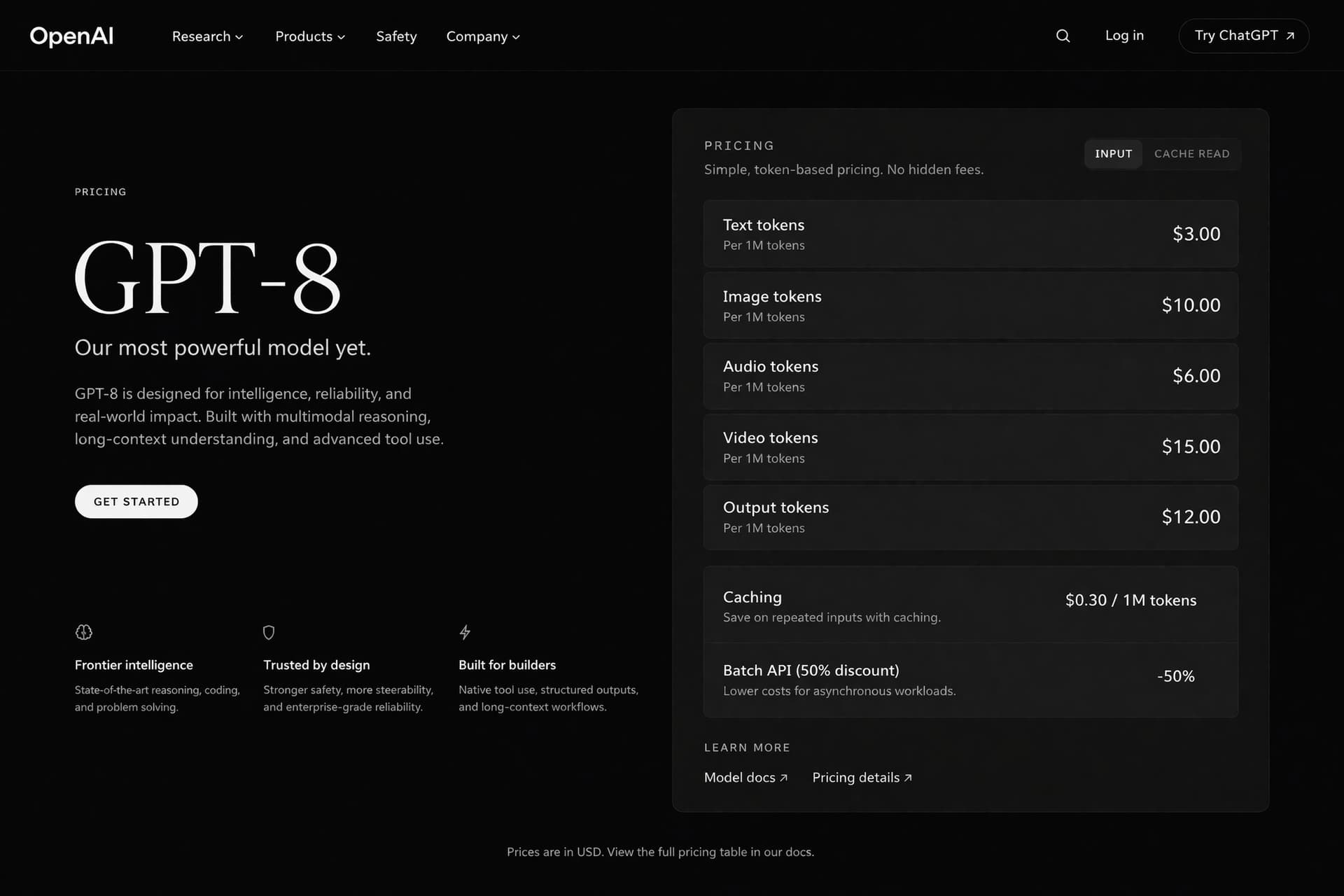

[Fake website]

For the third test, I generated a fake OpenAI landing page inside a browser frame — headline, trusted-by logos, the whole homepage formula. The result is unsettling precisely because it looks completely ordinary, like a real website with correctly spelled text and a functional-looking layout.

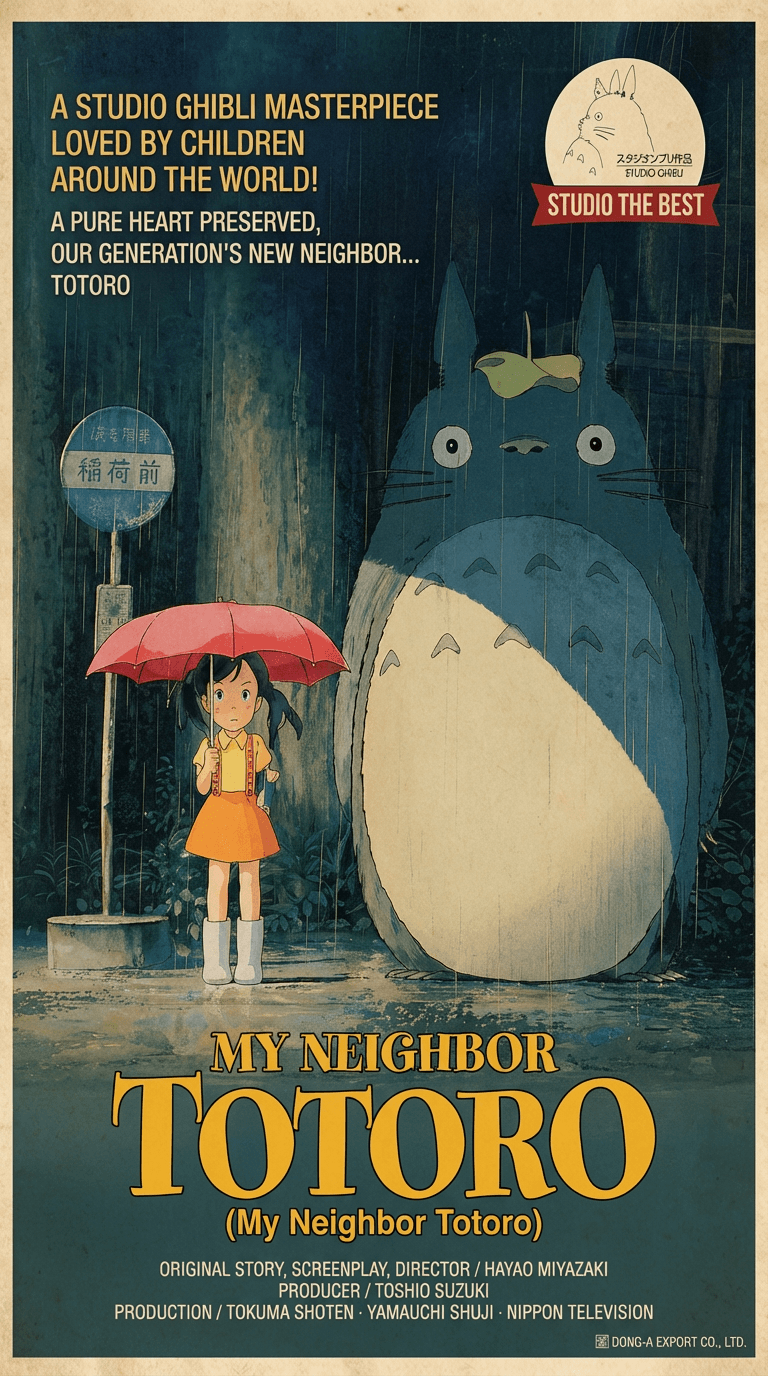

[Totoro poster]

Then I shifted to artistic style, prompting a Totoro-inspired movie poster to see if the model understood the hand-painted warmth and compositional balance of Studio Ghibli's visual language — and it nailed it. GPT Image 2 doesn't just have technical skill. It has genuine stylistic comprehension.

[JJK × LoL collaboration webpage]

Finally, I gave it something that has never existed in the real world: a Jujutsu Kaisen × League of Legends collaboration landing page. This forced the model to know both IPs, understand how a game collaboration webpage is structured, and blend two completely different visual identities into one cohesive design. It produced character splash art, event branding, navigation elements, and promotional copy arranged in a layout that looks like it was shipped by a Riot Games marketing team.

Five prompts. Five capabilities — text rendering, photorealism, UI accuracy, artistic style, and cross-IP creative synthesis. It passed every single one.

Now let me explain why.

The Discovery: Three Models Walk Into an Arena

To understand the "Duct Tape" story, you need to understand how LM Arena works. It is a public benchmarking platform where AI models are served to users anonymously. You type a prompt, you get two outputs side by side from unknown models, and you vote on which one is better. The model identities are hidden. This is how the AI industry gets unbiased quality signals.
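To make the blind-voting idea concrete, here is a minimal sketch of how pairwise votes can be turned into a ranking. It uses an Elo-style update, which is one common approach and not a description of LM Arena's actual scoring method. The step size `K`, the starting rating, and the model names are all assumptions for illustration.

```python
from collections import defaultdict

K = 32  # assumed update step size; not an LM Arena parameter


def expected_score(rating_a: float, rating_b: float) -> float:
    """Probability that A beats B under the Elo model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def apply_votes(votes, start=1000.0, k=K):
    """Update ratings from a sequence of (winner, loser) blind votes."""
    ratings = defaultdict(lambda: start)
    for winner, loser in votes:
        p_win = expected_score(ratings[winner], ratings[loser])
        # The winner gains exactly what the loser gives up.
        ratings[winner] += k * (1.0 - p_win)
        ratings[loser] -= k * (1.0 - p_win)
    return dict(ratings)


# Hypothetical votes; "model-a" and "model-b" are placeholder names.
votes = [("model-a", "model-b"), ("model-a", "model-b"), ("model-b", "model-a")]
ratings = apply_votes(votes)
print(sorted(ratings, key=ratings.get, reverse=True))
```

Because voters never see the model names, the only input to the ranking is which output they preferred, which is the point of the blind setup.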

This is a familiar playbook. AI companies routinely test upcoming models on public leaderboards under anonymous names to gather unbiased benchmark data. But the community is getting better at sniffing these out.[8]

Early April 4, 2026 is when things got interesting. Three models turned up on LM Arena with names nobody recognized: maskingtape-alpha, gaffertape-alpha, and packingtape-alpha. No company attached.[3]

Developer Pieter Levels was among the first to call it out on X, posting that the models showed "extremely good world knowledge and great text rendering" and speculating they could outperform Nano Banana Pro.[5] Venture investor Justine Moore followed up with her own tests, noting that simple prompts like "average engineer's screen" and "young woman taking selfie with Sam Altman" produced results with an uncanny level of contextual awareness.[5]

That Sam Altman detail is doing a lot of heavy lifting here, if you think about which company's training data would contain enough Sam Altman photos to render his face accurately from a casual prompt.[5]

Then they vanished. The models were pulled from Arena within hours of being publicly identified.[3]

The speed of removal told the community everything it needed to know. They pulled all three fast. That is not what you do with a model that is not ready. That is what you do with a model that is about to launch.[9]

Why "Duct Tape"? The Naming Convention Explained

The three models appeared under adhesive-tape-themed codenames: maskingtape-alpha, gaffertape-alpha, and packingtape-alpha. They are believed to be three variants of GPT Image 2 under test on LM Arena (formerly known as Chatbot Arena), and they likely represent different configurations or optimizations of the same base model.[9]

The community quickly adopted "Duct Tape" as a collective shorthand — partly because it is catchier than saying three separate tape names, and partly because the viral posts on X and Threads used it to describe the model family. One widely shared post described "a GPT-based model currently testing in the arena under the bizarre name 'duct-tape'" that was "turning the global AI community upside down."

Three separate code names running simultaneously suggests OpenAI was testing multiple variants, probably with different safety or quality tuning, to see which performed best in blind evaluations before picking one to ship.[9]

This is not a new strategy. In December 2025, two anonymous models appeared on LM Arena under the codenames "Chestnut" and "Hazelnut." They were tested briefly, removed, and weeks later OpenAI shipped GPT Image 1.5.[10] The tape models follow the exact same playbook.[10]

And Google did the same thing before them. Nano Banana first appeared on LM Arena in August 2025 with no company claiming credit, quickly drawing attention for its image editing capabilities. Within days, it had shot to the top of the leaderboard. Google hinted at its involvement through a series of banana emoji posts by senior executives including Demis Hassabis, before formally confirming the model was Gemini 2.5 Flash Image.[9]

If you are a creator building visual content and want to stay ahead of whichever model ends up on top, the AI Image Generator inside Miraflow AI lets you generate professional-quality images from text prompts using multiple leading models — so your workflow is never dependent on a single provider.

What Makes GPT Image 2 Terrifying: The Five Breakthroughs

The word "terrifying" is not hyperbole. The outputs from the brief window that the tape models were live on Arena — and the ongoing A/B testing inside ChatGPT — represent genuine capability breakthroughs that change what AI image generation is useful for. Here are the five areas where GPT Image 2 separates itself from everything else.

1. Text Rendering That Actually Works

This is the headline capability, and it is the one that has the most immediate practical implications for creators and marketers.

Rather than floating text awkwardly over images — a persistent limitation in most AI image generators — GPT-Image-2 integrates written language into scenes naturally, including handwritten medical notes with convincing penmanship and comic book panels with readable speech bubbles and accurate costume details on characters like Spider-Man and Batman.[5]

In earlier image models, letters bleed into each other, words get scrambled, and whatever text does appear tends to hover over the scene like an afterthought rather than belonging to it. GPT Image 1.5 improved this significantly. GPT Image 2 appears to have largely solved it. Community testers reported that text inside complex scenes (UI screenshots, product mockups, signage) rendered cleanly and correctly. The words sat in context, not on top of it. For anyone who's tried to generate marketing assets or interface mockups with earlier models, that's a practical difference that changes what the tool is actually useful for.[3]

While GPT Image 1.5 achieved roughly 90-95% text accuracy, the leaked GPT Image 2 models demonstrated near-perfect text rendering on signs, labels, UI elements, code snippets, and even handwritten text.[10]

This alone transforms the use case for AI image generation. Until now, AI-generated images were mostly useful for illustrations, stock photo replacements, and creative exploration. Reliable text rendering opens up marketing automation, document generation, product visualization, and content pipelines. GPT Image 2 extends image generation into territory where the text in the image matters, which covers most real-world marketing and product content.[2]

2. World Knowledge That Feels Uncanny

This is the capability that made people stop scrolling. The model does not just generate aesthetically plausible images — it generates images of things that look like the actual things they are supposed to be.

Where GPT-Image-1 often defaulted to generic aesthetics, GPT-Image-2 demonstrates what early testers described as genuine world knowledge: IKEA storefronts at night rendered with architectural accuracy, YouTube and Windows interfaces reproduced closely enough to pass as real screenshots, and Minecraft scenes with correct in-game UI and art style.[5]

This is where GPT Image 2 surprised people most. One tester prompted the model with "average engineer's screen" and got back a monitor setup that looked like it came from a real software developer's desk with realistic browser tabs, familiar UI patterns, and contextually appropriate content on the screen. That's not just artistic style. That's knowledge about what the world actually looks like.[3]

A fabricated "Claude Opus 5 Internal Document" embedded inside a Minecraft world generated by the model went viral on X, accumulating over 439,000 views.[5]

3. Photorealism That Fools Humans

Users who accessed the model before it disappeared reported significant improvements over its predecessor. The persistent warm yellow color cast that had become a signature flaw of GPT-Image-1 appears to have been eliminated. Photorealistic portraits — including complex beach scenes with multiple subjects, accurate hand anatomy, and realistic sunglass reflections — were described as indistinguishable from real photographs by people shown them without context.[5]

One Russian-language post about the leak flagged something that deserves more attention: some of the generated images are getting hard to distinguish from photographs. The poster admitted they couldn't always tell whether users were uploading real camera photos to troll, or whether the generations had simply gotten that good. That's a different kind of problem than text rendering or spatial reasoning. And it's one that gets thornier the better these models become.[5]

4. A Brand-New Architecture

This is not an incremental update to GPT-4o's image capabilities. It's built from scratch, suggesting OpenAI has been working on a dedicated image generation pipeline rather than bolting image output onto a multimodal model.[8]

The "new standalone architecture" of GPT Image 2 may be a hybrid of autoregressive and diffusion methods, combining the strengths of both.[4]

Multiple sources report a shift from a two-stage inference process to single-pass inference, which would explain both the quality improvement and the expected speed gains. New metadata tags have also been detected in PNG file outputs from suspected GPT Image 2 generations, further supporting the theory of a fundamentally different system. This architectural overhaul matters because it suggests OpenAI is not just iterating. They're rebuilding the image generation stack from the ground up.[10]
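The reports do not name the new metadata tags, so I can't show you what to look for specifically. What I can show is a minimal sketch of how to list the textual metadata chunks in any PNG using only Python's standard library, so you can compare outputs yourself. The keyword `ExampleKey` below is made up for the demo and is not a real GPT Image 2 tag.

```python
import struct
import zlib

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"
TEXT_CHUNK_TYPES = {b"tEXt", b"zTXt", b"iTXt"}


def iter_chunks(data: bytes):
    """Yield (chunk_type, chunk_body) pairs from raw PNG bytes."""
    if not data.startswith(PNG_SIGNATURE):
        raise ValueError("not a PNG file")
    pos = len(PNG_SIGNATURE)
    while pos + 8 <= len(data):
        length, ctype = struct.unpack(">I4s", data[pos:pos + 8])
        body = data[pos + 8:pos + 8 + length]
        yield ctype, body
        pos += 12 + length  # 4 length + 4 type + body + 4 CRC
        if ctype == b"IEND":
            break


def text_keywords(data: bytes):
    """Return the keyword of every textual metadata chunk."""
    keys = []
    for ctype, body in iter_chunks(data):
        if ctype in TEXT_CHUNK_TYPES:
            # All three text chunk types start with a null-terminated keyword.
            keyword = body.split(b"\x00", 1)[0]
            keys.append(keyword.decode("latin-1"))
    return keys


def make_chunk(ctype: bytes, body: bytes) -> bytes:
    """Build one PNG chunk (used here only to create demo data)."""
    crc = zlib.crc32(ctype + body) & 0xFFFFFFFF
    return struct.pack(">I", len(body)) + ctype + body + struct.pack(">I", crc)


# In-memory demo with no pixel data; only the chunk layout matters here.
demo = (
    PNG_SIGNATURE
    + make_chunk(b"IHDR", struct.pack(">IIBBBBB", 1, 1, 8, 0, 0, 0, 0))
    + make_chunk(b"tEXt", b"ExampleKey\x00example value")
    + make_chunk(b"IEND", b"")
)
print(text_keywords(demo))  # ['ExampleKey']
```

For a real file, read it in binary mode and pass the bytes to `text_keywords`. Keywords that show up in suspected new-model outputs but not in older ones would be the interesting ones.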

5. Speed That Could Redefine Workflows

GPT Image 1.5 already achieved a 4x speed increase compared to the first generation. With a brand-new architecture, GPT Image 2 is expected to complete high-quality image generation in under 3 seconds, further shortening the creative cycle.[4]

If these speed claims hold at launch, the model moves from "tool you use occasionally" to "tool embedded in every step of your creative process." When generation takes less time than typing the next prompt, iteration becomes effectively free.

For creators who want to experiment with the best available AI image tools today while waiting for the official GPT Image 2 launch, Miraflow AI provides a single dashboard where you can generate images, create cinematic videos, build AI actor videos, and design YouTube thumbnails — all from one account.

How It Already Beat the King: GPT Image 2 vs. Nano Banana Pro

To appreciate the significance of the Duct Tape leak, you need to understand what it dethroned.

Prior to the leak, Google DeepMind's Nano Banana Pro had established itself as the benchmark for photorealism in early 2026, consistently outperforming GPT-Image-1.5 in head-to-head comparisons across AI benchmarking trackers.[5]

What followed was a genuine cultural moment. Nano Banana brought 10 million new users to Gemini and facilitated over 200 million image edits within weeks. Gemini briefly became the top free app on the App Store, displacing ChatGPT.[9]

Nano Banana Pro was the benchmark. It was what every model was measured against. Then the tape models appeared.

In blind Arena comparisons, testers noted that the tape models made Nano Banana Pro "look like DALL-E." One tester said they "outperform NBP in realism, text rendering, and world knowledge simultaneously."[10]

The early GPT-Image-2 samples suggest OpenAI has closed — and in some categories, surpassed — that lead. Independent testers noted the model outperforms Nano Banana Pro in realism, text rendering, and world knowledge simultaneously, a combination that, if sustained in the final release, would represent a rare sweep across all three categories at once.[5]

Specific examples from community testing tell the story. One user noted that packingtape-alpha correctly rendered the time on a watch in an image, something Nano Banana Pro failed to do. Another found that in a side-by-side comparison of a first-person Minecraft gameplay screenshot set in Manhattan, maskingtape-alpha outperformed both of its tape siblings as well as Nano Banana Pro. Not everything is perfect, though: the models reportedly still fail the Rubik's Cube reflection test, a standard stress test for spatial reasoning in image generation.[9]

The leaked models showed particular strength on interface screenshots and game UIs. A prompt for "top-down strategy game about optimizing an AI data center" reportedly made Nano Banana Pro look a full generation behind. This suggests the training data included a significant volume of screen recordings and UI captures, which makes the model unusually capable for anyone building product mockups, app demos, or game assets.[3]

Google has since responded with Nano Banana 2. On the April 9, 2026 LM Arena text-to-image leaderboard, Google's gemini-3.1-flash-image-preview (Nano Banana 2) holds first place. OpenAI's gpt-image-1.5-high-fidelity sits second.[6] But GPT Image 2 has not yet been officially added to the leaderboard — and when it is, the standings are expected to shift dramatically.

It's Already in Your ChatGPT: The Silent A/B Test

Here is the part that most people miss: GPT Image 2 is not just a model that briefly appeared on an Arena leaderboard. It is already being served to real ChatGPT users.

A large number of users on X (formerly Twitter) have reported that when the ChatGPT Images feature generates complex images (such as those containing significant amounts of text, UI elements, or product shots), it randomly switches to a noticeably different new model, with output quality significantly higher than GPT Image 1.5.[1]

Some ChatGPT users have reported gaining permanent access to the new model, while others are seeing its outputs through an A/B testing framework where they are asked to choose between competing results.[7]

OpenAI routinely A/B tests features inside ChatGPT before official rollout. This is standard practice — different users get different model versions, and the results inform what gets deployed more broadly.[2]

There are ways to increase your chances of triggering the new model. The key is to generate complex images that contain a lot of text and interface elements. Simple landscapes or pure artistic creations are more likely to be routed to the older model. Generating multiple images in a row increases the probability of hitting the new model.[1]

How can you tell if you have been served GPT Image 2 instead of GPT Image 1.5? The community has identified several telltale signs: no yellow color cast on the image, text that renders cleanly within the scene rather than floating on top of it, noticeably higher resolution and detail density, and outputs with contextual accuracy that feels like the model "knows" what specific real-world things look like.

The Sora Connection: Where the Compute Came From

The timing of the Duct Tape leak is not a coincidence. It traces directly back to one of the most dramatic product shutdowns in AI history.

OpenAI shut down Sora, its AI video generation tool, on March 24, 2026. The numbers were brutal: Sora was burning $15 million per day in inference costs at peak while generating only $2.1 million in total lifetime revenue. Sam Altman stated the shutdown was to "focus compute and product capabilities on next generation" products.[10]

That freed up an enormous amount of GPU capacity, and it's hard to imagine that capacity isn't being redirected toward models like GPT Image 2. The timing lines up perfectly. Sora shut down March 24. The tape models appeared on LM Arena eleven days later on April 4. That's not a coincidence.[10]

The shutdown was abrupt enough that Disney — which had committed to a $1 billion partnership built around the platform — found out less than an hour before the public announcement.[9]

The strategic calculation is clear. OpenAI killed a product that was hemorrhaging money and redirected those compute resources toward a product category where it could actually win. Image generation is cheaper to run than video generation, has higher user engagement, and is a category where OpenAI's ChatGPT integration gives it an enormous distribution advantage.

OpenAI's GPT Image 1.5 had already topped the LM Arena image leaderboard in December 2025, edging past Nano Banana Pro, a direct response to Google's momentum in the space. A GPT-Image-2, if that's what the tape models represent, would be OpenAI doubling down on the one consumer AI category where viral adoption is genuinely happening.[9]

The DALL-E Deadline: Why a Launch Is Imminent

There is a hard deadline forcing OpenAI's hand.

OpenAI announced in November 2025 that both DALL-E 2 and DALL-E 3 will be shut down on May 12, 2026.[10] Azure OpenAI already retired DALL-E 3 on February 18, 2026.[10] The official replacement is the GPT Image model family.[10]

That retirement means developers currently using the older API endpoints need to migrate anyway. GPT Image 2 would give them something worth migrating to.[3]

This creates a natural forcing function. The convergence of signals points to a launch window measured in weeks, not months.

The testing pattern matches GPT Image 1.5. In December 2025, anonymous models ("Chestnut" and "Hazelnut") appeared on LM Arena, were pulled quickly, and GPT Image 1.5 launched within weeks. The tape models follow the same pattern.[10]

OpenAI doesn't A/B test products that are months away from launch. The DALL-E deadline creates urgency.[10]

Anonymous testing in the Arena and beta rollouts in ChatGPT are usually signals that a release is 2–4 weeks away.[1]
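The timeline arithmetic is easy to check. The sketch below uses only the dates reported in this article and computes the gap between the Sora shutdown and the Arena appearance, the 2 to 4 week window counted from the Arena appearance, and the days remaining until the DALL-E retirement. Counting the window from April 4 is my assumption; the sources do not say which event starts the clock.

```python
from datetime import date, timedelta

# Dates as reported in this article.
SORA_SHUTDOWN = date(2026, 3, 24)
ARENA_APPEARANCE = date(2026, 4, 4)
DALLE_RETIREMENT = date(2026, 5, 12)

gap_days = (ARENA_APPEARANCE - SORA_SHUTDOWN).days
window_start = ARENA_APPEARANCE + timedelta(weeks=2)
window_end = ARENA_APPEARANCE + timedelta(weeks=4)
days_to_deadline = (DALLE_RETIREMENT - ARENA_APPEARANCE).days

print(gap_days)                  # 11
print(window_start, window_end)  # 2026-04-18 2026-05-02
print(days_to_deadline)          # 38
```

Under that assumption, the whole 2 to 4 week window closes before the May 12 deadline.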

Analyst estimates point to May–June 2026. OpenAI has not confirmed any dates.[9]

Not every leak agrees with the standalone-architecture reports. According to some, GPT Image 2 will not be a standalone model but will be integrated directly into the GPT-5 family. This would mean native generation: images will be generated by the language model itself, not by a separate module.[9]

If that integration happens, the DALL-E brand name that launched the consumer AI image generation era may be permanently retired in favor of the "GPT Image" naming convention. GPT Image 2 is the direct evolution of GPT Image 1.[9]

What GPT Image 2 Means for the Competitive Landscape

The Duct Tape leak landed like a bomb in an already intensely competitive AI image generation market. Here is how each major player is affected.

Google DeepMind (Nano Banana family): Google has the most to lose. Nano Banana Pro was the undisputed leader for months, and Google has already responded with Nano Banana 2, which currently leads the text-to-image leaderboard. That lead over OpenAI's current public model is a meaningful signal: it's exactly the kind of gap that historically precedes a major release.[6] The tape models suggest OpenAI has pulled ahead on text rendering and world knowledge, two of the highest-value capabilities for commercial users.

Midjourney: Midjourney remains strong for artistic and stylistic output, but it does not compete on text rendering or world knowledge. If GPT Image 2 matches Midjourney's aesthetic quality while offering superior text accuracy and contextual understanding, the value proposition for paying a separate Midjourney subscription weakens considerably.

Open-source (FLUX, Stable Diffusion): We're in the middle of an AI image generation arms race. Google has been recommending Nano Banana Pro as its go-to image model, Midjourney continues to evolve, and open-source models like Stable Diffusion are getting increasingly capable.[8] Open-source models remain the only option that offers zero per-generation cost and full local control, but the quality gap with GPT Image 2 is likely to widen at launch.

The broader market: One thing is clear: 2026 is shaping up to be the year AI image generation becomes truly production-ready.[8] The combination of near-perfect text rendering, photorealism that fools humans, and sub-3-second generation times means that AI image generation is crossing the threshold from "interesting experiment" to "default production tool."

For creators navigating this rapidly shifting landscape, building workflows that work across multiple models is not optional — it is essential. Our guide to what Wan 2.7 is and everything creators need to know covers the most capable open-source option for video, and Miraflow AI offers an all-in-one dashboard covering images, videos, AI actors, and music without locking you into any single provider.

Expected Capabilities at Launch: What the Leaks Tell Us

Based on the Arena testing, community reports, and OpenAI's documented roadmap, here is what GPT Image 2 is expected to deliver at official launch.

The maximum resolution for GPT Image 1.5 is 1536×1024. GPT Image 2 is expected to support much higher native output (reported as 2048×2048, or 4096×4096 for 4K), along with a 16:9 widescreen aspect ratio, meeting the needs of professional content creation and commercial printing.[4]

Text rendering is a signature capability of the GPT Image series. While GPT Image 1.5 has already achieved about 95% accuracy for English text, it still struggles with non-Latin scripts like CJK (Chinese, Japanese, Korean) and Arabic. GPT Image 2 is expected to boost text rendering accuracy to over 99% and provide full support for multilingual text.[4]

One of the most requested features is maintaining a consistent character identity across multiple image generations. GPT Image 2 may introduce persistent embeddings or a reference system to solve this, though this remains speculative.[10]

Some rumors point to ControlNet-style spatial controls like bounding boxes or layered composition tools. This would give users much more precise control over where elements appear in the image.[10]

Expected Pricing: What Developers Should Know

GPT Image 1.5 currently costs $0.133 to $0.200 per image through the API, depending on resolution and quality settings. GPT Image 1 Mini runs about 80% cheaper.[10]

Industry analysts expect GPT Image 2 to land around $0.15 to $0.20 per image, representing a slight increase from GPT Image 1.5.[10]
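To see what those per-image figures mean at volume, here is a rough budgeting sketch. The prices are the ones quoted above, the GPT Image 2 range is an unconfirmed analyst estimate, and the monthly volume of 10,000 images is a hypothetical workload.

```python
# Per-image prices in USD, as quoted in this article.
GPT_IMAGE_15_RANGE = (0.133, 0.200)
GPT_IMAGE_2_EXPECTED_RANGE = (0.15, 0.20)  # analyst estimate, unconfirmed
MINI_DISCOUNT = 0.80                       # "about 80% cheaper"


def monthly_cost(images_per_month: int, price_range):
    """Return the (low, high) monthly spend for a per-image price range."""
    low, high = price_range
    return round(images_per_month * low, 2), round(images_per_month * high, 2)


# Derive the Mini range from the quoted discount.
mini_range = tuple(round(p * (1 - MINI_DISCOUNT), 4) for p in GPT_IMAGE_15_RANGE)

volume = 10_000  # hypothetical workload
for label, price_range in [
    ("GPT Image 1.5", GPT_IMAGE_15_RANGE),
    ("GPT Image 1 Mini (derived)", mini_range),
    ("GPT Image 2 (estimate)", GPT_IMAGE_2_EXPECTED_RANGE),
]:
    low, high = monthly_cost(volume, price_range)
    print(f"{label}: ${low:,.2f} to ${high:,.2f} per month")
```

Swap in your own volume and whatever prices OpenAI actually publishes at launch.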

From DALL-E 3 to GPT Image 1.5, OpenAI's image generation costs have been on a steady downward trend.[4] The analyst range above would interrupt that trend at the standard tier, though GPT Image 2 could still extend it by introducing a new "turbo" pricing tier that offers faster generation at a slight quality trade-off.

For developers who are currently on the DALL-E API, the message is urgent. OpenAI has announced that DALL-E 2 and DALL-E 3 will be discontinued on May 12, 2026. This means all applications relying on the DALL-E API must migrate to the GPT Image series before this date.[4]

What It Doesn't Do (Yet): Known Limitations

The Duct Tape models are not perfect. The community has identified specific areas where GPT Image 2 still falls short.

The models reportedly still fail the Rubik's Cube reflection test, a standard stress test for spatial reasoning in image generation.[9]

Reports circulating on Russian-language Telegram channels describe aggressive content filtering that produces some bizarre results, including one case where the model allegedly rendered a map of Africa with "CIGER" instead of "Niger." This would track with OpenAI's historically aggressive approach to content safety in image generation.[5]

The key question is whether OpenAI will maintain the model's current quality at launch or dial it back for cost and safety reasons, a pattern the company has followed before.[7]

It's worth noting that what we're seeing is still a testing build. OpenAI has historically fine-tuned models between internal testing and public release, sometimes adjusting outputs for quality, cost efficiency, or safety considerations. The final GPT Image 2 may differ from what early testers have seen.[5]

How to Prepare: A Practical Guide for Creators and Developers

Whether you are a content creator, marketer, designer, or developer, the arrival of GPT Image 2 changes the landscape. Here is what you should be doing right now.

If you are a creator: Start learning prompt architecture for photorealistic image models. GPT Image 2 rewards specificity. The leaked examples that impressed people most were responses to specific, contextually grounded prompts. "Average engineer's screen" worked because it referenced something the model has concrete knowledge about. Abstract prompts like "futuristic interface" leave too much to interpretation. The more specific your reference point, the more the model's world knowledge kicks in.[3] Our guide to 10 AI prompts for YouTube thumbnails that stop the scroll covers the specific prompt structures that translate directly to this new model's strengths.

If you are a developer on the DALL-E API: Migrate to GPT Image 1.5 immediately. It is currently the most powerful image generation model from OpenAI, with Low quality costing only $0.009 per image. The API is compatible, so migrating to GPT Image 2 later will only require a simple model name swap. Waiting will only cause you to miss the migration window before DALL-E retires.[4]
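If the swap really is just a model name, the safest preparation is to keep that name in exactly one place. The sketch below builds request parameters without calling any API. The identifier `gpt-image-1.5`, the `IMAGE_MODEL` environment variable, and the parameter names are assumptions for illustration, so check OpenAI's documentation for the real values.

```python
import os

# Assumed identifier; confirm the real model name in OpenAI's documentation.
DEFAULT_IMAGE_MODEL = "gpt-image-1.5"


def build_image_request(prompt: str, size: str = "1024x1024", quality: str = "low") -> dict:
    """Build request parameters with the model name read from one place."""
    # IMAGE_MODEL is a hypothetical environment variable for this sketch.
    model = os.environ.get("IMAGE_MODEL", DEFAULT_IMAGE_MODEL)
    return {"model": model, "prompt": prompt, "size": size, "quality": quality}


request = build_image_request("Storefront sign that reads 'OPEN 24 HOURS'")
print(request["model"])
```

With this layout, moving to a newer model later means changing one environment variable or one constant, and the resulting dictionary can be handed to whichever image API client you use.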

If you are building a content pipeline: Use a multi-model approach. The Cinematic Video Generator and AI Image Generator inside Miraflow AI let you produce visual assets across multiple models from a single interface. When GPT Image 2 officially launches, you can adopt it without rebuilding your entire workflow.

If you want to try triggering GPT Image 2 right now: Open ChatGPT and attempt to generate images with complex text, UI elements, or specific real-world references. A large number of users on X have reported that when ChatGPT generates complex images containing significant amounts of text, UI elements, or product shots, it randomly switches to a noticeably different new model.[1] There are no guarantees, but complex prompts appear to increase the odds of being routed to the new model.

The Bigger Picture: Why This Leak Matters Beyond Image Generation

The Duct Tape leak is not just an AI image story. It is a signal about OpenAI's broader strategic direction.

Sora, OpenAI's video model, was shut down in March 2026. The shutdown freed up enormous computational resources that, according to industry sources, have been reallocated precisely to the development and training of GPT Image 2. The timing is no coincidence: Sora shuts down in March, GPT Image 2 appears on Arena in April. As we saw in the case of GPT-5.5 Spud, OpenAI is consolidating its resources around the most promising models.[9]

OpenAI has been operating under what CEO Sam Altman described as a "code red" posture since Google's Gemini 3 and Nano Banana Pro began eating into its market position in late 2025, and a strong Image 2 release would be a direct answer to that pressure.[7]

The pattern is clear: OpenAI is no longer trying to do everything. It is killing products that burn money (Sora), doubling down on products with viral consumer adoption (image generation), and integrating everything into a unified GPT-5 ecosystem. GPT Image 2 is the first major product to emerge from that strategic realignment — and if the Duct Tape samples are representative of the final quality, it is a convincing proof of concept.

The leaked model's performance suggests that AI-generated and real images are about to become nearly indistinguishable. For creators, designers, and businesses, this changes everything.[8]

When Will It Launch? Our Best Estimate

There is no confirmed GPT Image 2 release date. OpenAI has not announced one.[6]

But the convergence of evidence points to an imminent release. Testing three model variants at once (maskingtape, gaffertape, packingtape) suggests OpenAI is comparing final candidates, not early prototypes.[10] Given that it's already in A/B testing within ChatGPT, a broader rollout could happen quickly — potentially measured in weeks rather than months.[2]

The DALL-E retirement on May 12 creates a hard deadline. The Sora compute has been freed. The Arena testing followed the exact same pattern as GPT Image 1.5, which launched within weeks of its Arena appearance.

Our best estimate: expect an official announcement between late April and mid-May 2026, with the ChatGPT rollout happening first, followed by API access shortly after.

OpenAI's general pattern is: internal testing → selected user groups → ChatGPT rollout → API access. GPT Image 1 followed this rough path, and there's no reason to think GPT Image 2 will be different.[2]

Conclusion: The Rules Just Changed

The "Duct Tape" leak is one of those moments where the AI community collectively realizes the ground has shifted beneath them. It already makes Nano Banana Pro look outdated.[9] The combination of near-perfect text rendering, genuine world knowledge, photorealism that fools human observers, and a completely new architecture designed from the ground up — all wrapped in a model that some ChatGPT users are already accessing without knowing it — makes GPT Image 2 the most significant leap in AI image generation since the original Nano Banana moment in August 2025.

For creators, the takeaway is not to panic but to prepare. The tools are getting dramatically better, dramatically faster, and the creators who learn to harness them will have an exponential advantage over those who do not.

Start building your AI visual content pipeline today with Miraflow AI — generate images, cinematic videos, AI actor videos, YouTube Shorts, custom background music, and professional thumbnails from a single dashboard. The Duct Tape model is coming. The question is whether you'll be ready when it arrives.

Frequently Asked Questions

What is the "Duct Tape" AI model?

"Duct Tape" is the community nickname for three anonymous AI image generation models that appeared on LM Arena on April 4, 2026, under adhesive-tape-themed codenames: maskingtape-alpha, gaffertape-alpha, and packingtape-alpha. They are widely believed to be OpenAI's upcoming GPT Image 2 model, though OpenAI has not officially confirmed this.

Is GPT Image 2 officially released?

No. As of April 16, 2026, OpenAI has not officially announced or released GPT Image 2. The model was briefly available for testing on LM Arena before being pulled within hours, and some ChatGPT users are encountering it through silent A/B testing.

How can I try GPT Image 2 right now?

You cannot access it directly, but there is a chance of triggering it through ChatGPT's image generation feature. Community reports suggest that generating complex images containing significant text, UI elements, or detailed real-world references increases the probability of being served the new model. There is no guaranteed method.

How is GPT Image 2 different from GPT Image 1.5?

Based on leaked testing, GPT Image 2 features a completely new standalone architecture (not built on GPT-4o), near-perfect text rendering, dramatically improved world knowledge, elimination of the yellow color cast, photorealism indistinguishable from photographs, and expected support for native 4K resolution.

When will GPT Image 2 launch?

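Our best estimate is an announcement between late April and mid-May 2026, though some analyst estimates extend to May–June 2026. The convergence of A/B testing in ChatGPT, the DALL-E retirement deadline of May 12, and the pattern from the GPT Image 1.5 launch suggests an announcement could come within two to four weeks of the Arena testing. OpenAI has not confirmed a date.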

Does GPT Image 2 beat Nano Banana Pro?

In blind Arena comparisons during the brief testing window, community testers reported that the tape models outperformed Nano Banana Pro in realism, text rendering, and world knowledge simultaneously. However, Google has since released Nano Banana 2, and the final GPT Image 2 may differ from the testing build. The models still fail certain spatial reasoning tests like the Rubik's Cube reflection test.

How much will GPT Image 2 cost?

GPT Image 1.5 costs $0.133 to $0.200 per image via the API. Industry analysts expect GPT Image 2 to land around $0.15 to $0.20 per image, with potential new pricing tiers including a faster "turbo" option.
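To see what those per-image figures mean for a real workload, here is a minimal Python sketch that turns a price range into a batch cost range. The GPT Image 1.5 numbers are the ones quoted above. The GPT Image 2 numbers are the analyst expectation, not confirmed pricing. The function name `batch_cost_range` and the batch size of 1,000 are hypothetical, chosen only for illustration.

```python
def batch_cost_range(num_images: int, low_per_image: float, high_per_image: float) -> tuple[float, float]:
    """Return the (low, high) total cost in USD for generating num_images images."""
    if num_images < 0:
        raise ValueError("num_images must be non-negative")
    # Round to cents so floating-point noise does not leak into the totals.
    return (round(num_images * low_per_image, 2), round(num_images * high_per_image, 2))


# Per-image price ranges in USD, taken from the figures quoted in this article.
GPT_IMAGE_1_5 = (0.133, 0.200)
GPT_IMAGE_2_EXPECTED = (0.15, 0.20)  # analyst expectation only, not official pricing

BATCH_SIZE = 1000  # hypothetical monthly volume

current = batch_cost_range(BATCH_SIZE, *GPT_IMAGE_1_5)
expected = batch_cost_range(BATCH_SIZE, *GPT_IMAGE_2_EXPECTED)

print(f"GPT Image 1.5: ${current[0]:,.2f} to ${current[1]:,.2f}")
print(f"GPT Image 2 (expected): ${expected[0]:,.2f} to ${expected[1]:,.2f}")
```

At 1,000 images, the top of both ranges is the same $200. If the analyst estimate holds, the main change is at the low end, which moves from $133 to $150.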

What happened to DALL-E?

OpenAI announced that both DALL-E 2 and DALL-E 3 will be shut down on May 12, 2026. The GPT Image model family (GPT Image 1, 1.5, and eventually 2) is the official replacement. Developers on the DALL-E API need to migrate before the shutdown date.

What does the Sora shutdown have to do with GPT Image 2?

OpenAI shut down its video generation tool Sora on March 24, 2026, citing unsustainable costs ($15M/day in inference). The massive GPU capacity freed by the shutdown appears to have been redirected toward GPT Image 2 development, with the tape models appearing on Arena just 11 days later.

References

- Full Interpretation of GPT Image 2 Grayscale Leak: 3 Codename Models Appear in Arena, 5 Major Capability Upgrades, and Trigger Verification Techniques - Apiyi.com Blog

- GPT Image 1.5 vs Nano Banana Pro | AI Image Models Comparison | getimg.ai

- What Is GPT Image 2? Everything We Know About OpenAI's Next Image Model | MindStudio

- GPT Image 2 vs Nano Banana Pro: The Ultimate AI Image Generator Showdown (2025)

- GPT Image 2 Just Leaked! | Eachlabs

- Nano Banana vs. ChatGPT Image Generator - Which One is Better? - LogoAI

- Preview of GPT Image 2: 3 Grayscale Codenames Exposed and a Comprehensive Interpretation of 5 Major Expected Upgrades - Apiyi.com Blog

- Nano Banana 2 vs GPT Image 1.5: API Cost & Quality Comparison (2026) | LaoZhang AI Blog

- GPT-Image-2 Leaked: OpenAI's Unreleased Model Briefly Appeared Online — Here's What We Know - Frontierbeat

- Aihola

- GPT Image 2 Will Be Released: Everything We Know About It | Dzine Blog

- GPT Image 1.5 vs. Nano Banana Pro vs. FLUX.2 maxmaxmax | AI Hub

- GPT Image 2: Rumours, Leaks & Release Date (2026) | Summary | getimg.ai

- ChatGPT's NEW Image Model vs. Nano Banana: 9 Tests + 82 Image Prompts Across Advertising, Board Slides, and Education + My Complete Readout

- OpenAI tests next-gen Image V2 model on ChatGPT and LM Arena

- Nano Banana vs ChatGPT Image (2025): Realism, Speed, & Policy Guide

- What Will GPT Image 2 Be? Predictions Based on OpenAI's Trajectory | WaveSpeedAI Blog

- OpenAI GPT-Image-2 Leaked: Features, Release Date & What It Means | Oimi AI

- GPT Image 1.5 vs Gemini Nano Banana Pro: Which Is Better for Professional Work? - Bind AI

- GPT Image 2: Free AI Image Generator — Photorealistic Output

- GPT Image 2 Leaked: Prompts and Workflow Guide 2026

- GPT Image 2: OpenAI's New Model Leaked on Chatbot Arena

- GPT Image 2 Is Already Leaking — Here's What's Coming and How to Get Ready

- Three Image Generation Models Named Maskingtape, Gaffertape and Packingtape Create Buzz On Arena, Rumoured To Be OpenAI's GPT-Image 2

- OpenAI’s New GPT Image 02 Leak: Everything You Should Know

- chatgpt-image-latest vs Nano Banana 2 API pricing comparison: generating 1 image costs 3-10 times more - Apiyi.com Blog

- GPT Image 2 Just Leaked: Everything We Know (April 2026)

- can on X: "GPT Image V2 in on LM Arena 🖼️ It has three variations; Duct Tape 1, 2 and 3 Duct Tape 2 and 3 looks better. Thursday release? 👀 https://t.co/BkW8qR7F2P" / X

- I Tested ChatGPT Images (GPT Image 1.5) vs Nano Banana Pro.