GPT-5.5 Explained: Everything You Need to Know About OpenAI's Most Powerful Model

Written by Jay Kim

OpenAI released GPT-5.5 on April 23, 2026. It is the company's first fully retrained base model since GPT-4.5. It scores 82.7% on Terminal-Bench 2.0 (vs 69.4% for Claude Opus 4.7), costs $5/$30 per million input/output tokens (2x GPT-5.4), and helped discover a new mathematical proof about Ramsey numbers. This is the complete breakdown: benchmarks, pricing, safety classification, API details, and how it compares to Claude Opus 4.7 and Gemini 3.1 Pro.

OpenAI didn't just release another incremental update. On April 23, 2026 — less than seven weeks after shipping GPT-5.4 — the company dropped GPT-5.5, codenamed "Spud," and it represents something fundamentally different from the rapid-fire model iterations that defined the last six months of AI development.

GPT-5.5 is OpenAI's most capable model to date and the first fully retrained base model since GPT-4.5.[10] Every GPT-5.x release between them — 5.1, 5.2, 5.3, 5.4 — was a post-training iteration on top of the same base.[9] GPT-5.5 is not. The architecture, pretraining corpus, and agent-oriented objectives have all been reworked.[9]

That distinction matters enormously. While GPT-5.1 through 5.4 each delivered genuine improvements — better coding, stronger reasoning, enhanced computer use — they were all variations on the same underlying foundation. GPT-5.5 is a new foundation entirely, and the benchmark results, early user reactions, and pricing decisions all reflect that.

This article provides the most comprehensive breakdown available: what GPT-5.5 actually does differently, exactly how it performs against Claude Opus 4.7 and Gemini 3.1 Pro, what the pricing means for developers and businesses, the safety implications that delayed the API launch, and what it signals about where AI is heading in the second half of 2026.

Why GPT-5.5 Matters: The Context Behind the Release

To understand why GPT-5.5 is significant, you need to understand the competitive pressure that shaped it.

Coming about six weeks after GPT-5.4, the release is an extremely fast turnaround. It underscores how fiercely frontier AI labs are competing for enterprise customers, and how their models increasingly evolve through continuous, incremental updates.[4] It also arrived just one week after competitor Anthropic launched its latest model.[4]

The timing is no coincidence. Anthropic released Claude Opus 4.7 just a week before GPT-5.5's launch, and Claude Mythos Preview, with its controversial cybersecurity capabilities, had been dominating AI discourse for weeks. OpenAI no doubt hopes the release, and the usage figures it published alongside it, will undercut a narrative building across social media: that OpenAI has lost traction among consumers and has fallen behind its arch-rival Anthropic in the race for enterprise customers.[4]

OpenAI backed up its release with scale metrics that are difficult to argue with. The company said there are 4 million active Codex users and 9 million paying business users on ChatGPT, and that ChatGPT has more than 900 million weekly active users and over 50 million subscribers.[4] Those numbers, confirmed across multiple reporting outlets, make ChatGPT the most widely used AI product on Earth by a significant margin: it holds 62.5% of the AI-tools market.[4]

The revenue picture is equally staggering. OpenAI generated a total of $20 billion in sales in 2025,[4] its annualized revenue crossed $25 billion by the end of February 2026,[5] and its monthly revenue reached $2 billion as of March 2026.[4]

All of this context matters because GPT-5.5 isn't just a technical achievement — it's a strategic move designed to reassert OpenAI's position as the frontier leader at a moment when that leadership was genuinely contested.

What GPT-5.5 Actually Is: The Core Capabilities

OpenAI's positioning for GPT-5.5 is deliberately different from previous launches. Rather than emphasizing a single breakthrough capability, the company is framing this as a model built for sustained, autonomous work.

OpenAI describes GPT-5.5 as its "smartest model yet — faster, more capable, and built for complex tasks like coding, research, and data analysis across tools."[3] GPT‑5.5 understands what you're trying to do faster and can carry more of the work itself. It excels at writing and debugging code, researching online, analyzing data, creating documents and spreadsheets, operating software, and moving across tools until a task is finished. Instead of carefully managing every step, you can give GPT‑5.5 a messy, multi-part task and trust it to plan, use tools, check its work, navigate through ambiguity, and keep going.[3]

Greg Brockman, OpenAI's president and co-founder, was characteristically direct about the model's positioning. "What is really special about this model is how much more it can do with less guidance," Brockman said. "It can look at an unclear problem and figure out just what needs to happen next. It really, to me, feels like it's setting the foundation for how we're going to use computers, how we're going to do computer work going forward."[1]

Brockman called GPT-5.5 "a new class of intelligence" and "a big step towards more agentic and intuitive computing." He added that GPT-5.5 is a "faster, sharper thinker for fewer tokens" compared to 5.4, and can handle multi-step workflows more autonomously with less user input.[4]

The Four Pillars of Improvement

OpenAI identifies four areas where GPT-5.5 delivers the strongest gains: agentic coding, computer use, knowledge work, and early scientific research. These are all areas where progress depends on reasoning across context and taking action over time.[3]

These aren't arbitrary categories. They map directly to the work that enterprise customers — the primary revenue driver for frontier AI labs — need AI to perform. Agentic coding means software engineering tasks that require planning, multi-file changes, and iterative debugging. Computer use means operating software interfaces, navigating browsers, and interacting with real applications. Knowledge work means analyzing data, creating documents and spreadsheets, and completing professional tasks across 44 different occupations. Early scientific research means persisting through multi-step experimental workflows in domains like genetics, bioinformatics, and mathematics.

The Efficiency Breakthrough

Perhaps the most commercially significant claim about GPT-5.5 is not raw intelligence but efficiency. OpenAI says GPT‑5.5 delivers its step up in intelligence without compromising on speed: larger, more capable models are often slower to serve, but GPT‑5.5 matches GPT‑5.4 per-token latency in real-world serving.[3]

It also uses significantly fewer tokens to complete the same Codex tasks, making it more efficient as well as more capable.[3] In plain terms, it is smarter without being slower, and it uses fewer tokens to reach the same answer.[10]

Third-party benchmarker Artificial Analysis measured roughly a 40% reduction in total tokens per Intelligence Index run.[9] For developers running AI at scale, that gain has direct cost implications even though the per-token price has increased.

For agent builders, the effect compounds. Fewer tokens per task means lower cost per task, shorter wall-clock time per task, and more tasks per dollar of budget. It also makes longer agent runs economically feasible, and the cost of long runs is one of the main things holding back complex workflows today.[10]

The Benchmark Picture: Where GPT-5.5 Wins, Ties, and Trails

OpenAI published a detailed benchmark table at launch comparing GPT-5.5 against Claude Opus 4.7, GPT-5.4, and Gemini 3.1 Pro. Unlike many model launches, it included benchmarks where GPT-5.5 trails competitors, a sign of genuine confidence.[6]

Where GPT-5.5 Dominates

Terminal-Bench 2.0 (Agentic Command-Line Workflows): This is GPT-5.5's headline benchmark victory and its most decisive win. Terminal-Bench 2.0 tests real command-line workflows that require planning, iteration, and tool coordination in a sandboxed terminal environment, and GPT-5.5 reaches a state-of-the-art 82.7%.[3] That is a clear lead over GPT-5.4 (75.1%), Anthropic's Claude Opus 4.7 (69.4%), and Google's Gemini 3.1 Pro (68.5%).[8]

For developers building unattended terminal agents, pipeline runners, or DevOps automation, this benchmark matters more than SWE-bench. No publicly available model is close.[6]

GDPval (Knowledge Work Across 44 Occupations): On GDPval, which tests whether agents can produce well-specified, economically valuable knowledge work across 44 occupations, GPT‑5.5 set a record with 84.9%.[3] Notably, the standard version of GPT-5.5 beat both GPT-5.5 Pro and Claude Opus 4.7.[6]

OSWorld-Verified (Computer Use): On OSWorld-Verified, which measures whether a model can operate real computer environments on its own, GPT-5.5 reaches 78.7%,[3] narrowly ahead of Anthropic's Opus 4.7 (78%) and up from GPT-5.4's 75%.[8]

FrontierMath (Advanced Mathematics): One of the most difficult benchmarks in OpenAI's test suite is FrontierMath Tier 4, which comprises dozens of postdoctoral-level math problems that can take a human expert days to solve. GPT-5.5 Pro scored 39.6%, nearly double the 22.9% that Claude Opus 4.7 achieved.[6]

Publicly Available Model Crown: Among models the general public can access, which excludes Anthropic's restricted Claude Mythos Preview, GPT-5.5 has retaken the crown for OpenAI, achieving state-of-the-art results on 14 benchmarks.[4]

Where GPT-5.5 Trails

The picture is not uniformly positive. GPT-5.5 has clear weaknesses that matter for specific use cases.

SWE-Bench Pro (GitHub Issue Resolution): Claude Opus 4.7 leads on SWE-bench Pro (64.3% vs 58.6%) for complex multi-file GitHub issue resolution.[2] GPT-5.5 leads on Terminal-Bench 2.0 (82.7%) for command-line workflows and uses fewer tokens per task in Codex. For pure code quality on hard problems, Opus 4.7 wins. For agentic coding workflows with tool coordination, GPT-5.5 has the edge.[2]

Humanity's Last Exam (Academic Reasoning): HLE (Humanity's Last Exam, no tools) shows Claude Opus 4.7 at 46.9% versus GPT-5.5's 41.4%. This benchmark tests expert-level cross-domain reasoning without tool assistance. Gemini 3.1 Pro (44.4%) and Claude Opus 4.7 both outperform GPT-5.5 here, suggesting that on raw knowledge-recall-heavy academic reasoning, OpenAI's model has not yet fully closed the gap.[6]

MCP-Atlas (Multi-Tool Orchestration): Claude Opus 4.7 scores 79.1% versus GPT-5.5's 75.3%. For teams heavily invested in multi-tool orchestration via the Model Context Protocol, Claude's lead on this benchmark suggests better tool-call reliability in complex, chained scenarios.[6]

Multilingual Performance: On multilingual Q&A (MMMLU), GPT-5.5 scores 83.2%, noticeably behind Opus 4.7 (91.5%) and Gemini 3.1 Pro (92.6%).[9]

The Three-Way Frontier Summary

The benchmark landscape in April 2026 is the most competitive it has ever been. Three frontier models, three different strengths. GPT-5.5 leads agentic workflows (84.9% GDPval, 78.7% OSWorld). Gemini 3.1 Pro leads reasoning (77.1% ARC-AGI-2, 94.3% GPQA). Claude Mythos leads coding (93.9% SWE-bench).[1]

The pattern that emerges is consistent: GPT-5.5 dominates agentic and autonomous workflows. Claude Mythos dominates coding and security. Gemini 3.1 Pro dominates reasoning, long-context, and cost-sensitive workloads. There is no single "best" model — there's only the best model for your specific task distribution.[1]

Agentic Coding in Codex: The Headline Use Case

If there's a single use case that defines GPT-5.5's identity, it's agentic coding inside OpenAI's Codex platform. GPT‑5.5 is OpenAI's strongest agentic coding model to date.[3]

The improvements aren't just about benchmark numbers. Senior engineers who tested the model said GPT‑5.5 was noticeably stronger than GPT‑5.4 and Claude Opus 4.7 at reasoning and autonomy, catching issues in advance and predicting testing and review needs without explicit prompting. In one case, an engineer asked it to re-architect a comment system in a collaborative markdown editor and returned to a 12-diff stack that was nearly complete. Others said they needed surprisingly little implementation correction and felt more confident in GPT‑5.5's plans compared with GPT‑5.4.[3]

The practical impact at NVIDIA, one of GPT-5.5's earliest enterprise testers, was dramatic. Over 10,000 NVIDIANs — across engineering, product, legal, marketing, finance, sales, HR, operations and developer programs — are already using GPT-5.5-powered Codex to achieve, in their words, "mind-blowing" and "life-changing" results. NVIDIA engineers have had access to GPT-5.5 through the Codex app for a few weeks, and the gains are measurable.[4] Debugging cycles that once stretched across days are closing in hours. Experimentation that previously required weeks is turning into overnight progress in complex, multi-file codebases. Teams are shipping end-to-end features from natural-language prompts, with stronger reliability and fewer wasted cycles than earlier models.[4]

More than 85% of OpenAI employees now use Codex weekly across departments, including engineering, finance, and marketing. In one example, the communications team used GPT-5.5 to process six months of speaking request data. The system built a scoring and risk framework and helped automate low-risk approvals.[7]

Michael Truell, co-founder and CEO at Cursor — one of the most popular AI-powered code editors — provided an endorsement that carries significant weight among developers: "GPT-5.5 is noticeably smarter and more persistent than GPT-5.4, with stronger coding performance and more reliable tool use."[10]

The Codex integration goes beyond just writing code. With GPT-5.5, Codex now gets more of the job done across the browser, files, docs, and your computer. OpenAI has expanded browser use so Codex can interact with web apps, test flows, click through pages, capture screenshots, and iterate on what it sees.[10] What makes this release particularly significant for agentic coding is the convergence of three things: a more capable base model, a mature Codex platform with SDK access, and OpenAI's strategic push toward a unified "super-app" that merges ChatGPT, Codex, and the Atlas browser agent into a single interface. The Codex app now merges designing, building, shipping, and maintaining software into one continuous workflow — and GPT-5.5 is the engine that makes that practical.[2]

Scientific Research: From Tool to Co-Scientist

OpenAI's scientific research claims for GPT-5.5 are among the most ambitious it has made at any model launch. The launch post says the model is better not just at answering hard questions, but at persisting through research loops: exploring an idea, gathering evidence, testing assumptions, interpreting results, and deciding what to try next.[5]

The most striking evidence is a concrete mathematical discovery. An internal version of GPT‑5.5 with a custom harness helped discover a new proof about Ramsey numbers, one of the central objects in combinatorics. Combinatorics studies how discrete objects fit together: graphs, networks, sets, and patterns. Ramsey numbers ask, roughly, how large a network has to be before some kind of order is guaranteed to appear. Results in this area are rare and often technically difficult. Here, GPT‑5.5 found a proof of a longstanding asymptotic fact about off-diagonal Ramsey numbers, later verified in Lean.[3]

Ramsey numbers are notoriously hard to compute. Exact values are known for only a handful of small cases, and each new result tends to take decades of work. A verified contribution in this area suggests GPT-5.5 is already contributing to active research.[4]
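
To make "how large a network has to be before order appears" concrete: R(3,3) is the smallest number of vertices n such that every red/blue coloring of the edges of the complete graph K_n contains a triangle whose three edges share one color. The brute-force Python sketch below confirms the classic value R(3,3) = 6. It illustrates the definition only; it is not the off-diagonal result GPT-5.5 worked on or the method it used, and the function names are illustrative. Brute force collapses quickly beyond tiny cases, because K_n has n(n-1)/2 edges and therefore 2^(n(n-1)/2) colorings to check.

```python
from itertools import combinations, product


def has_mono_triangle(n, coloring):
    """Return True if some triangle in K_n has all three edges the same color.

    `coloring` maps each edge (i, j) with i < j to 0 (red) or 1 (blue).
    """
    for a, b, c in combinations(range(n), 3):
        if coloring[(a, b)] == coloring[(a, c)] == coloring[(b, c)]:
            return True
    return False


def every_coloring_forces_triangle(n):
    """Brute force: does every 2-coloring of K_n contain a one-color triangle?"""
    edges = list(combinations(range(n), 2))
    for colors in product((0, 1), repeat=len(edges)):
        coloring = dict(zip(edges, colors))
        if not has_mono_triangle(n, coloring):
            return False  # found a coloring that avoids any one-color triangle
    return True


def ramsey_3_3():
    """Smallest n for which every 2-coloring of K_n forces a one-color triangle."""
    n = 1
    while not every_coloring_forces_triangle(n):
        n += 1
    return n


if __name__ == "__main__":
    print(ramsey_3_3())  # 6 (K_6 has 2**15 = 32,768 colorings to check)
```

K_5 still admits a coloring with no single-color triangle (color a 5-cycle red and its complementary 5-cycle blue), which is why the search only stops at six vertices.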

On the biology front, GPT‑5.5 achieved leading performance among models with published scores on BixBench, a benchmark designed around real-world bioinformatics and data analysis. OpenAI says the model's scientific capabilities are now strong enough to meaningfully accelerate progress at the frontiers of biomedical research as a bona fide co-scientist.[3]

Mark Chen, OpenAI's chief research officer, was direct about the ambition. Chen said that GPT-5.5 was better at navigating computer work than its predecessors, and also said that the model "shows meaningful gains on scientific and technical research workflows," noting that the company feels it could really "help expert scientists make progress." Chen also said it could assist with drug discovery.[2]

Pricing: The 2x Increase and the Efficiency Argument

The pricing structure for GPT-5.5 represents OpenAI's most aggressive per-token price increase in the GPT-5 series.

For API developers, gpt-5.5 will soon be available in the Responses and Chat Completions APIs at $5 per 1M input tokens and $30 per 1M output tokens, with a 1M context window. Batch and Flex pricing are available at half the standard API rate, while Priority processing is available at 2.5x the standard rate. OpenAI will also release gpt-5.5-pro in the API for even higher accuracy, priced at $30 per 1M input tokens and $180 per 1M output tokens.[3]

GPT-5.4 was $2.50 / $15. GPT-5.5 is $5 / $30. On raw per-token cost that's a 2× jump — the largest single-release price increase OpenAI has made in the GPT-5.x series.[9]

The Cost-Per-Task Argument

OpenAI's defense of the price increase rests on token efficiency. While GPT‑5.5 is priced higher than GPT‑5.4, it is both more intelligent and much more token efficient. In Codex, OpenAI has carefully tuned the experience so GPT‑5.5 delivers better results with fewer tokens than GPT‑5.4 for most users.[3]

In practical terms, the math works out to a more modest increase than the headline 2x suggests. Suppose a production team runs Codex at scale with 100 million output tokens per month on GPT-5.4; at $15 per million, that costs $1,500. If GPT-5.5 handles the same task volume with 40% fewer tokens (60M output tokens) at $30 per million, the bill is $1,800. The cost increase is 20%, not 100%. For teams where Codex's higher task completion rate means fewer retries, the efficiency argument is even stronger, since failed tasks are pure cost with no output.[6]
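
The arithmetic above is easy to rerun with your own numbers. The Python sketch below reproduces the example under the same assumptions (output tokens only, and a 40% token reduction that may or may not hold for your workload). It also computes the break-even point: with a straight 2x price increase, GPT-5.5 must save at least 50% of tokens per task before it becomes cheaper than GPT-5.4. The function names are illustrative.

```python
def monthly_cost(output_tokens, price_per_million):
    """Dollar cost of a month's output tokens at a per-million-token price."""
    return output_tokens / 1_000_000 * price_per_million


def break_even_reduction(old_price, new_price):
    """Fraction of tokens the new model must save to keep cost per task flat."""
    return 1 - old_price / new_price


# Assumptions taken from the example: output tokens only, 40% fewer on GPT-5.5.
GPT_5_4_OUTPUT_PRICE = 15.0   # $ per 1M output tokens
GPT_5_5_OUTPUT_PRICE = 30.0   # $ per 1M output tokens
TOKEN_REDUCTION = 0.40

old_tokens = 100_000_000
new_tokens = old_tokens * (1 - TOKEN_REDUCTION)

old_bill = monthly_cost(old_tokens, GPT_5_4_OUTPUT_PRICE)
new_bill = monthly_cost(new_tokens, GPT_5_5_OUTPUT_PRICE)
increase = new_bill / old_bill - 1

print(f"GPT-5.4: ${old_bill:,.0f}  GPT-5.5: ${new_bill:,.0f}  change: {increase:+.0%}")
print(f"Break-even token reduction: "
      f"{break_even_reduction(GPT_5_4_OUTPUT_PRICE, GPT_5_5_OUTPUT_PRICE):.0%}")
```

Plugging in your own measured token reduction, rather than the headline 40%, is what determines whether the upgrade costs more or less per task.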

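However, the efficiency claim comes with a caveat. The Codex token-efficiency figure is self-reported: OpenAI has not published the benchmark scaffold or token-count data from the Codex task comparison.[6] Artificial Analysis's independent 40% measurement comes from its own Intelligence Index runs rather than Codex tasks, so it does not directly confirm the Codex numbers.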

Pricing Compared to Competitors

The competitive pricing picture gives context. GPT-5.5 is cheaper than Opus 4.7 at equivalent intelligence. Artificial Analysis notes: "GPT-5.5 (medium) scores the same as Claude Opus 4.7 (max) on our Intelligence Index at one quarter of the cost (~$1,200 vs $4,800)."[9]

Gemini 3.1 Pro is the clear winner on raw pricing. At $1.25 per million input tokens and $10 per million output tokens, it's 2-5x cheaper than the competition. Combined with its 2M token context window, Gemini offers the best cost-per-capability ratio for high-volume workloads that don't require the absolute best coding or agentic performance.[1]
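
Because input and output tokens are priced differently, where a workload lands in that "2-5x cheaper" range depends on its input-to-output mix. The sketch below compares per-request cost using only the list prices quoted in this article (GPT-5.4 at $2.50/$15, GPT-5.5 at $5/$30, Gemini 3.1 Pro at $1.25/$10). The dictionary keys are labels rather than confirmed API model IDs, the two workloads are made up, and the calculation ignores reasoning tokens, caching, batch discounts, and any other pricing adjustments.

```python
# List prices quoted in this article, in dollars per 1M tokens: (input, output).
PRICES = {
    "gpt-5.4": (2.50, 15.00),
    "gpt-5.5": (5.00, 30.00),
    "gemini-3.1-pro": (1.25, 10.00),
}


def request_cost(model, input_tokens, output_tokens):
    """Dollar cost of one request at list prices (no caching or discounts)."""
    in_price, out_price = PRICES[model]
    return (input_tokens * in_price + output_tokens * out_price) / 1_000_000


def compare(input_tokens, output_tokens, baseline="gemini-3.1-pro"):
    """Print each model's cost and its multiple of the baseline model's cost."""
    base = request_cost(baseline, input_tokens, output_tokens)
    for model in PRICES:
        cost = request_cost(model, input_tokens, output_tokens)
        print(f"{model:15s} ${cost:.4f}  ({cost / base:.1f}x {baseline})")


# Hypothetical workloads: an input-heavy document query and an output-heavy draft.
compare(input_tokens=50_000, output_tokens=1_000)
compare(input_tokens=2_000, output_tokens=8_000)
```

On these two mixes GPT-5.5 comes out roughly 3.9x and 3.0x the cost of Gemini 3.1 Pro, because Gemini's discount is larger on input (4x) than on output (3x).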

Full Pricing Breakdown

Here's the complete pricing structure for GPT-5.5 as of April 24, 2026:

GPT-5.5 Standard API:

Input: $5.00 per million tokens. Output: $30.00 per million tokens. Context window: 1M tokens.

GPT-5.5 Pro API:

Input: $30.00 per million tokens. Output: $180.00 per million tokens. Context window: 1M tokens. On both variants, reasoning tokens count against the context window and are billed as output.[9]

Batch & Flex Pricing: Half the standard API rate.

Priority Processing: 2.5x the standard rate — GPT-5.5 is also available in Fast mode, generating tokens 1.5x faster for 2.5x the cost.[10]
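
The API pricing above can be turned into a quick per-request estimator. The sketch below follows the breakdown in billing reasoning tokens at the output rate; it additionally assumes that the Batch/Flex (0.5x) and Priority (2.5x) multipliers apply equally to input and output prices. The function name, tier labels, and example request are illustrative rather than API parameters, so check OpenAI's pricing page before relying on the numbers.

```python
# GPT-5.5 list prices from the breakdown above, in dollars per 1M tokens.
BASE_PRICES = {
    "gpt-5.5": {"input": 5.00, "output": 30.00},
    "gpt-5.5-pro": {"input": 30.00, "output": 180.00},
}

# Assumed to scale input and output prices alike.
PROCESSING_MULTIPLIER = {
    "standard": 1.0,
    "batch": 0.5,
    "flex": 0.5,
    "priority": 2.5,
}


def estimate_cost(model, input_tokens, visible_output_tokens, reasoning_tokens=0,
                  processing="standard"):
    """Estimate a request's cost; reasoning tokens are billed like output tokens."""
    prices = BASE_PRICES[model]
    multiplier = PROCESSING_MULTIPLIER[processing]
    billed_output = visible_output_tokens + reasoning_tokens
    dollars = (input_tokens * prices["input"]
               + billed_output * prices["output"]) / 1_000_000
    return dollars * multiplier


# Example: a 20K-token prompt, a 2K-token answer, and 6K hidden reasoning tokens.
for mode in ("standard", "batch", "priority"):
    print(mode, round(estimate_cost("gpt-5.5", 20_000, 2_000, 6_000, mode), 4))
```

In this made-up request, the hidden reasoning tokens account for more than half of the standard-rate bill ($0.18 of $0.34), which is why reasoning effort is the first knob to check when costs climb.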

ChatGPT Subscription Tiers:

GPT-5.5 is rolling out to Plus, Pro, Business, and Enterprise users in ChatGPT and Codex.[10] GPT‑5.5 Pro, designed for even harder questions and higher-accuracy work, is available to Pro, Business, and Enterprise users.[10] In Codex, GPT‑5.5 is available for Plus, Pro, Business, Enterprise, Edu, and Go plans with a 400K context window.[10]

Developer Cost Optimization Strategies

For developers concerned about the price increase, several mitigation strategies are available. First, route requests: a 10-line router that sends each request to the cheapest model or processing tier that can handle it saves more than any prompt-level optimization. Second, use Batch for anything offline, such as evaluations, backfills, and nightly report generation, all at 50% off.[9] Third, track reasoning-token usage in each response and alert on it: the billing surprise on GPT-5.5 is almost always reasoning-token spend at high reasoning effort.[9]
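
Here is one way the router and reasoning-token tips might look in Python. The routing rules, the 0.7 alert threshold, and the task fields (`offline`, `hard`) are hypothetical choices for illustration, and routing routine work to GPT-5.4 assumes that model ID is available to you. The usage dictionary mirrors the shape of the Responses API usage object, which reports reasoning tokens under `output_tokens_details.reasoning_tokens`; in production you would read it from each response rather than hard-code it.

```python
from dataclasses import dataclass


@dataclass
class Task:
    prompt: str
    offline: bool = False   # can wait for an asynchronous Batch job
    hard: bool = False      # multi-step agentic work that needs the strongest model


def route(task):
    """Hypothetical routing policy: returns (model, processing tier)."""
    if task.offline:
        return ("gpt-5.5", "batch")     # evals, backfills, nightly reports: 50% off
    if task.hard:
        return ("gpt-5.5", "standard")
    return ("gpt-5.4", "standard")      # assumed cheaper model for routine requests


def reasoning_alert(usage, max_share=0.7):
    """Flag a response whose reasoning tokens dominate its billed output.

    `usage` follows the Responses API shape, e.g.
    {"output_tokens": 9000, "output_tokens_details": {"reasoning_tokens": 8000}}.
    """
    output = usage.get("output_tokens", 0)
    reasoning = usage.get("output_tokens_details", {}).get("reasoning_tokens", 0)
    share = reasoning / output if output else 0.0
    return share > max_share, share


print(route(Task("Summarize yesterday's eval results", offline=True)))
print(reasoning_alert({"output_tokens": 9000,
                       "output_tokens_details": {"reasoning_tokens": 8000}}))
```

Alerting on the reasoning share, rather than on raw token counts, keeps the check meaningful across requests of very different sizes.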

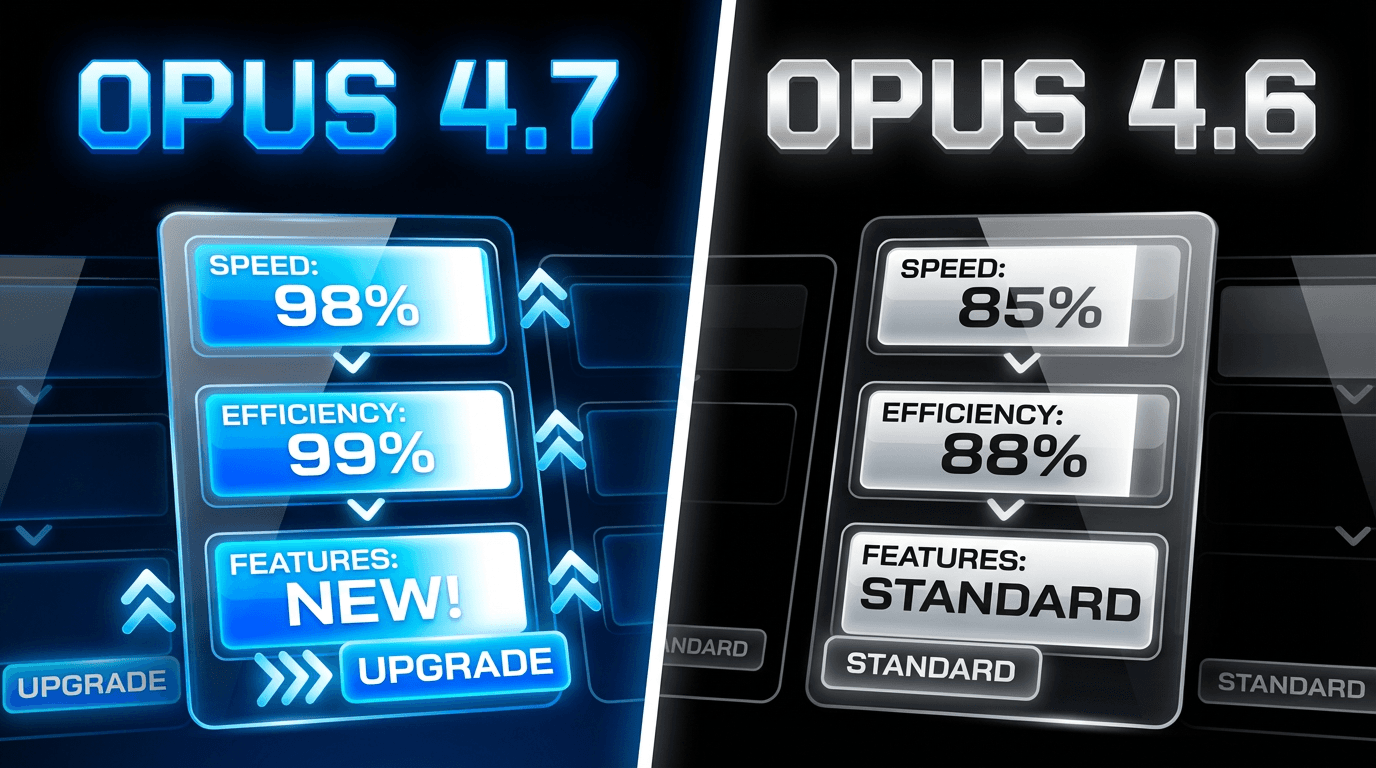

GPT-5.5 vs. Claude Opus 4.7: The Head-to-Head

The most direct competitive comparison is between GPT-5.5 and Claude Opus 4.7, which Anthropic released just a week earlier.

The new model beats Anthropic's Opus 4.7 on most standard benchmarks.[10] But "most" is doing important work in that sentence. The reality is considerably more nuanced.

Where GPT-5.5 Wins

The agentic workflow benchmarks clearly favor GPT-5.5. Terminal-Bench 2.0 shows a 13-point lead (82.7% vs 69.4%). GDPval shows GPT-5.5 at 84.9% against lower scores from Opus 4.7. On CyberGym, GPT-5.5 scores 81.8%, compared to GPT-5.4's 79.0% and Claude Opus 4.7's 73.1%.[6]

Where Claude Opus 4.7 Wins

Opus 4.7 leads on SWE-bench Pro (64.3 vs 58.6), HLE no tools (46.9 vs 41.4) and HLE with tools (54.7 vs 52.2).[8] The first two gaps, 5.7 and 5.5 points, are not marginal, and Claude's lead on SWE-bench Pro matters in particular because it's the benchmark most closely associated with real-world software engineering productivity.

The Practical Distinction

The benchmark comparison reveals a clear philosophical split. In real usage: if the task is "help me understand this complex doc and rewrite it to be more readable," Claude often feels very smooth. If the task is "inspect code, locate issue, propose patch, add tests, and produce launch checklist," GPT-5.5 often feels more execution-driven.[9]

Both GPT-5.5 and Claude Opus 4.7 support 1 million token context windows at standard API pricing. Both support 128K max output tokens. For most practical purposes, context window size is a draw.[2]
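
A quick way to sanity-check whether a long request will fit is to budget the prompt plus the reserved output against the window. The sketch below uses the figures quoted in this article: the 1M-token API window, the 128K maximum output, and the 400K window for GPT-5.5 in Codex. Because reasoning tokens count against the window (as the pricing breakdown notes), the output reservation has to cover them too. Applying the 128K cap in Codex is an assumption, and the helper is illustrative.

```python
WINDOWS = {"api": 1_000_000, "codex": 400_000}   # context windows quoted in this article
MAX_OUTPUT = 128_000                             # max output tokens quoted above


def fits(prompt_tokens, max_output_tokens, surface="api"):
    """Return (ok, headroom): do prompt + reserved output fit in the window?

    The output reservation must also cover any reasoning tokens.
    """
    headroom = WINDOWS[surface] - (prompt_tokens + max_output_tokens)
    ok = max_output_tokens <= MAX_OUTPUT and headroom >= 0
    return ok, headroom


print(fits(850_000, 100_000))            # fits in the 1M API window
print(fits(850_000, 100_000, "codex"))   # far too large for a 400K window
```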

The Mythos Question

The elephant in every GPT-5.5 comparison is Anthropic's Claude Mythos Preview — the model that's been restricted due to cybersecurity concerns. Claude Mythos Preview appears to lead cleanly on six of nine overlapping rows, especially SWE-bench Pro and Humanity's Last Exam (HLE), but benchmark comparisons between Mythos and GPT-5.5 are imprecise. The released Opus 4.7 and GPT-5.5 are neck and neck.[8]

It is important to clarify that Mythos Preview is not a generally available product; Anthropic has classified it as a strategic defensive asset due to its high cybersecurity risks, restricting its access to a small, limited audience of trusted partners and government agencies. Because Mythos is excluded from broad commercial use, the primary market competition remains between GPT-5.5, Gemini 3.1 Pro, and Claude Opus 4.7.[4]

GPT-5.5 vs. Gemini 3.1 Pro: The Value Play

Google's Gemini 3.1 Pro occupies a distinct position in the competitive landscape — not the most capable on any single metric, but potentially the best value.

In OpenAI's published comparisons: on BrowseComp, Gemini 3.1 Pro reaches 85.9, slightly above GPT-5.5's 84.4; but on GDPval, GPT-5.5 is 84.9 vs Gemini 3.1 Pro at 67.3; on Toolathlon, GPT-5.5 is 55.6 vs Gemini 3.1 Pro at 48.8.[9]

The pricing gap is the main argument for Gemini. If you need the absolute largest context window, Gemini 3.1 Pro still leads at 2 million tokens — at a significantly lower price point ($1.25/$10 per million tokens). For long-document analysis or massive codebase ingestion, Gemini remains the cost-effective choice.[2]

In real workflows, Gemini often fits tasks tightly coupled to Google systems (search, video understanding, Google Workspace). GPT-5.5 is often better when operating inside ChatGPT / Codex / API pipelines for complex coding and multi-step execution.[9]

Safety and Cybersecurity: Why the API Launch Is Delayed

The most unusual aspect of the GPT-5.5 launch is the delayed API availability. Unlike previous GPT-5.x releases, which were immediately available via API, GPT-5.5 launched only in ChatGPT and Codex — with API access announced as coming "very soon."

The reason is explicitly stated: cybersecurity concerns.

OpenAI classifies GPT-5.5's biological, chemical, and cybersecurity capabilities as "High" in its Preparedness Framework, the same rating as its recent predecessors, but not "Critical."[1] OpenAI ties the API delay to this classification, saying that serving a High-classified model at API scale requires additional safeguards it is still working through.[4]

The Cybersecurity Assessment

OpenAI's system card provides detailed data on GPT-5.5's cybersecurity capabilities. The strongest runs produced credible memory safety leads in hardened targets, including cases with controlled exploitation primitives. This suggests that substantial parts of real world vulnerability research are becoming increasingly automatable when models are paired with tool use, build systems, and verification infrastructure. However, GPT-5.5 did not independently produce a functional full chain exploit or another verifier-confirmed Critical-level outcome against real world targets in this evaluation.[8]

GPT-5.5 had higher success rates and lower costs per success than GPT-5.4, indicating its cyberoffensive capabilities are stronger. On Irregular's atomic challenge suite, GPT-5.5 achieved an average success rate of 98% in Network Attack Simulation challenges, 92% in Vulnerability Research and Exploitation challenges, and 54% in Evasion challenges.[8]

Trusted Access for Cyber

To balance capability with safety, OpenAI introduced a novel access-tiering system. Trusted Access for Cyber is OpenAI's identity-gated pathway for giving verified defenders and legitimate users access to more permissive cyber capabilities while keeping broader safeguards in place.[5]

Under this system, standard users will hit stricter classifiers on cyber-adjacent requests, and OpenAI acknowledges that some of these refusals "may be annoying." Verified defenders, by contrast, can apply for fewer restrictions on legitimate security work. This is the first time OpenAI has formally tiered cybersecurity access based on who's asking.[4]

The Bio Bug Bounty

In a novel approach to safety validation, OpenAI launched a red-teaming program that invites selected researchers to try to find a universal jailbreak that defeats GPT-5.5's bio safety challenge. OpenAI says the goal is to test whether a reproducible universal jailbreak exists after deployment.[5]

The Broader Safety Context

The cybersecurity precautions exist within a broader context that has become increasingly tense. The cybersecurity risks presented by AI have been top of mind for tech executives and government officials since Anthropic announced its Mythos model earlier this month. The company decided to limit Mythos' rollout because of its ability to identify weaknesses and security flaws within software.[1]

OpenAI released GPT-5.5 with its strongest set of safeguards to date, designed to reduce misuse while preserving access for beneficial work. OpenAI evaluated this model across its full suite of safety and preparedness frameworks, worked with internal and external redteamers, added targeted testing for advanced cybersecurity and biology capabilities, and collected feedback on real use cases from nearly 200 trusted early-access partners before release.[3]

GPT-5.5 Pro: The Premium Tier

GPT-5.5 ships in two variants, and the distinction between them matters for enterprise buyers.

GPT-5.5 Pro is distinguished by enhanced precision and specialized logic for handling the most rigorous cognitive demands. While the standard version serves as the versatile flagship for general intelligence tasks, the Pro model is designed specifically for high-stakes environments such as legal research, data science, and advanced business analytics, where accuracy is paramount. This premium tier provides noticeably more comprehensive and better-structured responses.[4]

The Pro tier shows its strongest advantages on the most demanding benchmarks. On BrowseComp, which tests a model's ability to track down hard-to-find information across the web, GPT-5.5 Pro scores 90.1%, ahead of Gemini 3.1 Pro at 85.9%.[1]

Early testers used GPT‑5.5 Pro in ChatGPT less like a one-shot answer engine and more like a research partner: critiquing manuscripts over multiple passes, stress-testing technical arguments, proposing analyses, and working with code, notes, and PDF context.[3]

The "Super App" Vision

GPT-5.5 isn't just a model release; it's a building block in OpenAI's broader product strategy. Brockman said that the model was an additional step toward creating a "super app," a multi-purpose, Swiss Army knife of a program. The co-founders envision combining ChatGPT, Codex, and an AI browser into one unified service that can aid enterprise customers.[2]

OpenAI is building the global infrastructure for agentic AI, making it possible for people and businesses around the world to get work done with AI. Over the past year, AI has dramatically accelerated software engineering. With GPT‑5.5 in Codex and ChatGPT, that same transformation is beginning to extend into scientific research and the broader work people do on computers.[3]

The infrastructure behind this vision is massive. OpenAI has committed to deploying more than 10 gigawatts of NVIDIA systems for its next-generation AI infrastructure — a buildout that will put millions of NVIDIA GPUs at the foundation of OpenAI's model training and inference for years ahead.[4]

The Infrastructure: NVIDIA Partnership and Hardware

GPT-5.5 runs on cutting-edge NVIDIA hardware, and the infrastructure story is an important part of the performance narrative.

GPT-5.5 runs on NVIDIA GB200 NVL72 rack-scale systems, which NVIDIA says deliver 35x lower cost per million tokens and 50x higher token output per second per megawatt compared with prior systems.[10]

In one of the more remarkable claims, OpenAI says it has already put GPT-5.5's coding skills to use internally: the model helped optimize the software that manages the infrastructure it runs on, NVIDIA's GB200 and GB300 NVL72 systems. According to the company, GPT-5.5 found a more efficient approach that increased token generation speeds by over 20%.[6]

In other words, GPT-5.5 helped make itself faster — a recursive improvement loop that highlights how AI capabilities are beginning to compound.

Who Should Use GPT-5.5 (And Who Shouldn't)

Based on the benchmark data, pricing structure, and early user reports, clear use-case recommendations emerge.

GPT-5.5 Is the Best Choice For:

Agentic coding and DevOps automation. The Terminal-Bench 2.0 lead is decisive. If you're building autonomous coding agents, CI/CD pipeline automation, or unattended terminal workflows, GPT-5.5 is the strongest available option.

Multi-step knowledge work. The GDPval results suggest GPT-5.5 excels at tasks that span multiple tools and require persistent execution — creating reports, analyzing data, building spreadsheets, and completing professional tasks across disciplines.

Computer use and software operation. The OSWorld-Verified results place GPT-5.5 at the top for tasks requiring interaction with real computer environments — clicking, typing, navigating, and completing workflows across applications.

Scientific research workflows. The GeneBench, BixBench, and Ramsey numbers results suggest GPT-5.5 is the strongest available model for multi-stage scientific data analysis, especially in genetics, bioinformatics, and mathematics.

Claude Opus 4.7 Remains Better For:

Complex GitHub issue resolution. SWE-bench Pro shows a clear Claude advantage. For teams where the primary workflow involves resolving real software engineering issues in existing codebases, Claude is still ahead.

Academic reasoning tasks. HLE results favor Claude and Gemini for expert-level cross-domain reasoning without tool assistance.

Long-form writing and prose quality. While not directly benchmarked in the GPT-5.5 launch, Claude's reputation for higher-quality prose and more nuanced writing remains strong based on previous model comparisons.

Gemini 3.1 Pro Remains Better For:

Cost-sensitive production deployments. With 2-5x lower per-token pricing and a 2M-token context window, Gemini is the pragmatic choice for high-volume workloads.

Ultra-long context processing. Gemini 3.1 Pro still leads at 2 million tokens[2] — double GPT-5.5's context window.

Google ecosystem integration. In real workflows, Gemini is often the better fit for tasks tightly coupled to Google systems.[9]
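The recommendations above can be condensed into a simple routing table. The sketch below is a hypothetical illustration: the task category names and the model label strings are placeholders rather than official API identifiers, and falling back to GPT-5.5 for unlisted tasks is an assumption based on its lead in general agentic work.

```python
# Placeholder model labels; these are NOT official API model identifiers.
GPT_55 = "gpt-5.5"
OPUS_47 = "claude-opus-4.7"
GEMINI_31_PRO = "gemini-3.1-pro"

# Hypothetical task categories, each mapped to the model the section above recommends.
ROUTES: dict[str, str] = {
    "agentic_coding": GPT_55,             # Terminal-Bench 2.0 lead
    "knowledge_work": GPT_55,             # GDPval results
    "computer_use": GPT_55,               # OSWorld-Verified results
    "scientific_research": GPT_55,        # GeneBench, BixBench, Ramsey numbers
    "issue_resolution": OPUS_47,          # SWE-bench Pro lead
    "academic_reasoning": OPUS_47,        # HLE favors Claude (and Gemini)
    "long_form_writing": OPUS_47,         # reputation; not benchmarked at launch
    "high_volume": GEMINI_31_PRO,         # lower per-token pricing
    "ultra_long_context": GEMINI_31_PRO,  # 2M-token context window
    "google_ecosystem": GEMINI_31_PRO,    # tight coupling to Google systems
}


def route(task: str, default: str = GPT_55) -> str:
    """Return the recommended model label for a task category.

    Unknown categories fall back to `default` (GPT-5.5 here, an assumption).
    """
    return ROUTES.get(task, default)


print(route("issue_resolution"))  # claude-opus-4.7
print(route("data_cleanup"))      # gpt-5.5 (falls back to the default)
```

A production router would typically also check the input length against each model's context window before dispatching.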

The Pace of AI Development: What GPT-5.5 Tells Us

Perhaps the most significant aspect of GPT-5.5 is what it says about the speed of AI development in 2026.

OpenAI released its previous model just last month, with earlier releases in December and, before that, November. The company has continued to churn out new models at a brisk pace, a trend that company staff said should be expected to continue for the foreseeable future. "We see pretty significant improvements in the short term, extremely significant improvements in the medium term," said Jakub Pachocki, OpenAI's chief scientist.[2]

This launch lands in a market that has been moving rapidly since the agentic AI boom began. GPT-5.4 arrived just two days after GPT-5.3, while Xiaomi went from MiMo-V2-Pro to MiMo 2.5 Pro, with full multimodal capabilities, in roughly five weeks. The gap between GPT-5.4 and GPT-5.5 was about seven weeks. That's the tempo now.[1]

GPT-5.5 is the clearest example yet that the AI capability curve is still bending upward rather than flattening. It is not a new paradigm, but it is a real jump: state-of-the-art agentic benchmarks, meaningful wins on economically valuable knowledge work, a context window large enough for almost any document set, and a Pro tier fast enough that advanced reasoning is not a once-a-day treat.[10]

The practical implication is perhaps best summarized by this observation: A year ago, AI could draft. Six months ago, it could reason. Today it can complete. That changes what it is worth handing to a model: not a small piece of work to be reviewed, but an entire task to be finished.[10]

Enterprise Impact: The Bank of New York Case Study

One of the most telling data points from the GPT-5.5 launch comes from an early enterprise tester. The Bank of New York has been testing GPT-5.5 in recent weeks, alongside early access to models from rivals like Anthropic. CIO Leigh-Ann Russell said the improvements are meaningful. "What we're actually seeing from 5.5, that I think is really important for a highly regulated institution, is the response quality — but also a really impressive hallucination resistance," she said. "A bank needs to have very high accuracy, so this becomes critical, and we are seeing a step change with this model."[4]

This feedback is significant because hallucination resistance has been one of the most persistent criticisms of large language models in enterprise contexts. If GPT-5.5 represents a genuine "step change" in accuracy for regulated industries, the implications for financial services, healthcare, and legal applications are substantial.

Availability and Access

GPT-5.5 is available starting April 23, 2026 to Plus, Pro, Business, and Enterprise users in ChatGPT, and in Codex for developers. GPT-5.5 Pro is available to Pro, Business, and Enterprise users in ChatGPT.[10]

Unfortunately for third-party developers, API access is not yet available for either GPT-5.5 or GPT-5.5 Pro; the company's announcement blog post says it is coming "very soon."[4]

OpenAI has not announced a free-tier rollout.[10]

Frequently Asked Questions

What is GPT-5.5?

GPT-5.5 is OpenAI's newest frontier model and the headline release in the GPT-5 family. OpenAI describes it as a new class of intelligence built specifically for real work and for powering agents, with the ability to understand complex goals, use tools, check its own work, and carry multi-step tasks through to completion.[10]

When was GPT-5.5 released?

OpenAI announced its latest artificial intelligence model, GPT-5.5, on Thursday, April 23, 2026,[1] and began rolling it out to paid subscribers the same day.

How much does GPT-5.5 cost?

API pricing for GPT-5.5 will be $5 per million input tokens and $30 per million output tokens, with a 1-million token context window. GPT-5.5 Pro will cost $30 per million input tokens and $180 per million output tokens.[8] In ChatGPT, it's available to Plus ($20/month), Pro ($200/month), Business, and Enterprise subscribers.
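For budgeting, per-request cost is simply token counts multiplied by the listed rates. The sketch below uses the API prices above and a hypothetical request of 200K input and 20K output tokens; it assumes flat per-token rates and ignores any cached-input, batch, or long-context pricing tiers, which this article does not cover.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """List prices in USD per one million tokens."""
    input_per_m: float
    output_per_m: float

    def cost(self, input_tokens: int, output_tokens: int) -> float:
        """Estimated USD cost of one request at flat per-token rates."""
        return (input_tokens * self.input_per_m
                + output_tokens * self.output_per_m) / 1_000_000


GPT_55_PRICING = Pricing(input_per_m=5.0, output_per_m=30.0)
GPT_55_PRO_PRICING = Pricing(input_per_m=30.0, output_per_m=180.0)

# Hypothetical request: 200K tokens of context in, 20K tokens out.
print(f"GPT-5.5:     ${GPT_55_PRICING.cost(200_000, 20_000):.2f}")      # $1.60
print(f"GPT-5.5 Pro: ${GPT_55_PRO_PRICING.cost(200_000, 20_000):.2f}")  # $9.60
```

Because both Pro rates are exactly 6x the standard ones, the same request always costs six times as much on GPT-5.5 Pro.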

Is GPT-5.5 better than Claude?

It depends on the task. Claude Opus 4.7 leads on SWE-bench Pro (64.3% vs 58.6%) for complex multi-file GitHub issue resolution. GPT-5.5 leads on Terminal-Bench 2.0 (82.7%) for command-line workflows. For pure code quality on hard problems, Opus 4.7 wins. For agentic coding workflows with tool coordination, GPT-5.5 has the edge.[2]

Is GPT-5.5 available for free?

OpenAI has not announced a free-tier rollout.[10] GPT-5.5 currently requires a paid ChatGPT subscription (Plus, Pro, Business, or Enterprise).

What is GPT-5.5's context window?

GPT-5.5 supports a 1 million token context window in the API. GPT-5.5 in Codex uses a 400K context window by default, which OpenAI has found sufficient for most engineering workflows.[2]
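Deciding where a large input belongs comes down to simple arithmetic against the window sizes. The sketch below uses the figures reported in this article (1M tokens in the API, 400K as Codex's default, 2M for Gemini 3.1 Pro). It assumes the prompt and the reserved output budget share one context window, and the 32K-token output reserve is a hypothetical default. Token counts would come from a tokenizer; here they are simply passed in.

```python
# Context-window sizes as reported in this article (tokens).
CONTEXT_WINDOWS = {
    "GPT-5.5 (API)": 1_000_000,
    "GPT-5.5 (Codex default)": 400_000,
    "Gemini 3.1 Pro": 2_000_000,
}


def fits(prompt_tokens: int, max_output_tokens: int, window: int) -> bool:
    """True if the prompt plus the reserved output budget fits in the window.

    Assumes input and output share a single context budget.
    """
    return prompt_tokens + max_output_tokens <= window


def options(prompt_tokens: int, max_output_tokens: int = 32_000) -> list[str]:
    """List every deployment whose window can hold the request."""
    return [name for name, window in CONTEXT_WINDOWS.items()
            if fits(prompt_tokens, max_output_tokens, window)]


# A hypothetical 600K-token document set: too large for Codex's default window.
print(options(600_000))  # ['GPT-5.5 (API)', 'Gemini 3.1 Pro']
```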

Why is the API delayed?

OpenAI classified GPT-5.5 as High on both biological/chemical and cybersecurity capabilities under its Preparedness Framework. That's the reason the API is delayed. OpenAI says serving a High-classified model at API scale requires additional safeguards it's working through.[4]

What is GPT-5.5 Pro?

GPT‑5.5 Pro is designed for even harder questions and higher-accuracy work.[10] It's available to Pro, Business, and Enterprise users and delivers higher scores on the most demanding benchmarks, particularly in mathematics and web research.

What codename does GPT-5.5 have?

GPT-5.5 is codenamed "Spud."[4]

How does GPT-5.5 compare to GPT-5.4?

GPT-5.5 scored 82.7% on Terminal-Bench 2.0, which tests command-line workflows, compared with 75.1% for GPT-5.4.[5] OpenAI reports that GPT-5.5 matches GPT-5.4's per-token latency while using fewer tokens to complete similar tasks.[3] The trade-off is a 2x increase in per-token pricing.
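Whether fewer tokens offset the higher price is a matter of multiplication: per-task cost scales with price per token times tokens used. With a uniform 2x price increase, GPT-5.5 is cheaper per task than GPT-5.4 only if it needs less than half the tokens. The sketch below makes that explicit; the token ratios in the loop are hypothetical, since the article gives no single token-reduction figure.

```python
def relative_task_cost(price_ratio: float, token_ratio: float) -> float:
    """Per-task cost of the new model relative to the old one.

    price_ratio: new per-token price / old per-token price (2.0 for GPT-5.5 vs GPT-5.4).
    token_ratio: tokens the new model uses / tokens the old model uses for the same task.
    If input and output shrink by different amounts, pass a cost-weighted ratio.
    """
    return price_ratio * token_ratio


def break_even_token_ratio(price_ratio: float) -> float:
    """Token ratio at which the new model's per-task cost equals the old one's."""
    return 1.0 / price_ratio


PRICE_RATIO = 2.0  # GPT-5.5 per-token price is 2x GPT-5.4's

# Hypothetical token ratios, for illustration only.
for token_ratio in (0.9, 0.7, 0.5):
    cost = relative_task_cost(PRICE_RATIO, token_ratio)
    print(f"uses {token_ratio:.0%} of the tokens -> pays {cost:.0%} as much")
print(f"break-even at {break_even_token_ratio(PRICE_RATIO):.0%} of GPT-5.4's tokens")
```

In other words, unless GPT-5.5 halves token usage, its efficiency gains narrow the price gap per task rather than close it.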

What is the best model available right now?

Rather than asking "which model is best," ask "which model is best for this specific task?" There is no single best model, only the best model for your task distribution.[1] GPT-5.5 leads on agentic workflows, Claude Opus 4.7 leads on complex issue resolution and tool-free reasoning, and Gemini 3.1 Pro leads on cost efficiency and long context.

Conclusion

GPT-5.5 is a new foundation, the first fully retrained base model OpenAI has produced since GPT-4.5 — and it represents a genuine step forward in what AI can do autonomously. The Terminal-Bench 2.0 dominance, the Ramsey numbers proof, the efficiency gains, and the early enterprise feedback all point to a model that's less about answering questions and more about completing work.

The competitive picture remains genuinely complex. Claude Opus 4.7 is still the stronger pure coder on SWE-bench Pro. Gemini 3.1 Pro is still the cost leader with the largest context window. And Anthropic's restricted Mythos Preview appears to exceed all publicly available models on several key benchmarks.

But for the broadest definition of "getting work done on a computer" — the agentic, multi-tool, multi-step workflows that enterprise customers are increasingly demanding — GPT-5.5 makes the strongest case of any model available today. Whether that advantage holds for weeks or months in this relentless release cadence remains to be seen.

"We are moving to a compute-powered economy," said OpenAI president Greg Brockman, referring to the idea that work will increasingly be powered by AI capacity, making compute the bedrock of the economy.[4]

GPT-5.5 is OpenAI's most convincing evidence yet that this future is arriving faster than most people expected.

References

- OpenAI announces GPT-5.5, its latest artificial intelligence model

- OpenAI Releases GPT-5.5: Faster, Smarter—And Pricier

- GPT-5.5 vs Gemini 3.1 Pro vs Claude Mythos: Benchmarks & Routing Guide | Lushbinary

- OpenAI unveils GPT-5.5, claims a "new class of intelligence" at double the API price

- ChatGPT Revenue and Usage Statistics (2026) - Business of Apps

- OpenAI releases GPT-5.5, bringing company one step closer to an AI 'super app' | TechCrunch

- GPT-5.5 vs Claude Opus 4.7: Benchmarks, Pricing & Coding Compared | Lushbinary

- OpenAI releases GPT-5.5 with improved coding and research capabilities By Investing.com

- GPT-5.5 Codex Guide: Autonomous Coding Agents & SDK Integration | Lushbinary

- ChatGPT Statistics (2026) – Active Users & Growth Data

- OpenAI rolls out GPT-5.5 with improved contextual understanding, Plus and up

- Introducing GPT-5.5 | OpenAI

- How GPT-5.2 stacks up against Gemini 3.0 and Claude Opus 4.5

- OpenAI releases GPT‑5.5, its newest AI model with enhanced coding capabilities

- Introducing GPT-5.2-Codex | OpenAI

- OpenAI ChatGPT Statistics 2026: The Definitive Data

- OpenAI releases "Spud" GPT-5.5 model

- OpenAI launches GPT-5.5 just weeks after GPT-5.4 as AI race accelerates | Fortune

- OpenAI’s New GPT-5.5 Powers Codex on NVIDIA Infrastructure — and NVIDIA Is Already Putting It to Work

- OpenAI's GPT-5.5 is here, and it's no potato: narrowly beats Anthropic's Claude Mythos Preview on Terminal-Bench 2.0 | VentureBeat

- Open AI's GPT-5.5: Benchmarks, Safety Classification, and Availability | DataCamp

- ChatGPT Statistics 2026: How Many People Use ChatGPT?

- OpenAI Unveils GPT-5.5 to Field Tasks With Limited Instructions - Bloomberg

- AI Model Benchmarks Apr 2026 | Compare GPT-5, Claude 4.5, Gemini 2.5, Grok 4 | LM Council

- OpenAI releases GPT-5.5, its latest AI model with enhanced coding abilities

- Introducing upgrades to Codex | OpenAI

- OpenAI’s ChatGPT-5.5 release is really a bet on agentic work

- ChatGPT Statistics 2026: Users, Revenue, Traffic, Crawl ...

- OpenAI releases GPT-5.5 with advanced math, coding capabilities - SiliconANGLE

- GPT-5.5 Review: Benchmarks, Pricing & Vs Claude (2026)

- Codex leak suggests imminent GPT-5.5 announcement

- [AINews] GPT 5.5 and OpenAI Codex Superapp - Latent.Space

- OpenAI Releases GPT-5.5 With Stronger Agentic Coding, Computer Use, and Scientific Research Capabilities - gHacks Tech News

- ChatGPT Statistics 2026: Users, Revenue & Growth

- GPT-5.4 vs Claude Opus 4.6 vs Gemini 3.1 Pro: Real Benchmark Results Compared | MindStudio

- OpenAI's GPT-5.5 masters agentic coding with 82.7% benchmark score

- GPT-5.5 Arrives, OpenAI's Smartest Model Yet Excels at Coding and Research - PhoneWorld

- ChatGPT Statistics 2026 | Superlines

- How OpenAI's recently released GPT-5.5 stacks up with Anthropic's gated Claude Mythos

- OpenAI releases GPT-5.5, a more powerful engine for coding, science, and general work - Fast Company

- GPT-5.5 System Card - Deployment Safety Hub - OpenAI

- ChatGPT Statistics and User Trends for 2026 | Second Talent

- What is OpenAI’s GPT-5.5, its newest ‘smartest' model?

- GPT-5.5 Released: First Fully Retrained Base Model Since GPT-4.5, 1M Context, $5/$30 Pricing

- GPT-5.5 Deep Review: New Features, Real Metrics, Pricing, and Competitor Comparison

- GPT-5.5 Pricing: Full Breakdown of API, Codex, and ChatGPT Costs (April 2026)

- Models – Codex | OpenAI Developers

- OpenAI releases ChatGPT 5.5 Flagship AI Models (APK Download)

- ChatGPT statistics 2026: all the essential figures you need - Incremys

- OpenAI upgrades ChatGPT and Codex with GPT-5.5: 'a new class of intelligence for real work' - 9to5Mac

- GPT-5.5 Released: Everything You Need to Know

- OpenAI Releases GPT-5.5: Stronger Agentic Coding, Knowledge Work, and Research

- OpenAI launches GPT-5.5, calling it "a new class of intelligence" - The New Stack

- OpenAI releases GPT-5.5 with agentic AI and token efficiency gains | ETIH EdTech News — EdTech Innovation Hub

- OpenAI's ChatGPT Surpasses 900 Million Weekly Users, 50 Million Paying Subscribers — Roic News