Claude Opus 4.7 Is Here — What Changed, What's Better, and Is It Worth Upgrading?

Written by Jay Kim

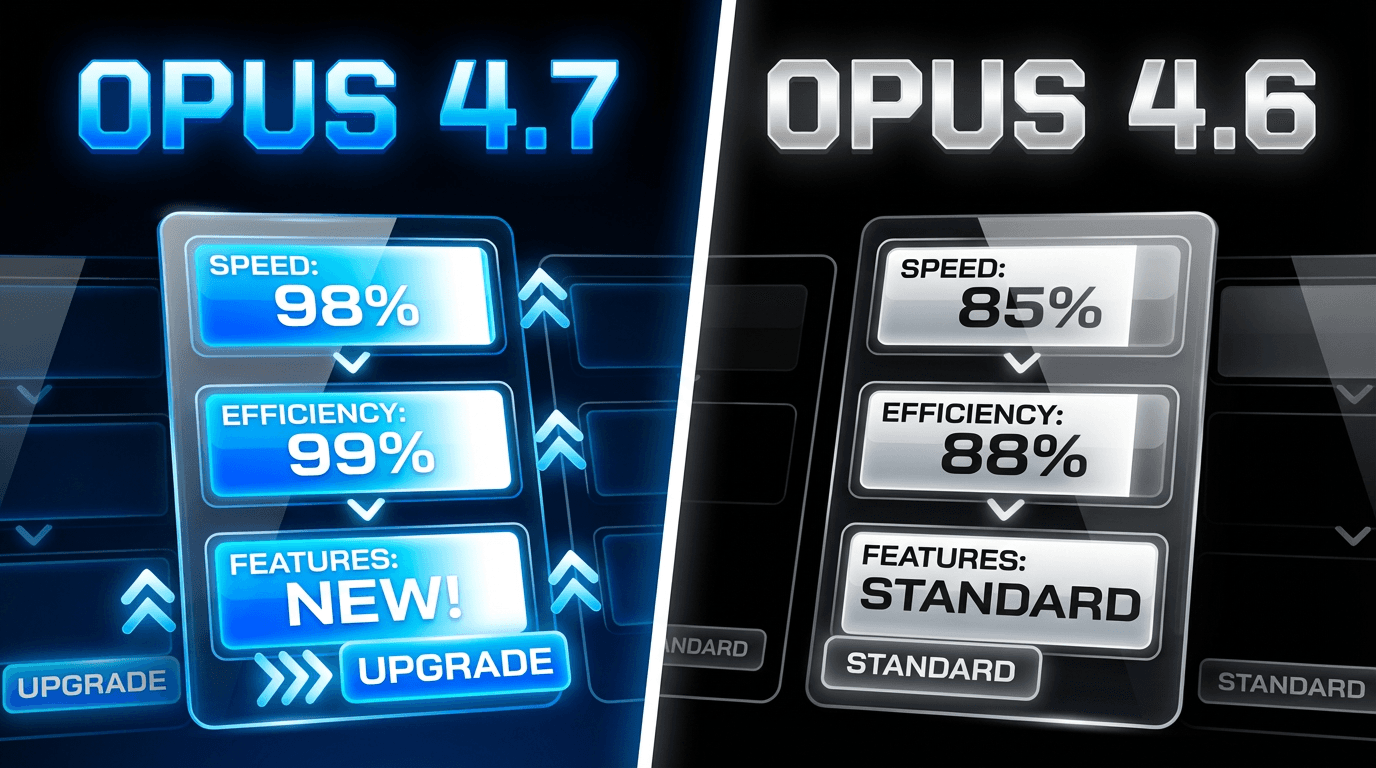

Claude Opus 4.7 launched April 16, 2026 with major upgrades in coding, vision, and instruction following. Here is what changed, how it compares to GPT-5.4, and what it means for content creators.

If you use AI for content creation, coding, scripting, or generating visuals, the model powering your workflow matters more than you think. On April 16, 2026, Anthropic released Claude Opus 4.7, the newest upgrade to its flagship AI model, and the improvements are significant enough that every creator and developer should pay attention.

Claude Opus 4.7 is Anthropic's most advanced AI model, released in April 2026 as the successor to Opus 4.6. It is optimized for multi-step reasoning, autonomous long-running tasks, and multi-agent coordination. The model retains a 1 million token context window and delivers improvements in coding, analysis, creative writing, and enterprise knowledge work across finance, legal, and research domains.[9]

Whether you are writing scripts for YouTube Shorts, prompting AI image generators, or building content strategies for your channel, the AI model behind your tools determines the quality of what you get back. Opus 4.7 changes several things at once, and this guide walks through each of them.

What Actually Changed in Claude Opus 4.7

The upgrade from Opus 4.6 to Opus 4.7 covers five major areas. Each one has practical implications for how creators and developers work with AI on a daily basis.

1. Coding Performance Got a Major Boost

The biggest headline is coding. Anthropic's new Claude Opus 4.7 improves on software-engineering benchmarks, including SWE-bench Pro at 64.3%, SWE-bench Verified at 87.6%, Terminal-Bench 2.0 at 69.4%, and OSWorld-Verified at 78.0%.[1]

For context, Opus 4.6 scored 53.4% on SWE-bench Pro. That means Opus 4.7 jumped roughly 11 points on the benchmark that most closely simulates real-world GitHub issue resolution.

On one company's 93-task coding benchmark, Claude Opus 4.7 lifted resolution by 13% over Opus 4.6, including four tasks neither Opus 4.6 nor Sonnet 4.6 could solve.[5]

Why does this matter for creators? If you use AI to build websites, automate workflows, or generate code for tools and integrations, the model behind those tasks now handles complex multi-step problems with more precision. This includes things like building custom thumbnail generators, setting up automation pipelines, or creating interactive content tools.

If you are exploring AI business ideas in 2026, the coding capabilities alone open up entirely new opportunities for building products and services with AI assistance.

2. Vision Resolution Tripled

This upgrade does not get the attention it deserves. Opus 4.7 processes images at resolutions up to 2,576 pixels on the long edge, more than three times the capacity of prior Claude models.[6]

Claude Opus 4.7 also advances visual capabilities with high-resolution image support improving accuracy on charts, dense documents, and screen UIs where fine detail matters.[3]
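If you prepare images yourself before sending them to a model, the long-edge figure above translates into a simple resize rule. The following is a minimal sketch, not an official preprocessing routine: the function name `fit_long_edge` is hypothetical, and the only number taken from the article is the 2,576-pixel long edge.

```python
MAX_LONG_EDGE = 2576  # long-edge limit quoted in this article


def fit_long_edge(width: int, height: int,
                  max_long_edge: int = MAX_LONG_EDGE) -> tuple[int, int]:
    """Scale dimensions down so the longer side is at most max_long_edge.

    Aspect ratio is preserved and images that already fit are never upscaled.
    """
    if width <= 0 or height <= 0:
        raise ValueError("width and height must be positive")
    long_edge = max(width, height)
    if long_edge <= max_long_edge:
        return width, height
    new_width = max(1, round(width * max_long_edge / long_edge))
    new_height = max(1, round(height * max_long_edge / long_edge))
    return new_width, new_height


# A 4K screenshot is scaled down; a 720p frame is left untouched.
print(fit_long_edge(3840, 2160))
print(fit_long_edge(1280, 720))
```

The returned size can be passed to whatever image library you already use for the actual resize.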

For content creators, this has direct practical value. When you are analyzing thumbnails, working with dense visual layouts, or processing screenshots for tutorials, the model now reads fine details that previous versions missed entirely. The model generates higher-quality interfaces, slides, and documents as a result, which matters for workflows that involve visual content processing or creation.[4]

If you are creating YouTube thumbnails with AI, this kind of visual understanding improvement means the AI behind thumbnail generation can handle more complex compositions, read text overlays more accurately, and produce visuals with better detail. Creators working on thumbnail makeovers or AI thumbnail styles will notice the difference in output quality.

3. Long-Running Agentic Tasks Without Losing Focus

One of the most frustrating problems with earlier AI models was context drift. You would give the model a complex task, and halfway through it would lose track of what it was doing. Opus 4.7 addresses this directly.

Opus 4.7 pushes past its predecessor on every major benchmark, from agentic coding and graduate-level reasoning to enterprise knowledge work. It is designed to run tasks that take hours, not seconds, without losing coherence or accuracy along the way.[9]

Opus 4.7 retains the full 1 million token context window introduced in Opus 4.6, but now uses it more effectively. Improved long-context retrieval means the model scores higher on multi-needle recall tasks even deep into massive documents, entire codebases, or lengthy conversation histories.[9]

For creators who use AI to generate entire content plans, write long scripts, or process research across multiple sources, this means you can push further in a single session without the model drifting off track. If you are planning a month of YouTube content in one hour, the improved context retention makes the output more consistent from start to finish.

4. Better Instruction Following and Self-Verification

Opus 4.7 is designed to handle longer, less supervised tasks, verifying its own outputs before reporting back and following instructions with more precision. The pitch is a model you can hand off genuinely hard work to without watching every step.[4]

According to Hex, Claude Opus 4.7 is the strongest model the company has evaluated. It correctly reports when data is missing instead of providing plausible-but-incorrect fallbacks, and it resists dissonant-data traps that even Opus 4.6 falls for.[5]

This matters because AI hallucination has been one of the biggest obstacles to trusting AI outputs in professional workflows. When you ask for statistics, data analysis, or factual content, a model that tells you it does not have the answer is far more useful than one that confidently makes something up.

For creators writing blog posts that rank on Google, accuracy is everything. A model that self-verifies before responding gives you a stronger foundation for content that needs to be factually reliable.

5. Adaptive Thinking Is Always On

Unlike Opus 4.6, where you could toggle between adaptive and fixed thinking budgets, Opus 4.7 keeps adaptive thinking on at all times. It is also a more intelligent, more efficient model at every effort level: low-effort Opus 4.7 is roughly equivalent to medium-effort Opus 4.6.[5]

The model automatically adjusts how much reasoning it applies based on the complexity of the task. Simple questions get quick answers. Complex multi-step problems get deeper reasoning. You no longer need to manually configure thinking budgets, which simplifies the workflow for both developers and creators.

New Features for Developers and Power Users

The New "xhigh" Effort Level

Anthropic introduced a new "xhigh" effort level between high and max settings, providing additional control over the balance between reasoning capability and response speed.[6]

The effort scale now runs from low to medium to high to xhigh to max, giving developers five levels of control over how deeply the model reasons through a problem. For creators using AI through APIs or advanced tools, this means you can fine-tune the tradeoff between speed and quality depending on whether you need a quick draft or a deeply reasoned analysis.
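One way to keep that tradeoff explicit in your own tooling is to treat the five levels as an ordered scale and choose one per task type. This is only an illustrative sketch: the `Effort` enum, the `TASK_EFFORT` mapping, and `pick_effort` are hypothetical names, and how the chosen label is actually passed to the model is not shown here, so check the API documentation for the real parameter.

```python
from enum import IntEnum


class Effort(IntEnum):
    """Effort levels in the order this article lists them."""
    LOW = 1
    MEDIUM = 2
    HIGH = 3
    XHIGH = 4
    MAX = 5


# Hypothetical mapping from task type to effort; tune it to your own workload.
TASK_EFFORT = {
    "quick_draft": Effort.LOW,
    "video_script": Effort.MEDIUM,
    "code_review": Effort.XHIGH,
    "deep_analysis": Effort.MAX,
}


def pick_effort(task: str, default: Effort = Effort.HIGH) -> str:
    """Return the lowercase effort label for a task, or the default label."""
    return TASK_EFFORT.get(task, default).name.lower()


print(pick_effort("quick_draft"))
print(pick_effort("something_else"))
```

Because the enum is ordered, comparisons such as `Effort.HIGH < Effort.XHIGH` work, which makes it easy to cap effort for cost-sensitive jobs.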

Task Budgets in Public Beta

Task budgets, currently in beta, let Claude prioritize work and manage costs across longer runs. For teams that have been leaning heavily on Claude for autonomous coding workflows, this kind of cost visibility matters.[4]

This is particularly relevant for teams running content production at scale. If you are generating hundreds of AI images, scripts, or thumbnails through API integrations, task budgets help you manage costs without manually monitoring every request.

The /ultrareview Command

The new /ultrareview command runs a dedicated review session that flags issues a careful human reviewer would catch, complementing the AI-powered Code Review feature Anthropic introduced earlier this year.[4]

While this is primarily a coding feature, the principle applies broadly. AI that can review its own work and flag potential issues before delivery is valuable across any professional workflow, from content creation to data analysis.

How Claude Opus 4.7 Compares to GPT-5.4 and Gemini 3.1 Pro

The competitive landscape in April 2026 is intense. Here is how the three major frontier models stack up.

GPT-5.4 trades blows with Opus 4.7 depending on the task, and Gemini 3.1 Pro holds its own on multilingual benchmarks. But in aggregate, particularly for agentic and coding workloads where Claude has historically led, Opus 4.7 extends the gap rather than ceding ground.[4]

On SWE-bench Pro (agentic coding), Opus 4.7 leads at 64.3% compared to GPT-5.4 at 57.7% and Gemini 3.1 Pro at 54.2%. On graduate-level reasoning (GPQA Diamond), all three models score in the 94% range, making it essentially a tie.
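To put those SWE-bench Pro numbers side by side, the short sketch below computes the percentage-point lead of Opus 4.7 over each model. The scores are the ones quoted in this article, including the 53.4% figure for Opus 4.6; the helper name `lead_over` is made up for illustration.

```python
# SWE-bench Pro scores (percent) as quoted in this article.
SWE_BENCH_PRO = {
    "Opus 4.7": 64.3,
    "GPT-5.4": 57.7,
    "Gemini 3.1 Pro": 54.2,
    "Opus 4.6": 53.4,
}


def lead_over(leader: str, scores: dict[str, float]) -> dict[str, float]:
    """Percentage-point lead of `leader` over every other model."""
    top = scores[leader]
    return {
        name: round(top - score, 1)
        for name, score in scores.items()
        if name != leader
    }


for name, gap in lead_over("Opus 4.7", SWE_BENCH_PRO).items():
    print(f"Opus 4.7 leads {name} by {gap} points")
```

These are differences in percentage points on a single benchmark, so they say nothing about how the models compare on other tasks.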

GPT-5.4 has advantages in specific areas like mathematical reasoning and agentic search. Opus at $5/M input and $25/M output is 2x GPT-5.4's input price and 1.67x its output price. The decision to pay that premium only makes sense when Opus's writing and instruction-following edge reduces enough downstream rework to justify it.[1]

For content creators specifically, the instruction-following and writing quality improvements in Opus 4.7 are the most relevant differentiators. When you are generating AI prompts for YouTube thumbnails, writing YouTube Shorts scripts, or crafting detailed visual descriptions, a model that follows your instructions precisely saves significant time on revisions.

Pricing: What You Need to Know

Pricing for Opus 4.7 starts at $5 per million input tokens and $25 per million output tokens, with up to 90% cost savings with prompt caching and 50% savings with batch processing.[5]

The per-token price is the same as Opus 4.6, which itself represented a major price cut from earlier Opus generations. However, there is an important detail that could affect your actual costs.

Opus 4.7 uses a new tokenizer compared to previous models, contributing to its improved performance on a wide range of tasks. This new tokenizer may use up to 35% more tokens for the same fixed text.[3]

So while the price per token has not changed, the number of tokens you use for the same work may increase. If you are running high-volume AI workflows, the new effort levels and task budgets become essential tools for managing costs effectively.
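A quick back-of-the-envelope calculation shows what that can mean for a bill. The sketch below uses the per-token prices and the "up to 35%" figure from this article; the workload numbers are invented, and it assumes the worst case where both input and output text tokenize to 35% more tokens.

```python
INPUT_PRICE_PER_M = 5.00     # USD per million input tokens (from the article)
OUTPUT_PRICE_PER_M = 25.00   # USD per million output tokens (from the article)
MAX_TOKEN_INFLATION = 0.35   # "up to 35% more tokens" upper bound


def cost_usd(input_tokens: float, output_tokens: float) -> float:
    """Cost in USD at the quoted per-million-token prices."""
    return (input_tokens * INPUT_PRICE_PER_M
            + output_tokens * OUTPUT_PRICE_PER_M) / 1_000_000


def worst_case_cost_usd(input_tokens: float, output_tokens: float,
                        inflation: float = MAX_TOKEN_INFLATION) -> float:
    """Cost if the same text tokenizes to (1 + inflation) times as many tokens."""
    factor = 1 + inflation
    return cost_usd(input_tokens * factor, output_tokens * factor)


# Hypothetical monthly workload, counted with the old tokenizer.
old = cost_usd(40_000_000, 8_000_000)
new = worst_case_cost_usd(40_000_000, 8_000_000)
print(f"before: ${old:.2f}  worst case after: ${new:.2f}")
```

Since 35% is an upper bound, real increases may be smaller, so it is worth measuring token counts on your own prompts before budgeting.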

For comparison, GPT-5.4 comes in at $2.50 per million input tokens and $15 per million output tokens, and Gemini 3.1 Pro is even cheaper at around $2 per million input tokens. The Claude premium makes sense primarily when writing quality and instruction-following accuracy save you enough rework time to justify the difference.
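How large the premium is in practice depends on your mix of input and output tokens. The sketch below works that out from the prices quoted in this article. Gemini 3.1 Pro is left out because only its input price is given here, and the helper names and the example workload are hypothetical.

```python
# USD per million tokens, as quoted in this article.
PRICES = {
    "Opus 4.7": {"input": 5.00, "output": 25.00},
    "GPT-5.4": {"input": 2.50, "output": 15.00},
}


def price_ratio(model_a: str, model_b: str, kind: str) -> float:
    """How many times more model_a charges than model_b for `kind` tokens."""
    return PRICES[model_a][kind] / PRICES[model_b][kind]


def blended_ratio(model_a: str, model_b: str,
                  input_tokens: float, output_tokens: float) -> float:
    """Cost ratio for a whole workload, assuming equal token counts on both."""
    def total(model: str) -> float:
        p = PRICES[model]
        return input_tokens * p["input"] + output_tokens * p["output"]
    return total(model_a) / total(model_b)


print(round(price_ratio("Opus 4.7", "GPT-5.4", "input"), 2))
print(round(price_ratio("Opus 4.7", "GPT-5.4", "output"), 2))
# Hypothetical workload with four input tokens for every output token.
print(round(blended_ratio("Opus 4.7", "GPT-5.4", 4_000_000, 1_000_000), 2))
```

The blended figure always falls between the output ratio and the input ratio, and it moves toward the input ratio as prompts get longer relative to responses. It assumes both models use the same number of tokens for the same text, which the tokenizer change described above may not honor.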

What This Means for Content Creators

If you are building a content workflow around AI tools, the improvements in Opus 4.7 have practical implications across multiple areas.

Better Scripts and Written Content

The improved instruction-following and self-verification make Opus 4.7 significantly better at generating scripts that match your specifications. Whether you are writing narration for AI-generated Shorts, blog posts, or video descriptions, the model is more likely to deliver exactly what you asked for on the first try.

If you are comparing AI tools for content creation, the recent ChatGPT vs Claude vs Gemini comparison for content creation in 2026 covers the strengths and weaknesses of each model across different content formats.

More Accurate Visual Prompts

The tripled vision resolution means Opus 4.7 understands visual composition at a much deeper level. When you describe what you want in a thumbnail, social media post, or video scene, the model has a better internal understanding of spatial relationships, lighting, and visual hierarchy.

Creators working on consistent AI characters across multiple images or designing YouTube banner ideas with AI prompts will benefit from the improved visual comprehension.

Stronger Long-Form Content Planning

The improved context retention is a game-changer for anyone doing long-form content strategy. You can now give the model an entire channel analysis, content calendar, and audience data in a single session and get back coherent, detailed recommendations that actually account for everything you provided.

This is especially useful for creators building 30-day YouTube Shorts plans or developing content pillar strategies for short-form creators.

How Miraflow AI Fits Into the Opus 4.7 Era

As AI models improve, the tools built on top of them get better automatically. Miraflow AI provides a complete content creation pipeline that benefits from every advancement in underlying AI capabilities.

Creators can generate the entire workflow in one place, from AI images and YouTube thumbnails to cinematic videos and AI music. Each of these tools leverages advanced AI models to turn simple prompts into production-ready content.

For example, if you are working on a new YouTube channel and need thumbnails, Shorts, and background music, you can handle the entire pipeline inside Miraflow without switching between multiple platforms. The Text2Shorts generator turns any topic into a complete vertical video with script, visuals, and voice, while the AI Music Generator creates custom background tracks that match your content style.

The principle behind better AI models applies directly here. When the underlying models improve in instruction following, visual understanding, and creative output, every tool in the Miraflow pipeline produces better results with simpler prompts.

If you are exploring how to write effective prompts for visual content, the improvements in Opus 4.7-level models mean your prompts can be more detailed and the AI will follow them more precisely.

7 Ways Creators Can Take Advantage of Opus 4.7-Level AI

Here are practical ways to apply the capabilities of frontier AI models like Opus 4.7 in your content workflow.

- Generate more detailed thumbnail prompts. With improved visual understanding, you can describe complex compositions with multiple elements, specific lighting conditions, and precise color palettes. The model handles the complexity without losing track of individual details. Try applying these techniques using the Miraflow YouTube Thumbnail Maker.

- Write longer, more structured video scripts in one session. The improved context window usage means you can provide detailed channel context, audience information, and style guidelines alongside your script request without the model losing important details mid-generation.

- Use AI for content analysis and strategy. Feed the model your YouTube analytics data, competitor analysis, and audience demographics in a single prompt to get strategic recommendations. The self-verification means the model will flag when it does not have enough data to make a recommendation rather than guessing.

- Create consistent visual styles across a content series. The enhanced vision capabilities make it easier to maintain visual consistency when generating AI images for a series of thumbnails, social posts, or video assets.

- Build automated content workflows. The coding improvements make it more practical to build custom tools, scripts, and automations that integrate AI into your content production pipeline.

- Generate multi-format content from a single brief. Give the model a comprehensive content brief and generate blog posts, video scripts, social captions, and thumbnail descriptions in one session without quality degradation across formats.

- Improve prompt quality for cinematic video generation. Better instruction following means your video prompts produce results that more closely match your creative vision, reducing the number of iterations needed to get the perfect clip.

Should You Upgrade to Tools Using Opus 4.7?

The answer depends on what you are doing with AI right now.

If you are using AI primarily for content creation, writing, and visual generation, the improvements in writing quality, instruction following, and visual understanding make Opus 4.7-powered tools noticeably better. You will spend less time revising outputs and more time publishing content.

If you are primarily cost-sensitive and running high-volume workflows, be aware that the new tokenizer may increase token usage by up to 35% for the same input. The per-token price has not changed, but your actual bill could go up. In this case, evaluate whether the quality improvements save enough revision time to offset the cost increase.
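That evaluation can be framed as a simple break-even check. The sketch below is a rough model under strong assumptions: every attempt costs about the same number of tokens, the full 35% increase applies, and the value of your own time is ignored. The function names are hypothetical.

```python
def break_even_attempts(old_attempts: float,
                        token_inflation: float = 0.35) -> float:
    """Average attempts per deliverable at which new spend equals old spend."""
    if old_attempts <= 0 or token_inflation < 0:
        raise ValueError("old_attempts must be > 0 and token_inflation >= 0")
    return old_attempts / (1 + token_inflation)


def is_cheaper(old_attempts: float, new_attempts: float,
               token_inflation: float = 0.35) -> bool:
    """True if fewer revisions more than offset the higher token count."""
    return new_attempts * (1 + token_inflation) < old_attempts


# If a script used to take 3 attempts on average, how many can it take now?
print(round(break_even_attempts(3), 2))
print(is_cheaper(old_attempts=3, new_attempts=2))
print(is_cheaper(old_attempts=3, new_attempts=2.5))
```

In other words, at the worst-case token increase the upgrade pays for itself on token spend alone only if the average number of attempts drops by roughly a quarter or more.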

If you are building custom AI integrations or automations, the coding performance improvements and new developer features like task budgets and the xhigh effort level give you significantly more control over quality and cost.

For most creators, the improvements in Opus 4.7 are meaningful enough that any tool or platform updating to this model will produce noticeably better results, especially for complex prompts and multi-step workflows.

Frequently Asked Questions

What is Claude Opus 4.7?

Claude Opus 4.7 is Anthropic's newest flagship AI model, released on April 16, 2026. It delivers improvements in coding, vision, instruction following, and long-running task performance compared to its predecessor, Opus 4.6.

How much does Claude Opus 4.7 cost?

API pricing starts at $5 per million input tokens and $25 per million output tokens, which is the same as Opus 4.6. However, the new tokenizer may use up to 35% more tokens for the same text, which could increase actual costs.

Is Claude Opus 4.7 better than GPT-5.4?

It depends on the task. Opus 4.7 leads on coding benchmarks like SWE-bench Pro (64.3% vs 57.7%) and is generally stronger at instruction following and writing quality. GPT-5.4 has advantages in mathematical reasoning and comes at a lower price point.

Can I use Claude Opus 4.7 for content creation?

Yes. Opus 4.7 excels at generating scripts, visual prompts, blog content, and strategic recommendations. Many AI content creation tools, including platforms like Miraflow AI, benefit from improvements in underlying models like Opus 4.7.

What is the difference between Claude Opus 4.7 and Claude Mythos?

Opus 4.7 is the most capable publicly available Claude model. Claude Mythos Preview is a more powerful model that Anthropic has not released publicly due to safety and cybersecurity concerns. Mythos scores 77.8% on SWE-bench Pro compared to Opus 4.7's 64.3%.

Does Claude Opus 4.7 work with images?

Yes. Opus 4.7 supports images at up to 2,576 pixels on the long edge, which is more than three times the resolution of previous Claude models. This makes it significantly better at analyzing charts, documents, screenshots, and visual content.

Where can I access Claude Opus 4.7?

The model is available through the Claude API, Amazon Bedrock, Google Cloud Vertex AI, Microsoft Foundry, GitHub Copilot, and the Claude app for Pro, Max, Team, and Enterprise users.

How does Opus 4.7 affect AI content tools like Miraflow?

As underlying AI models improve, the tools built on them produce better results. Miraflow's AI image generator, thumbnail maker, Text2Shorts, and other features all benefit from advancements in AI model capabilities like improved instruction following and visual understanding.

Conclusion

Claude Opus 4.7 represents a meaningful step forward in what AI can do for creators and developers. The coding improvements, tripled vision resolution, better instruction following, and enhanced context retention all translate into practical benefits for anyone using AI in their daily workflow.

The Opus 4.7 release comes at a moment when Anthropic is running at a pace few anticipated. Claude's traffic has grown roughly 5x over the past year, the company raised $30 billion at a $380 billion valuation in February, and enterprise adoption has accelerated sharply. Eight of the Fortune 10 are now Claude customers.[4]

For content creators, the most relevant improvements are in writing quality, visual comprehension, and the ability to handle complex multi-step tasks without losing coherence. These are the capabilities that directly impact how good your scripts, thumbnails, and content strategies turn out when you use AI tools.

If you are ready to put frontier AI to work in your content workflow, start by exploring the complete creation pipeline at Miraflow AI, where you can generate everything from AI images and thumbnails to Shorts, cinematic videos, and AI music, all from a single platform.

References

- Claude API Pricing: Haiku 4.5, Sonnet 4.6, and Opus 4.7 (April 2026) | BenchLM.ai

- Claude Opus 4.7 is generally available - GitHub Changelog

- Claude Opus 4.7 Leaks & Anthropic's Full-Stack AI Studio - Geeky Gadgets

- Claude Opus 4.7 is now available in Amazon Bedrock - AWS

- Pricing - Claude API Docs

- Release notes | Claude Help Center

- Anthropic Releases Claude Opus 4.7, Beats GPT-5.4, Gemini 3.1 Pro On Most Benchmarks

- What's new in Claude Opus 4.7 - Claude API Docs

- Claude Opus 4.7

- Claude Opus 4.7 Coming This Week: The Information Leak and New AI Design Tool

- Anthropic launches Claude Opus 4.7 with enhanced coding capabilities - Investing.com

- Inside the Opus 4.7 Leak and Anthropic’s Massive Claude Code 2.0 Upgrade

- Claude Opus 4.7: Complete Guide to Features ...

- Anthropic To Launch Claude Opus 4.7 This Week - Dataconomy

- Claude Opus 4.5: 67% Price Cut, Full Specs (2026)

- Claude Opus 4.7

- Anthropic launches Claude Opus 4.7 with enhanced coding capabilities

- Claude Opus 4.7 is about to be released: 5 key insights interpreted from the Vertex AI leak and The Information report - Apiyi.com Blog

- Introducing Claude Opus 4.7